The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

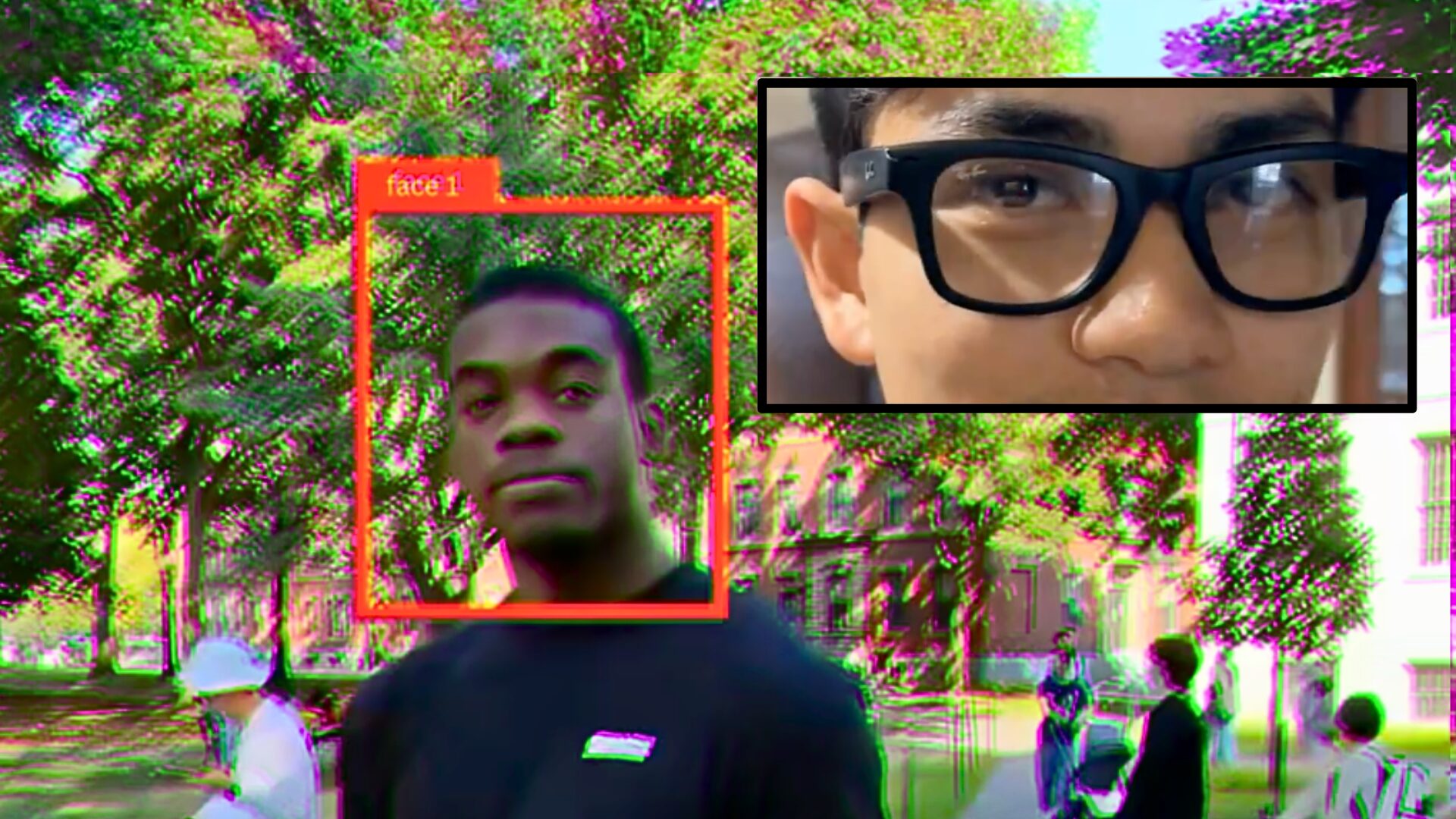

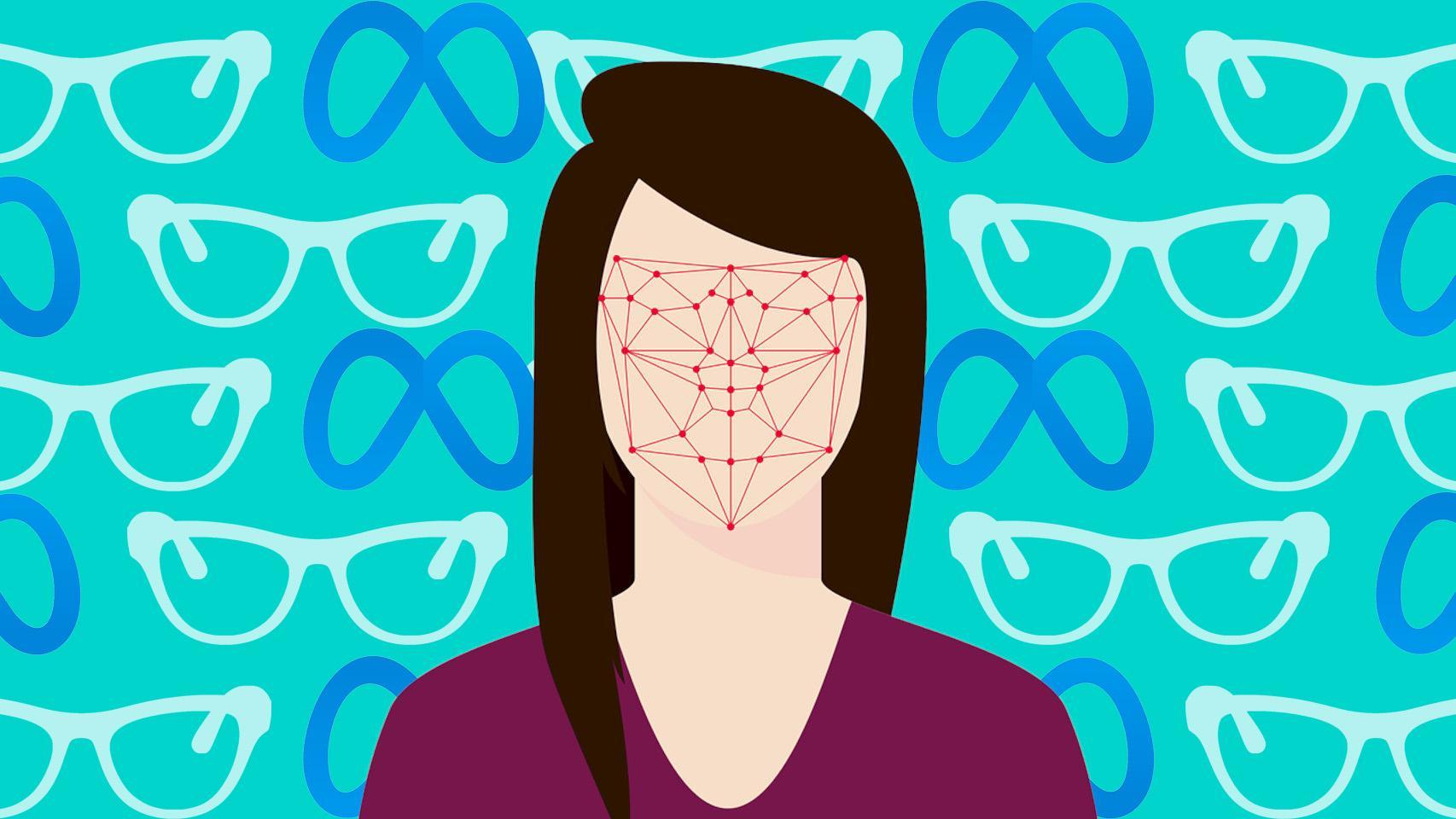

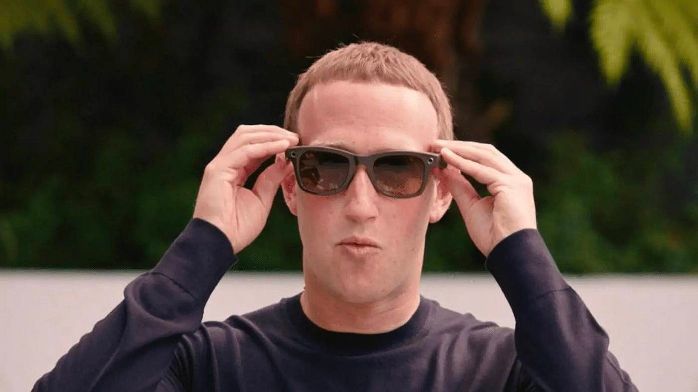

Two Harvard students demonstrated the privacy risks of Meta's Ray-Ban smart glasses by using them with AI-powered facial recognition to identify strangers and access their personal information without consent. The demonstration raises significant privacy concerns: the setup can generate AI-created profiles of the people it identifies, which could enable unauthorized surveillance and data collection. [AI generated]