The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

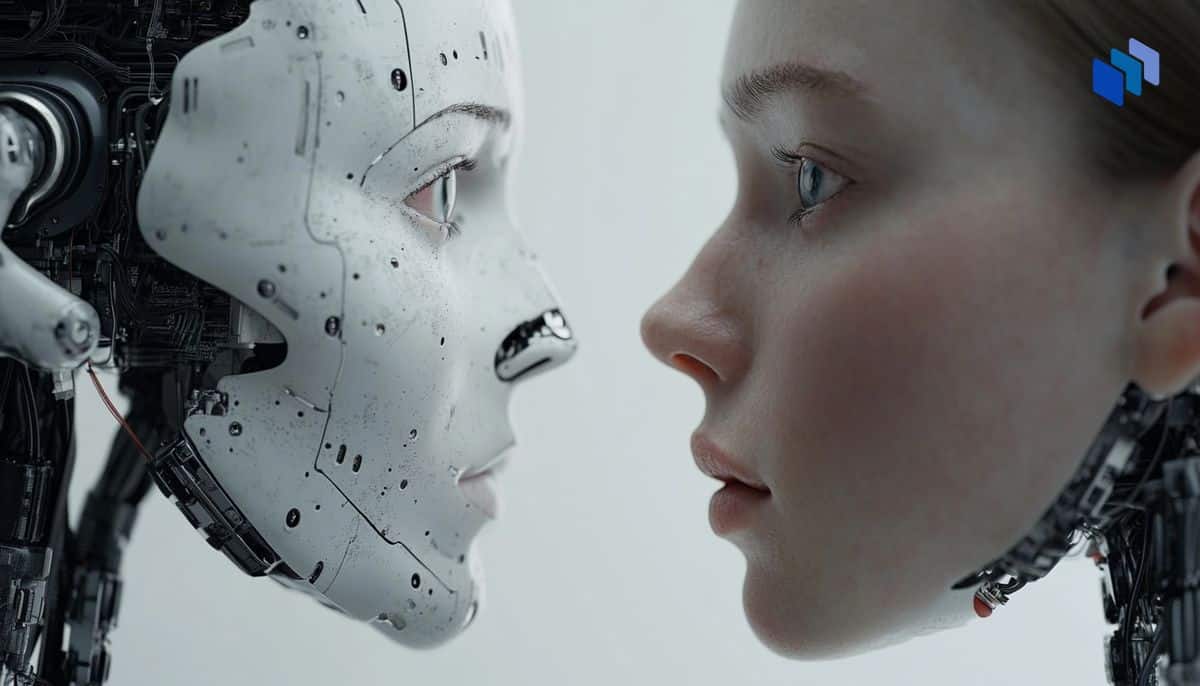

Cybercriminals are using ProKYC, an AI-powered deepfake tool, to bypass security protocols on cryptocurrency exchanges. The tool generates fake IDs and videos that pass facial recognition systems, facilitating money laundering and fraud. Cato Networks reports that this activity is increasing fraud in the crypto industry and poses a significant security threat. [AI generated]