The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

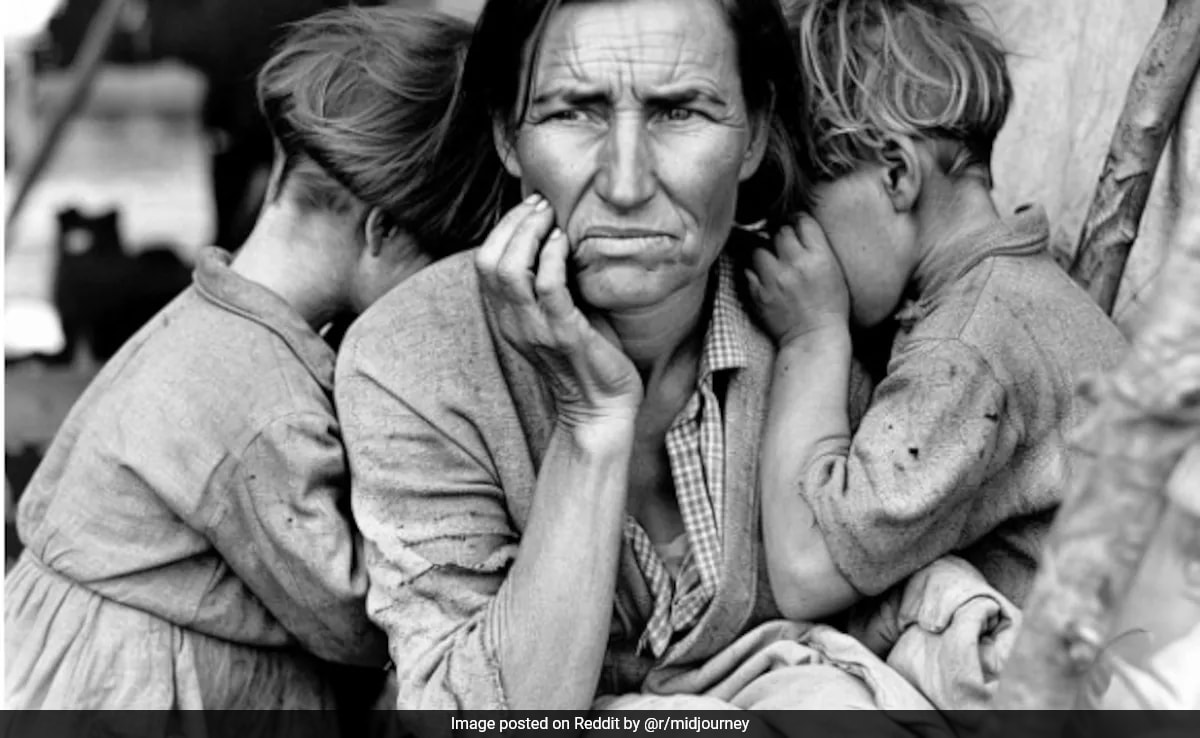

AI image generators such as Midjourney are producing fake historical photos that are widely shared and mistaken for real ones, spreading misinformation and distorting public understanding of history. Historians warn that this undermines trust in visual evidence and harms communities by eroding historical truth. [AI generated]