The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

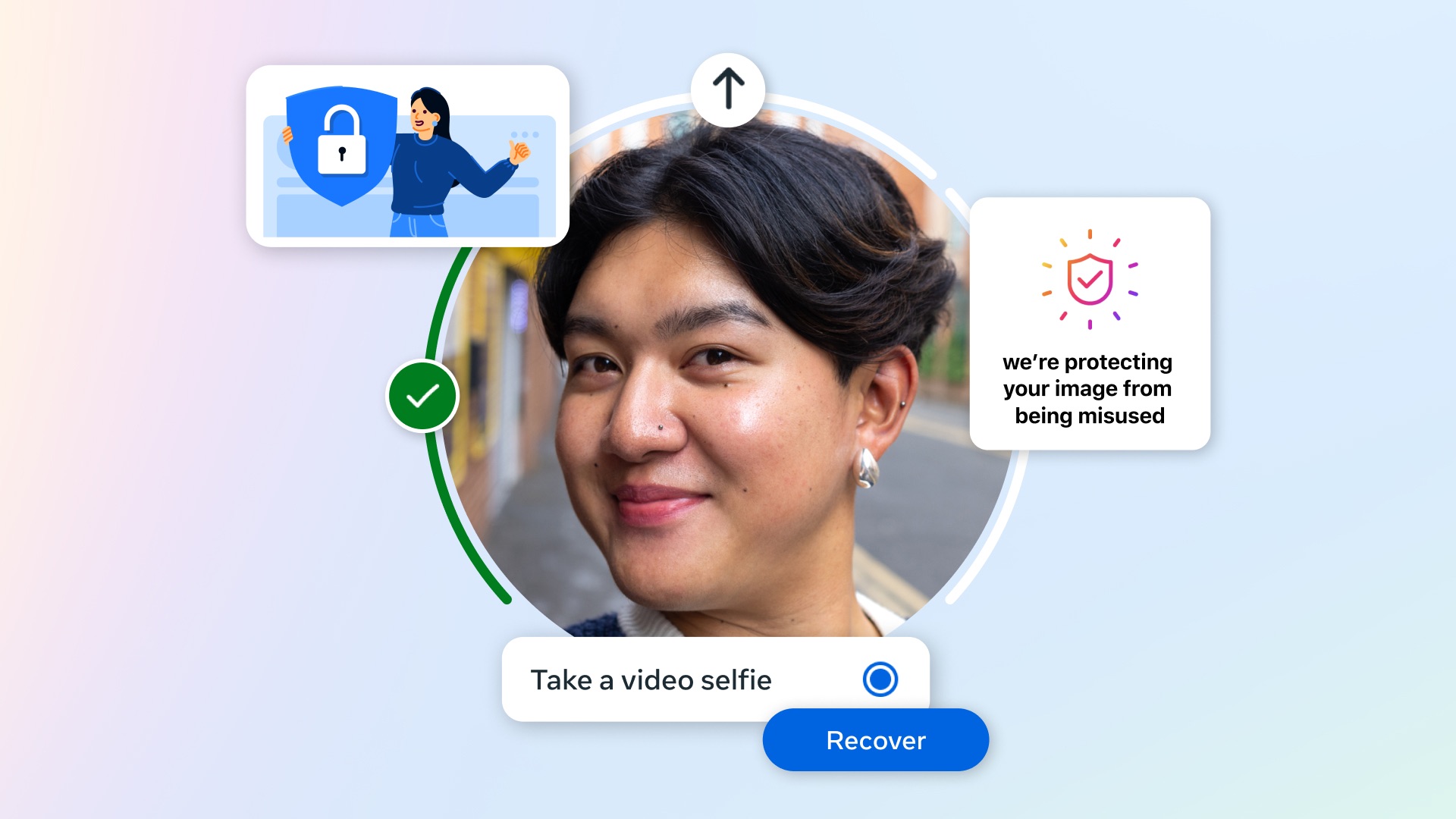

Generative AI is increasingly used in Japan to create deepfake images and audio for online scams that impersonate celebrities, leading to financial losses and theft of personal information. Meta is deploying facial recognition AI to detect and block such fraudulent ads, but privacy and security concerns about the use of biometric data remain. [AI generated]