The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

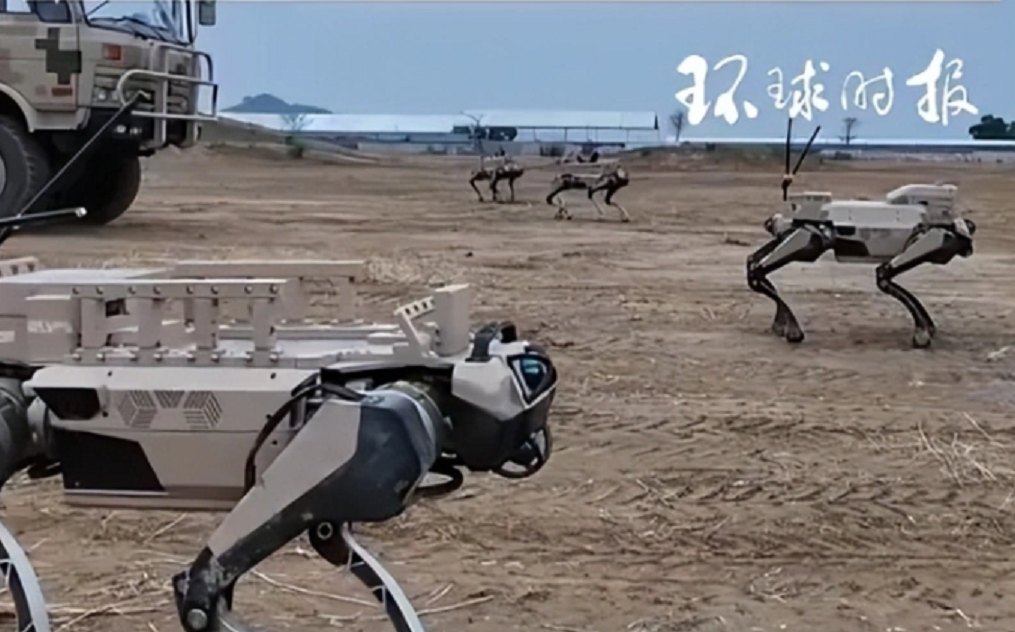

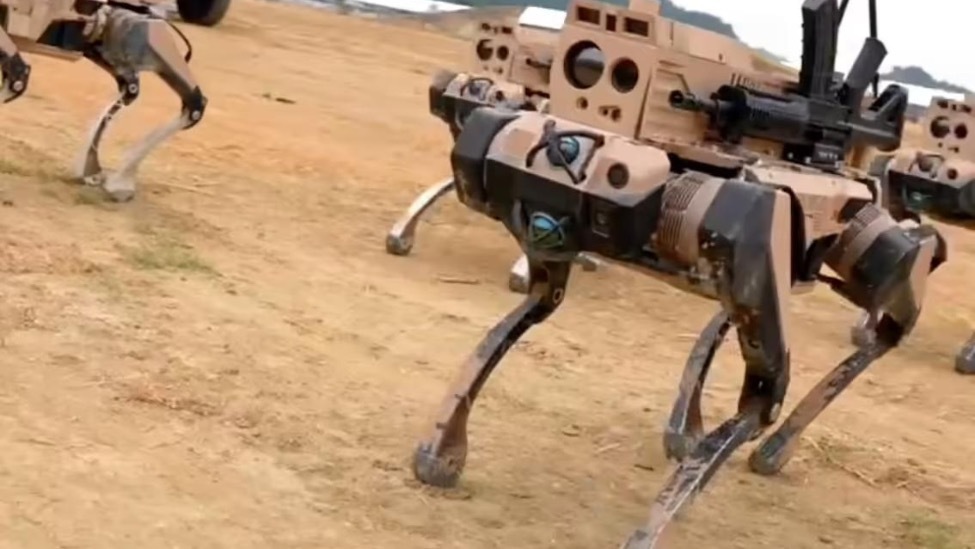

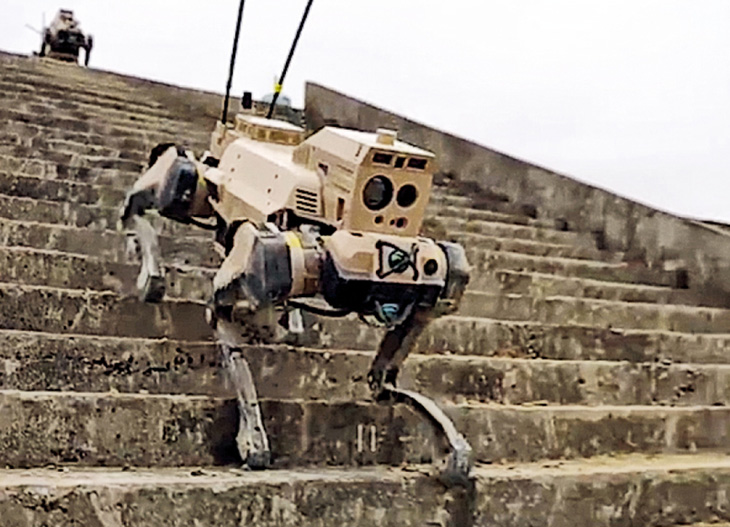

China unveiled its AI-driven "Machine Wolf" squad of autonomous ground vehicles at the Zhuhai Airshow, featuring scout, shooter and support variants capable of reconnaissance, precision rifle fire and supply transport. The multi-role robotic wolves coordinate with troops and promise to adapt to complex terrain and reduce soldier casualties on future battlefields. [AI generated]