The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

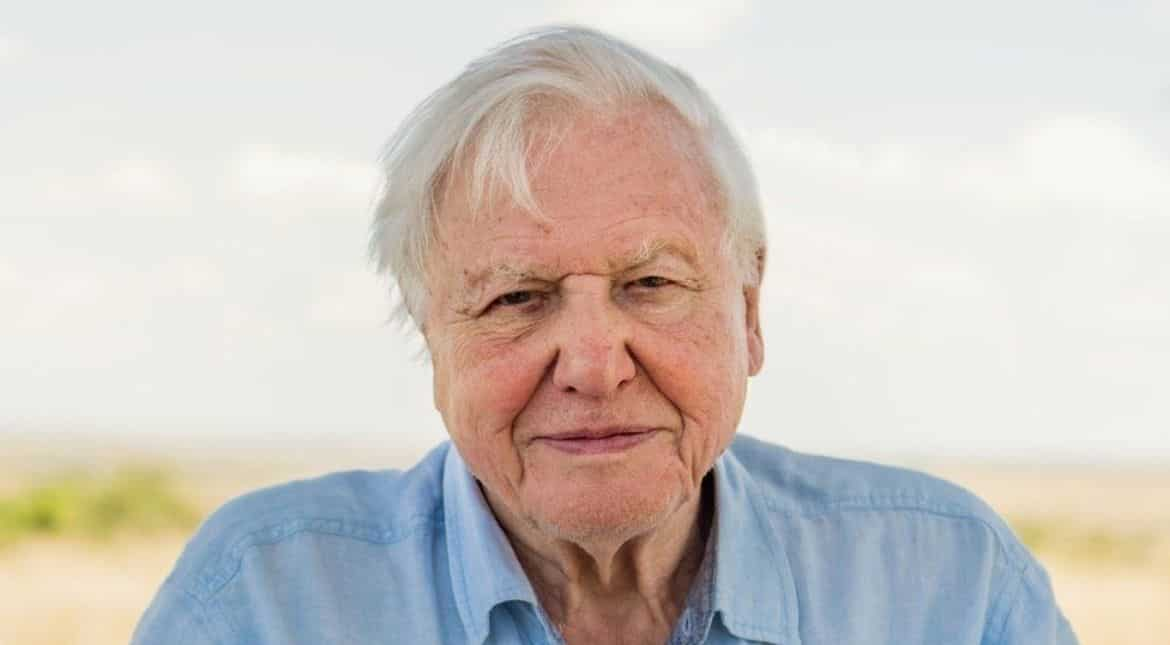

Sir David Attenborough is deeply disturbed by unauthorized AI clones of his voice being used on platforms like YouTube for partisan news reports. He considers this identity theft and a violation of intellectual property rights, as the AI-generated voice closely mimics his own, potentially misleading audiences. [AI generated]