The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

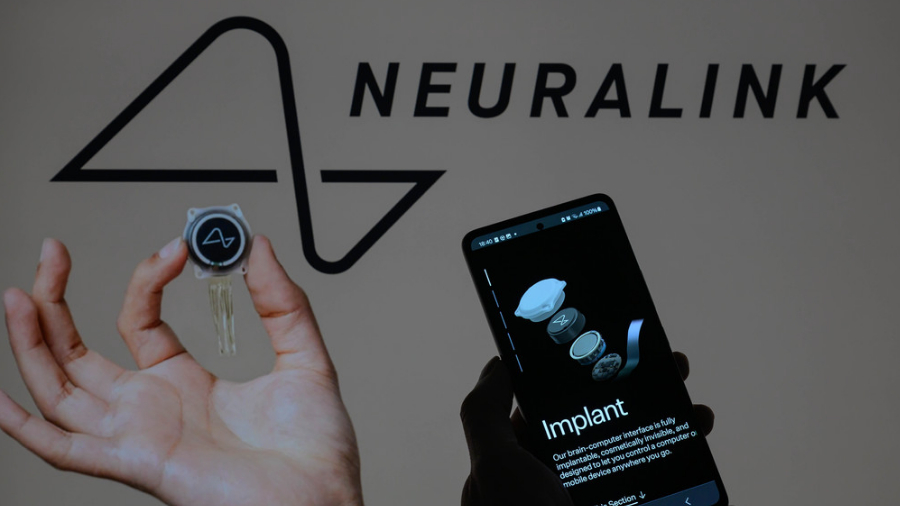

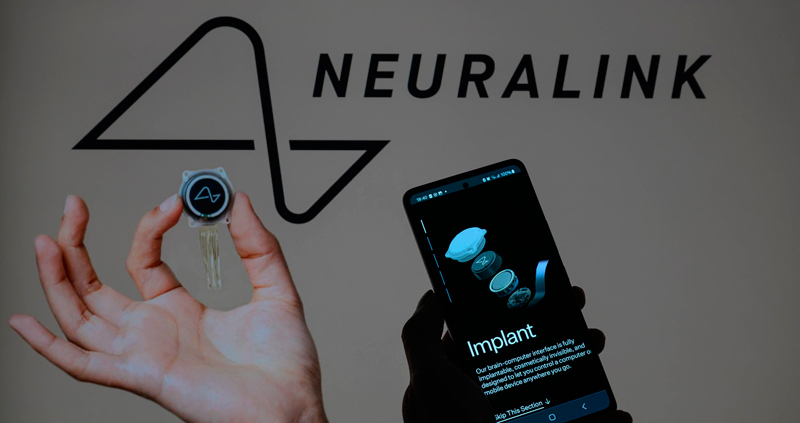

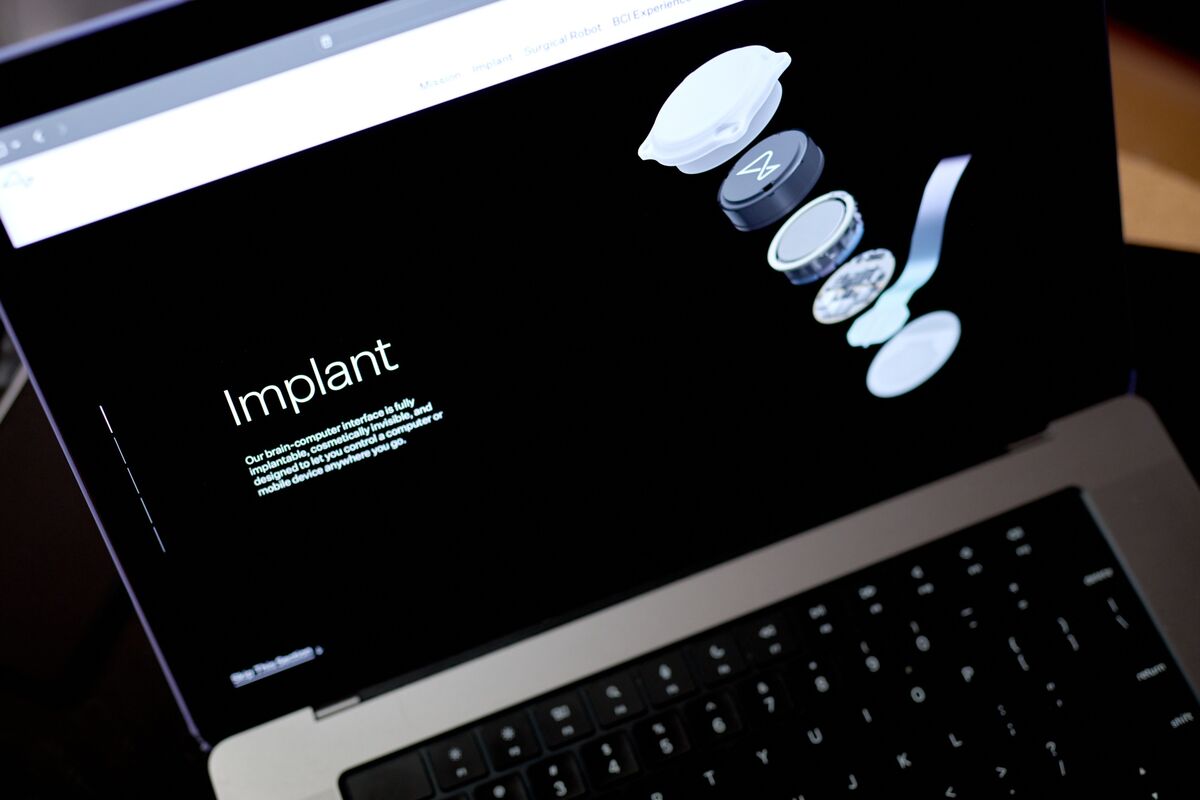

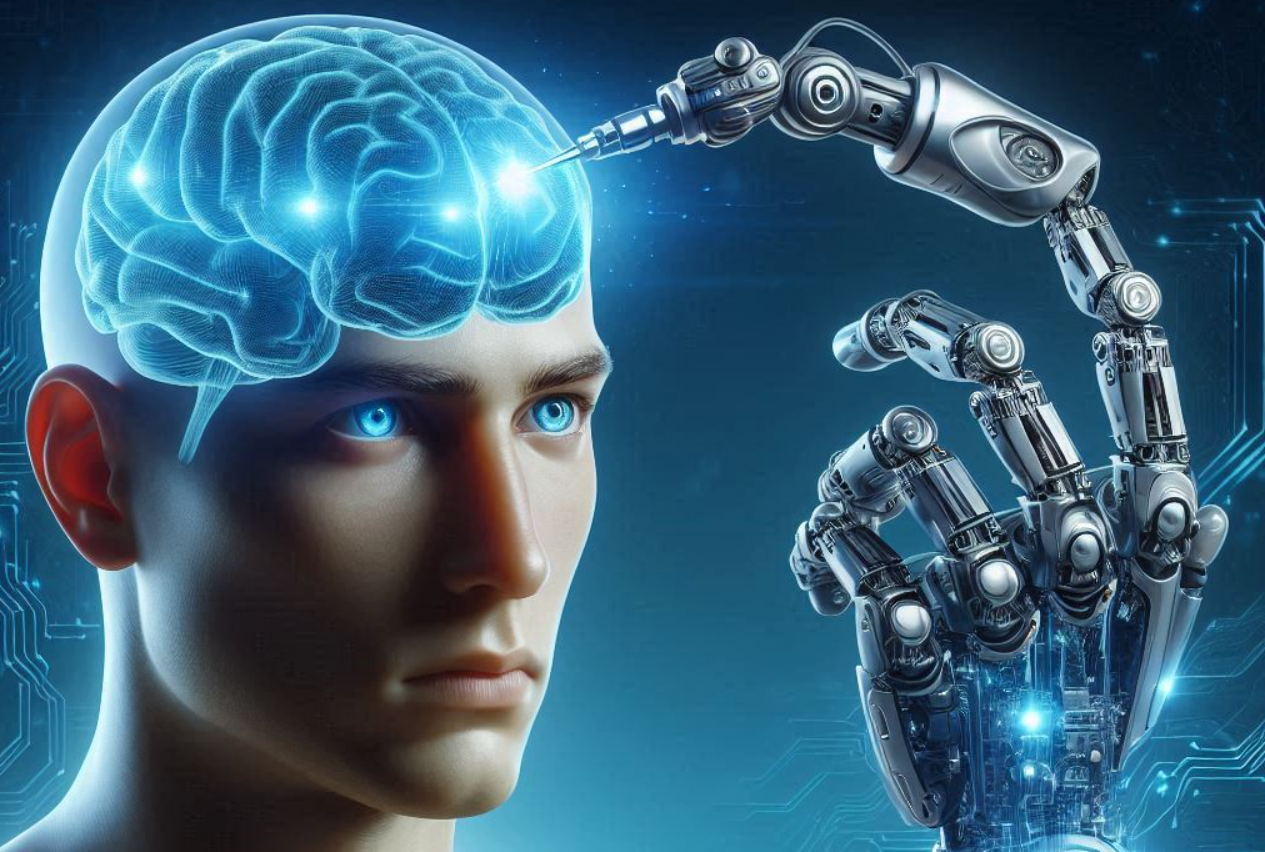

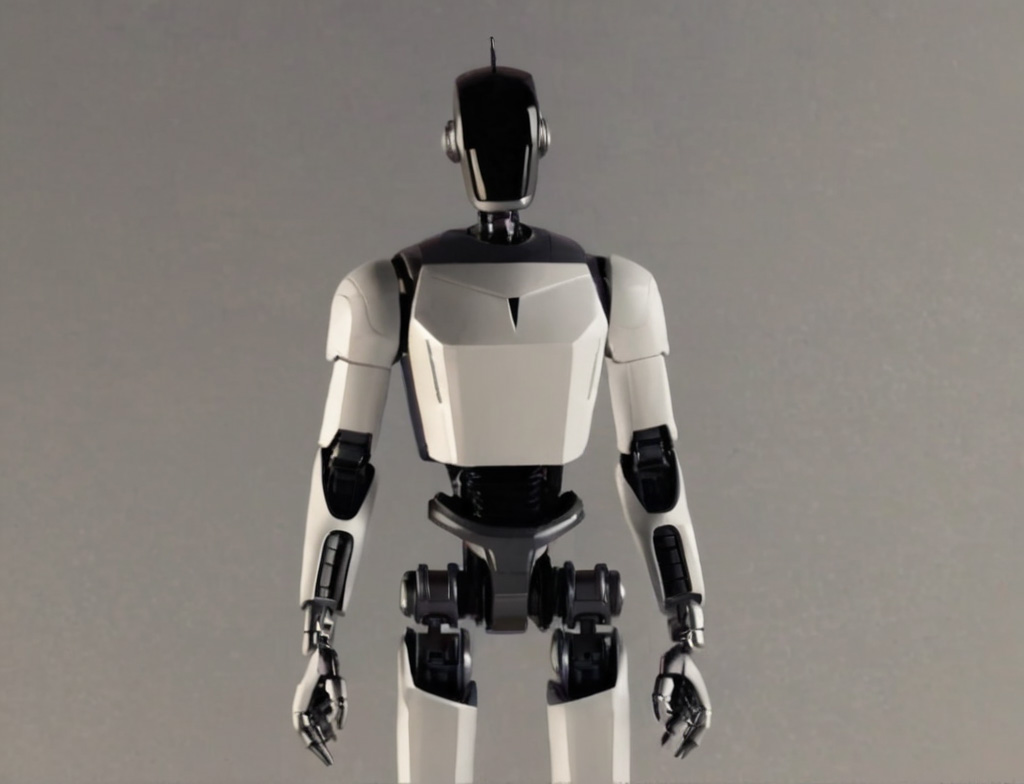

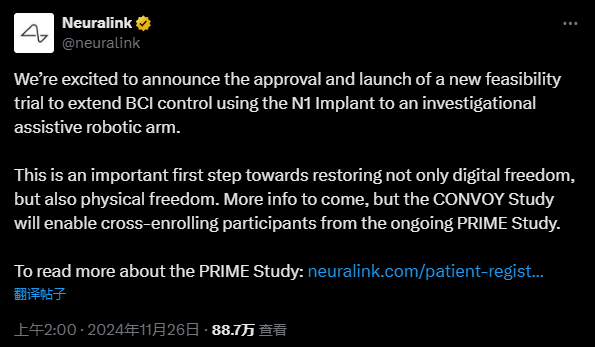

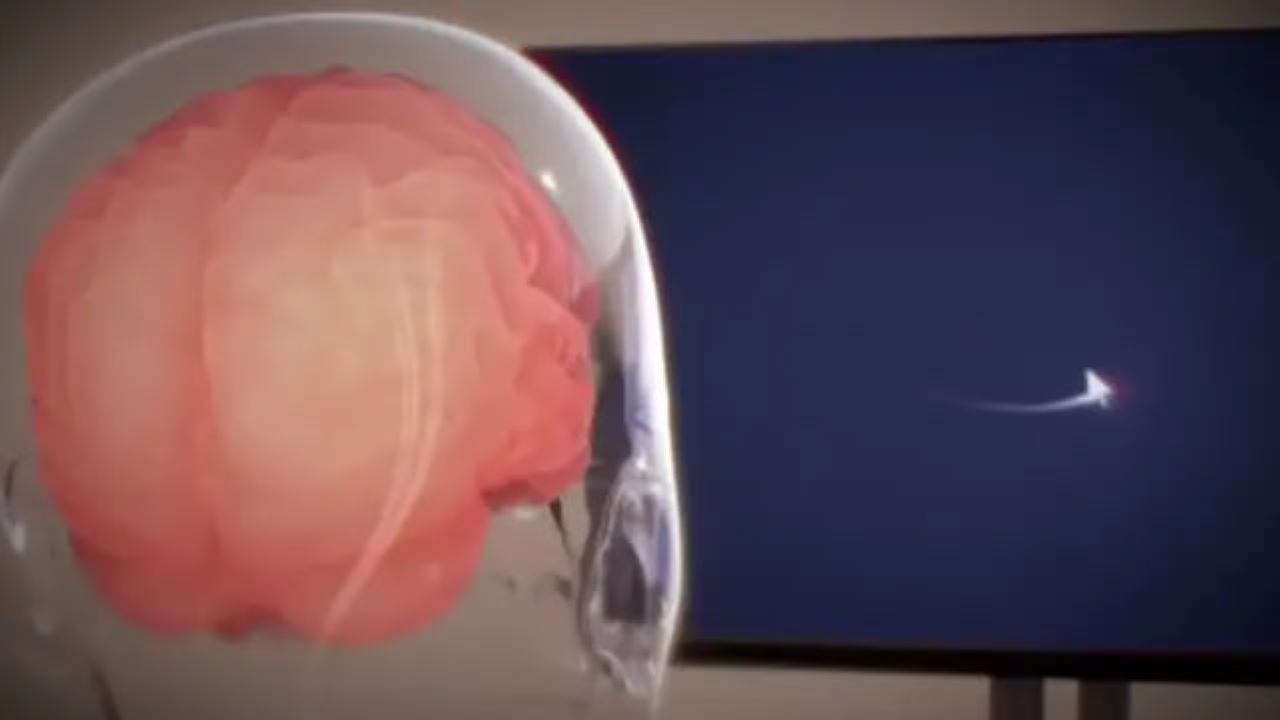

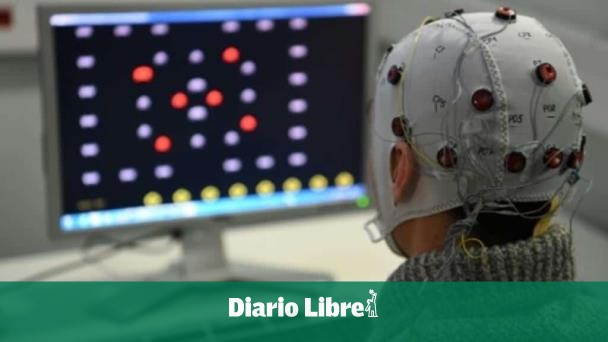

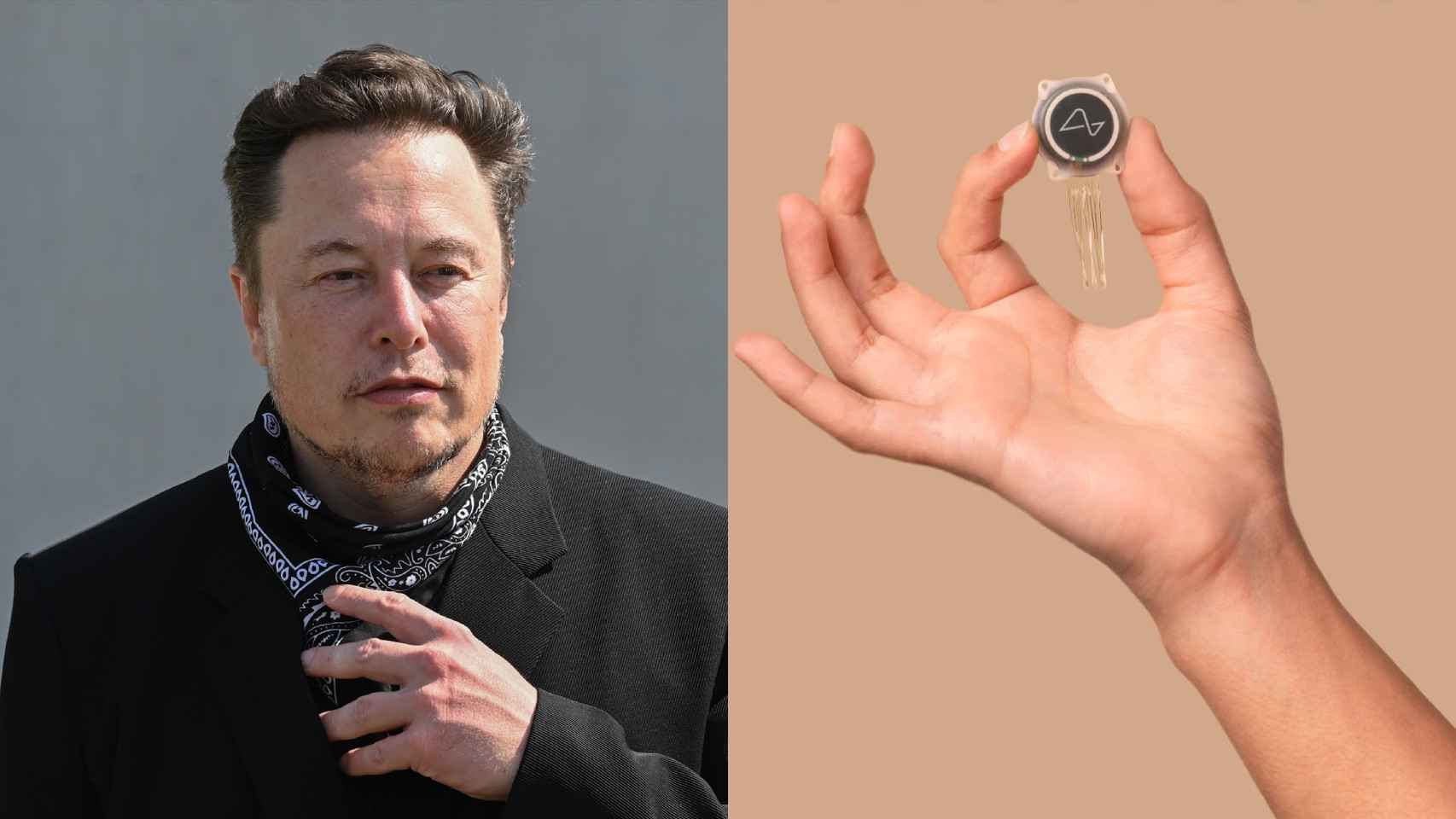

Neuralink, founded by Elon Musk, received FDA approval to test its N1 brain-computer interface in humans, enabling paralyzed patients to control robotic arms using neural signals. The feasibility trials (PRIME and follow-up CONVOY) will assess the wireless implant’s safety, decoding accuracy and potential to restore physical autonomy. [AI generated]
