The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

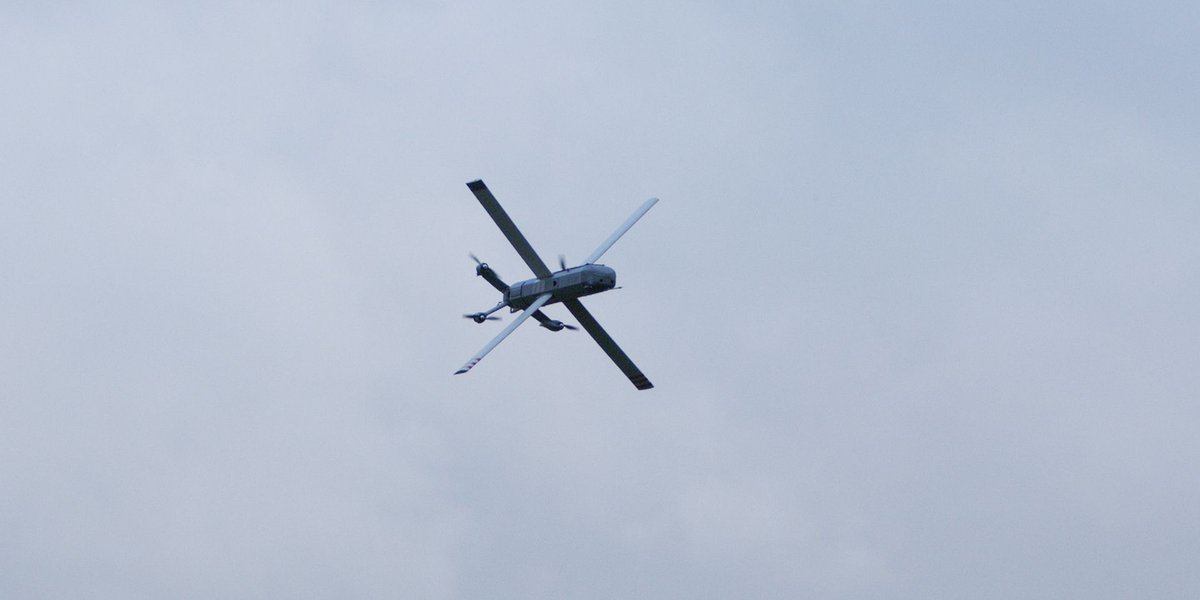

German AI company Helsing has launched the HX-2, an AI-powered attack drone, for deployment in Ukraine. The drone can autonomously identify and attack targets, is resistant to electronic warfare, and can operate in swarms. It is produced at low cost using 3D printing. The system raises concerns about potential harm and human rights violations. [AI generated]
