The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

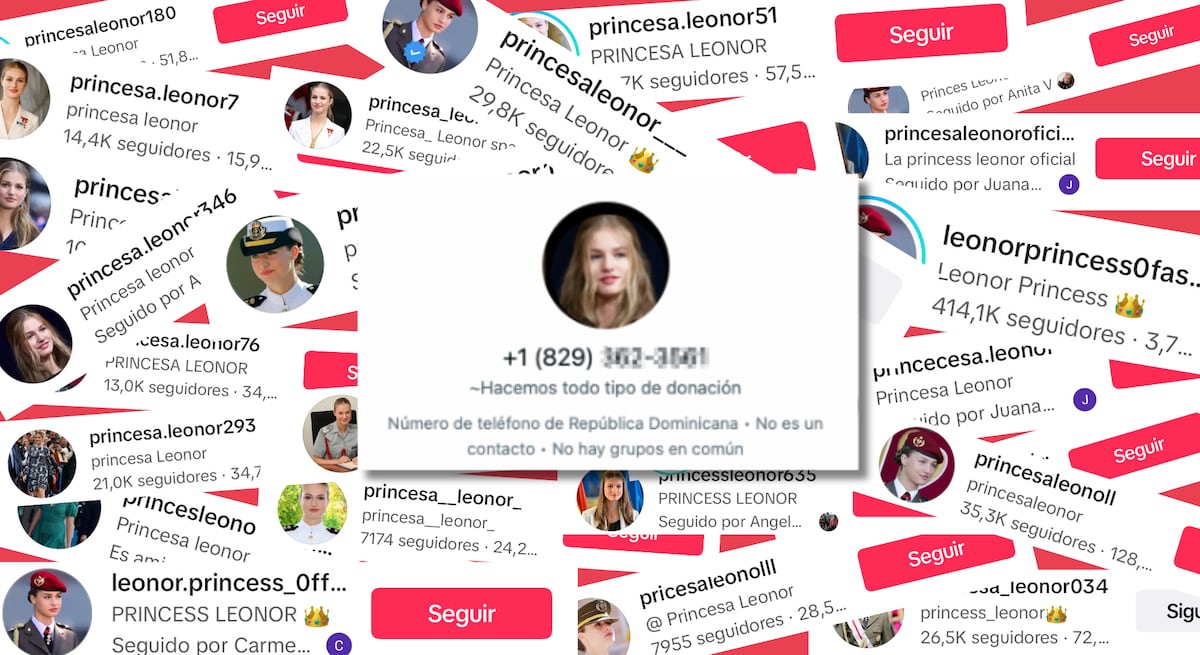

Criminals use AI-generated deepfake videos and audio of Princess Leonor on TikTok and Facebook to promise large money transfers. Victims, mainly in Latin America, are lured into paying fees of €100–200 under the pretext of taxes or transfer costs. The sophisticated AI impersonation has defrauded dozens of people, causing significant financial losses. [AI generated]