The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

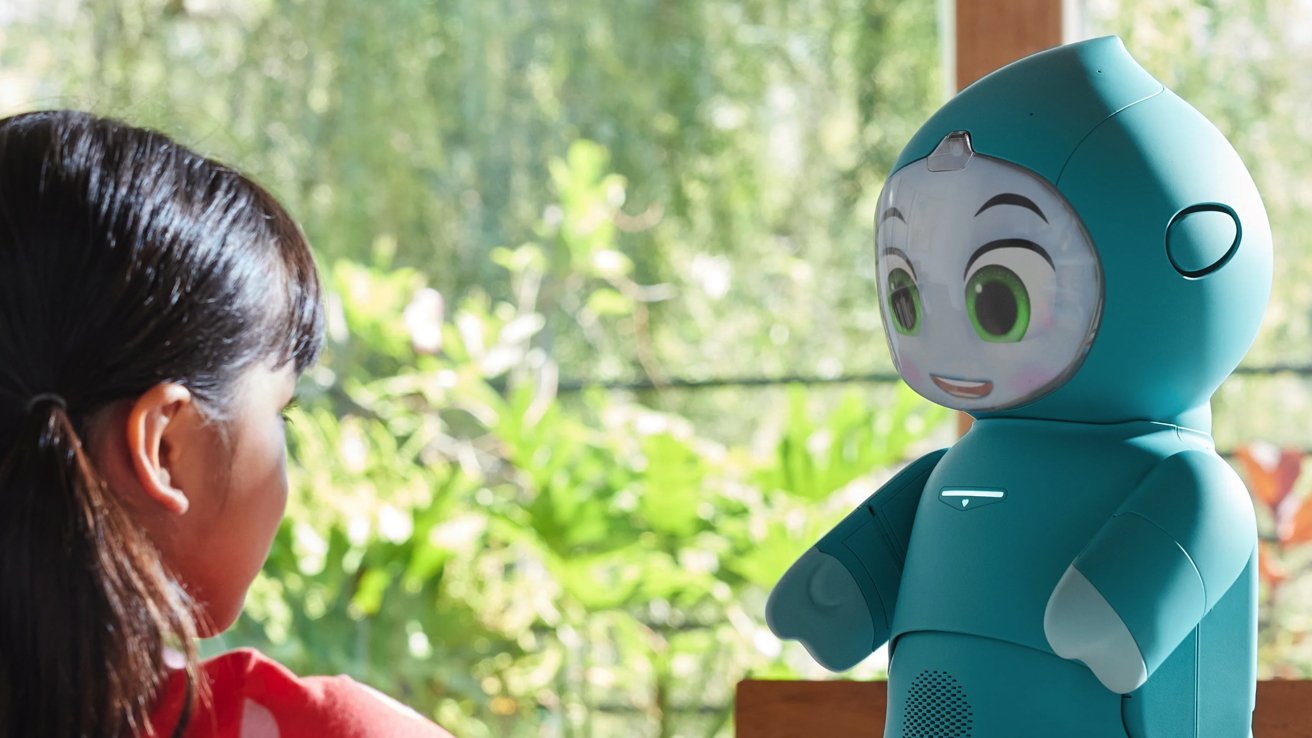

Embodied’s abrupt shutdown has rendered $799 Moxie AI robots, marketed as emotional-support companions for autistic children, completely nonfunctional, with refunds denied for most owners. Families face emotional distress and financial loss, underscoring the vulnerability of proprietary, cloud-reliant AI devices dependent on company solvency. [AI generated]