The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

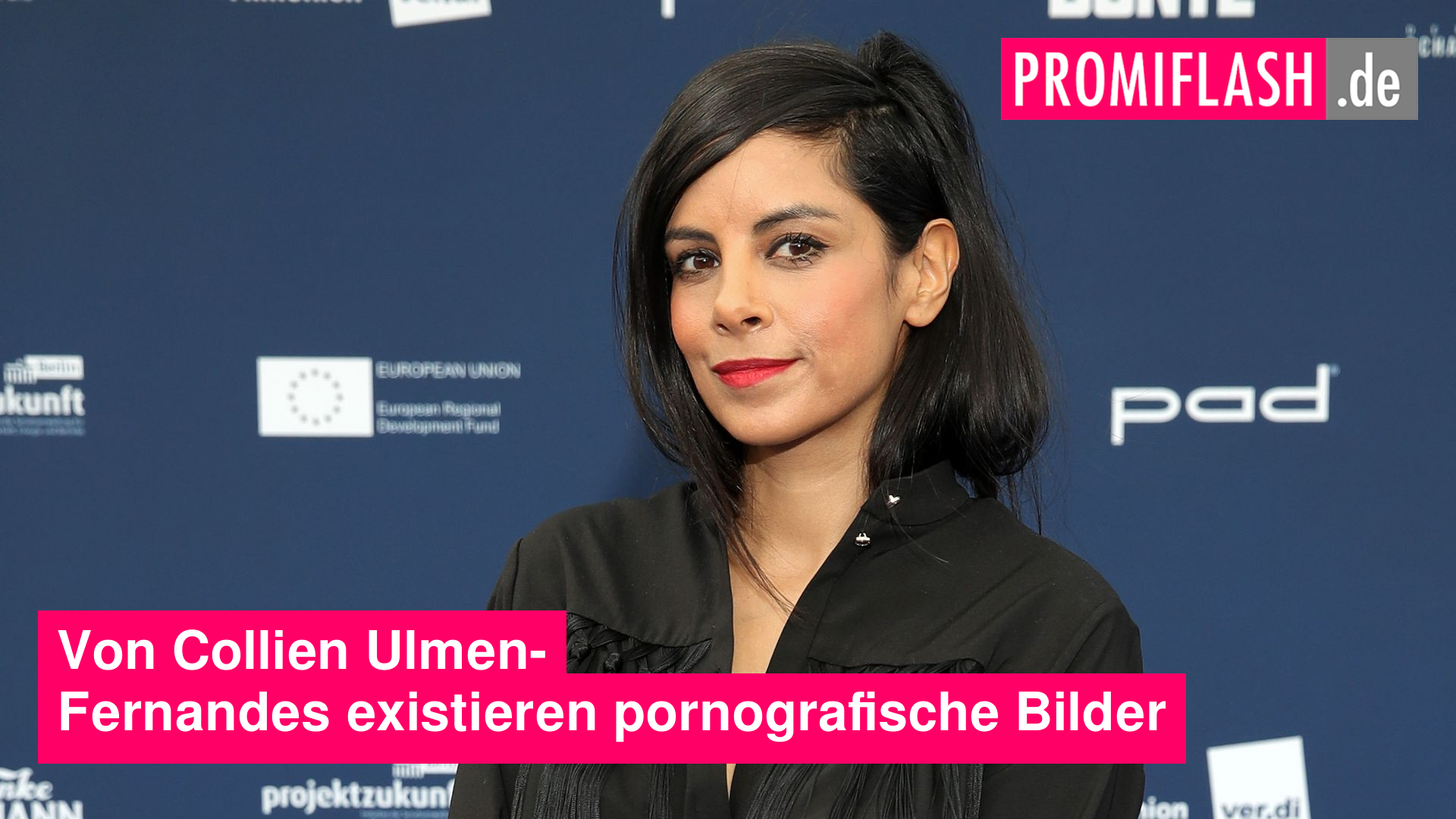

Collien Ulmen-Fernandes, a German actress, discovered deepfake pornography of herself online during a ZDF documentary investigation. AI technology was used to superimpose her face onto explicit images, leading to privacy violations and financial exploitation. This incident highlights the misuse of AI in creating harmful deepfake content. [AI generated]