The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

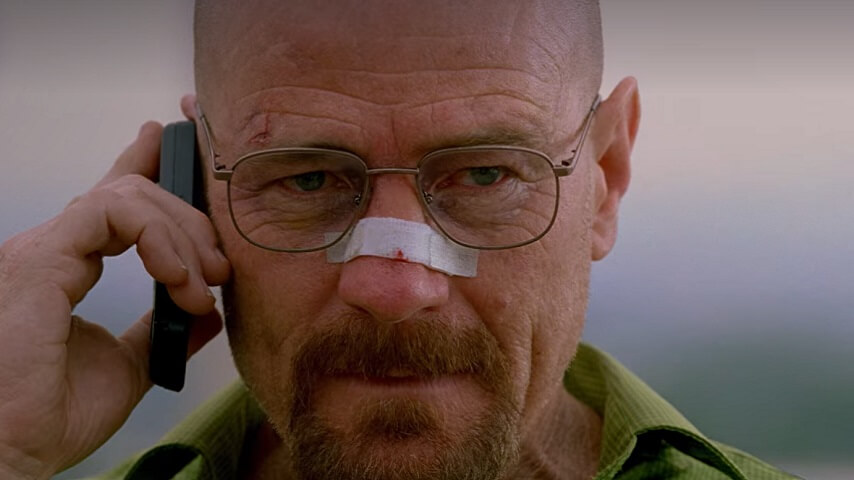

The Writers Guild of America has urged major Hollywood studios to take legal action against AI companies that use copyrighted film and TV subtitles to train their models without permission. The Guild accuses tech firms such as Apple and Nvidia of intellectual property theft and demands that the studios defend writers' rights against this unauthorized use. [AI generated]