The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

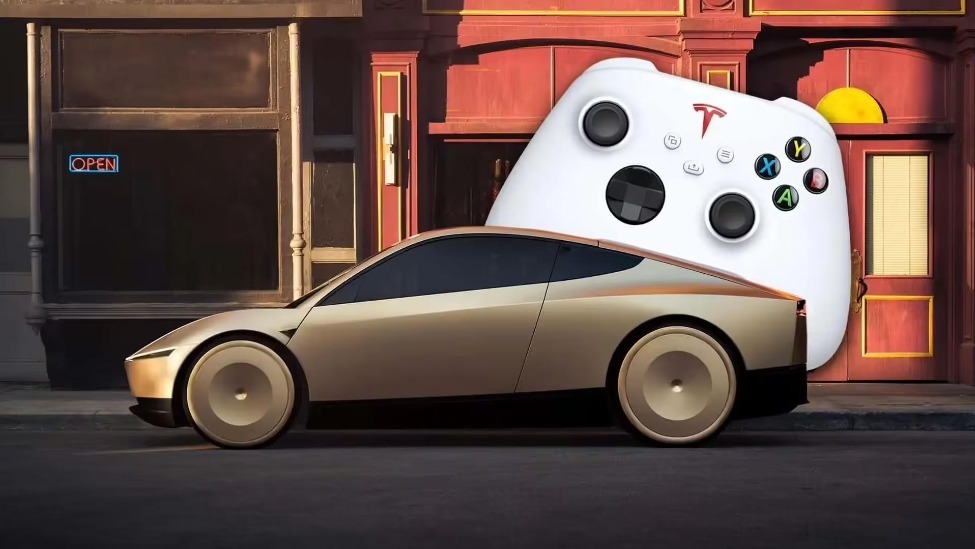

Tesla’s Cybercab is an autonomous taxi with no steering wheel or pedals that relies on AI-based full self-driving. It costs under $30k and uses 50% fewer parts than the Model 3. Production is planned by 2026, with road tests in 2025. The vehicle allows remote human intervention via a gamepad. It marks a significant step toward autonomous taxis and introduces potential AI hazards. [AI generated]