The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

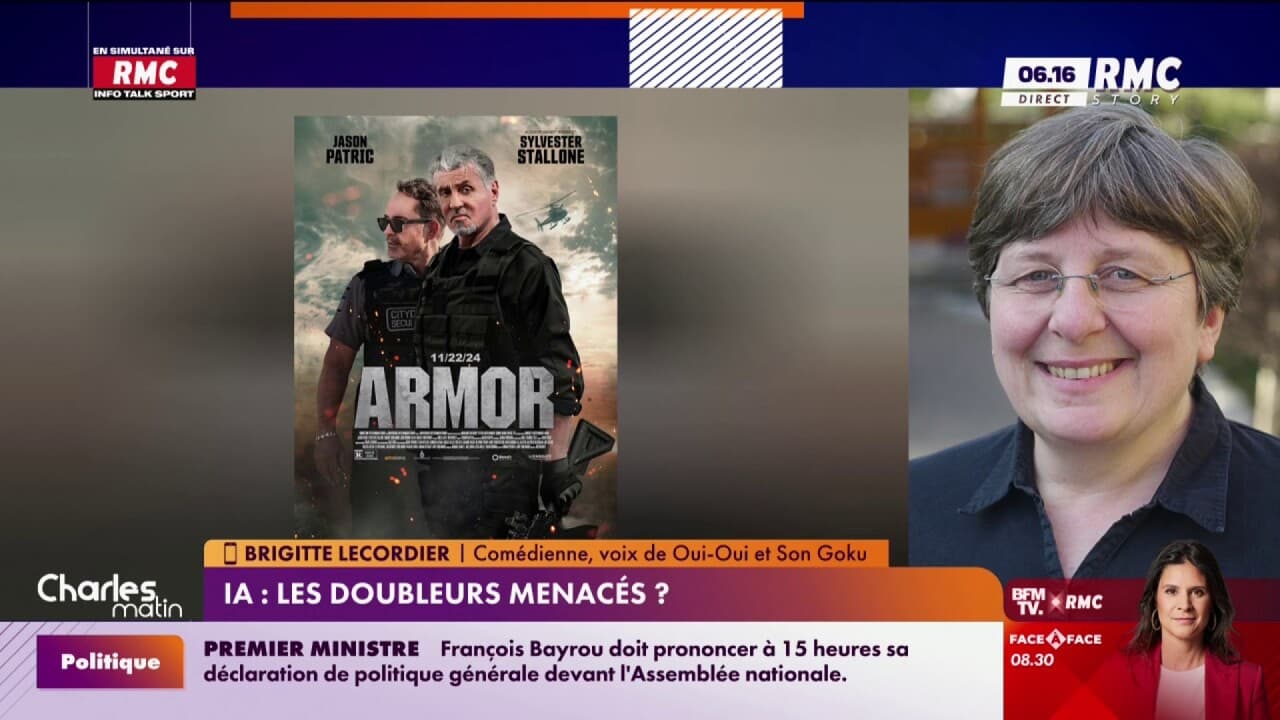

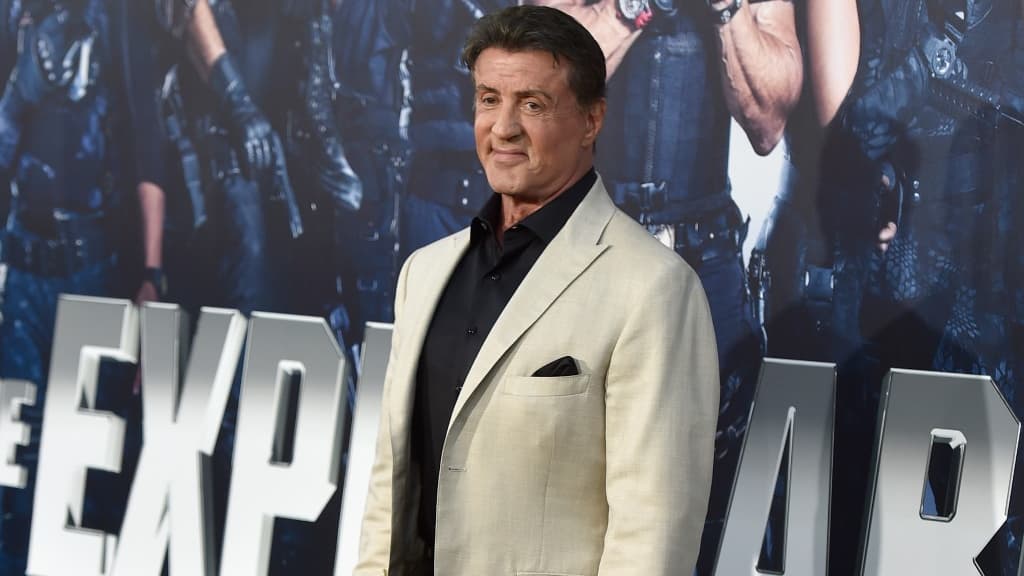

Eleven Labs has created an AI-generated voice clone of the late voice actor Alain Dorval, known as the French dubbing voice of Sylvester Stallone. The clone has raised concerns among voice actors about intellectual property and labor rights, and Brigitte Lecordier has spoken out in favor of human dubbing. The AI-generated voice was shared online, prompting widespread reactions. [AI generated]
