The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

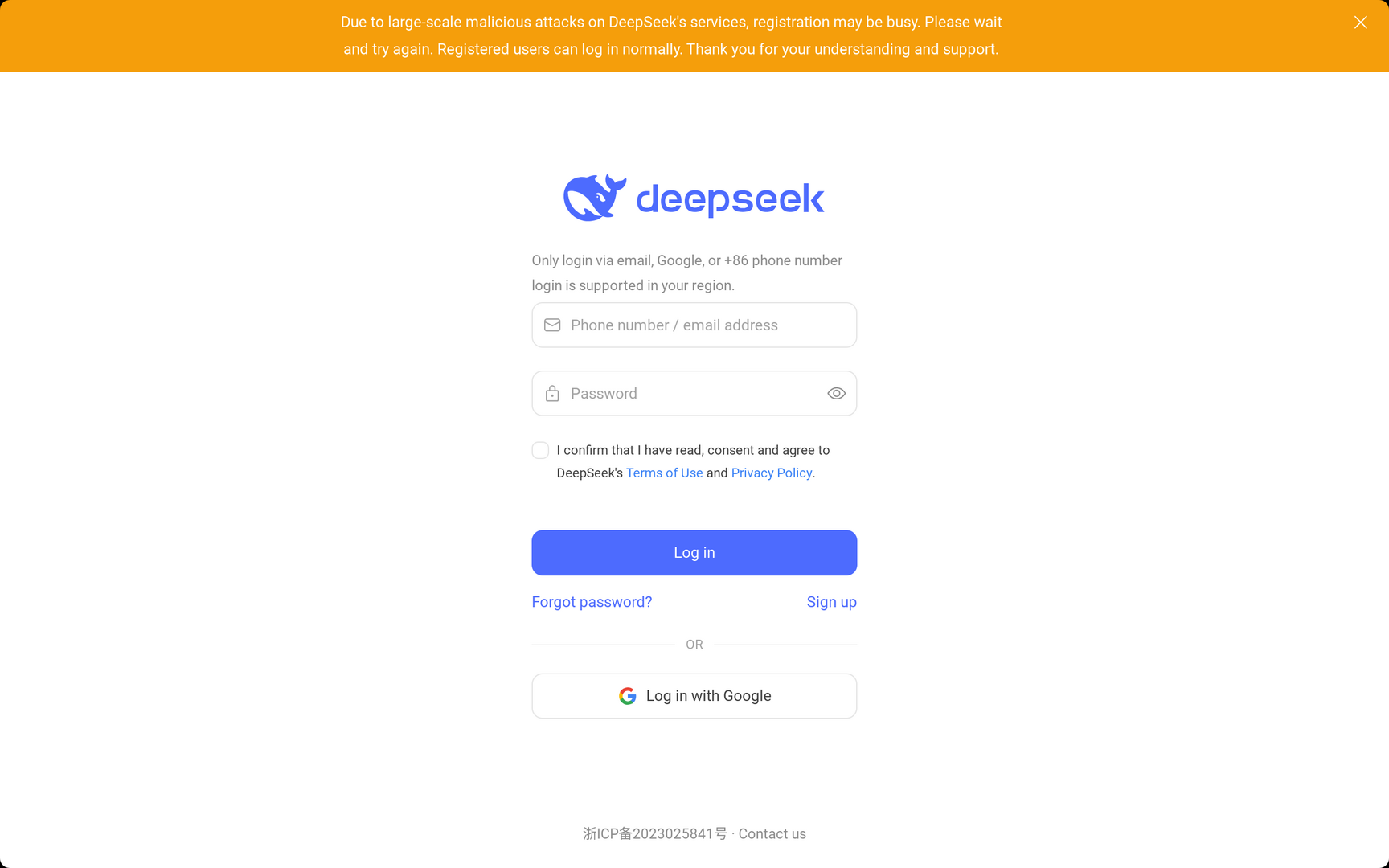

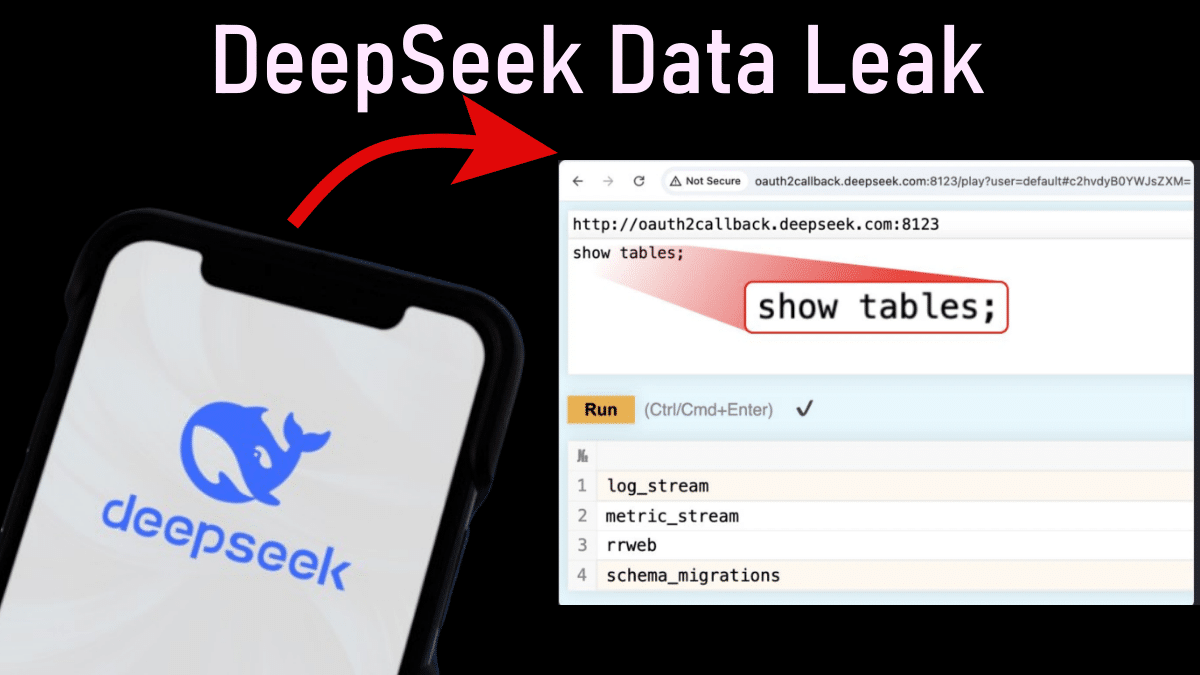

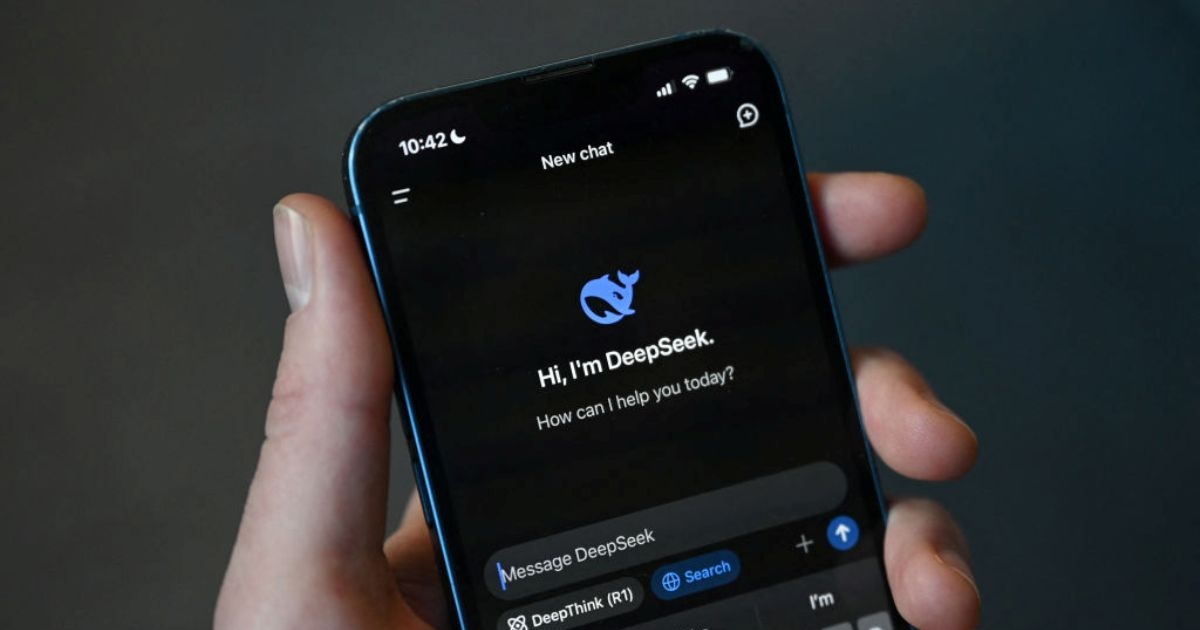

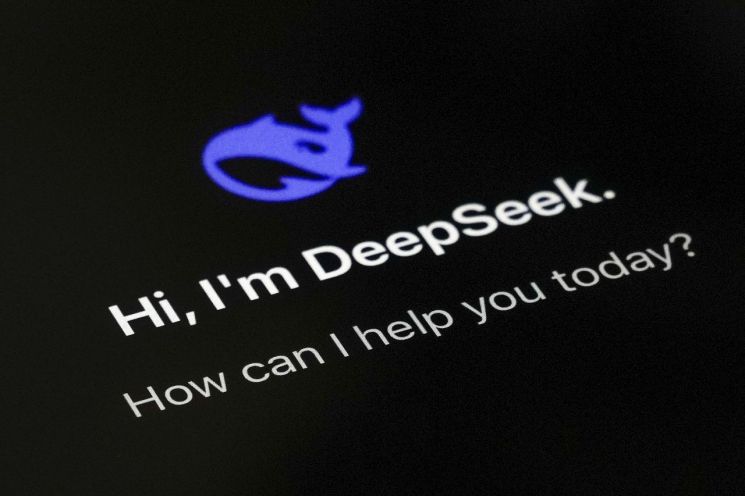

Wiz, a cybersecurity firm, discovered a security vulnerability at DeepSeek, a Chinese AI startup, that exposed sensitive data online. The exposed data included chat records and API secrets, allowing unauthorized access. DeepSeek quickly addressed the issue, but concerns about data privacy remain, prompting inquiries from Italian and Australian regulators. [AI generated]
