The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

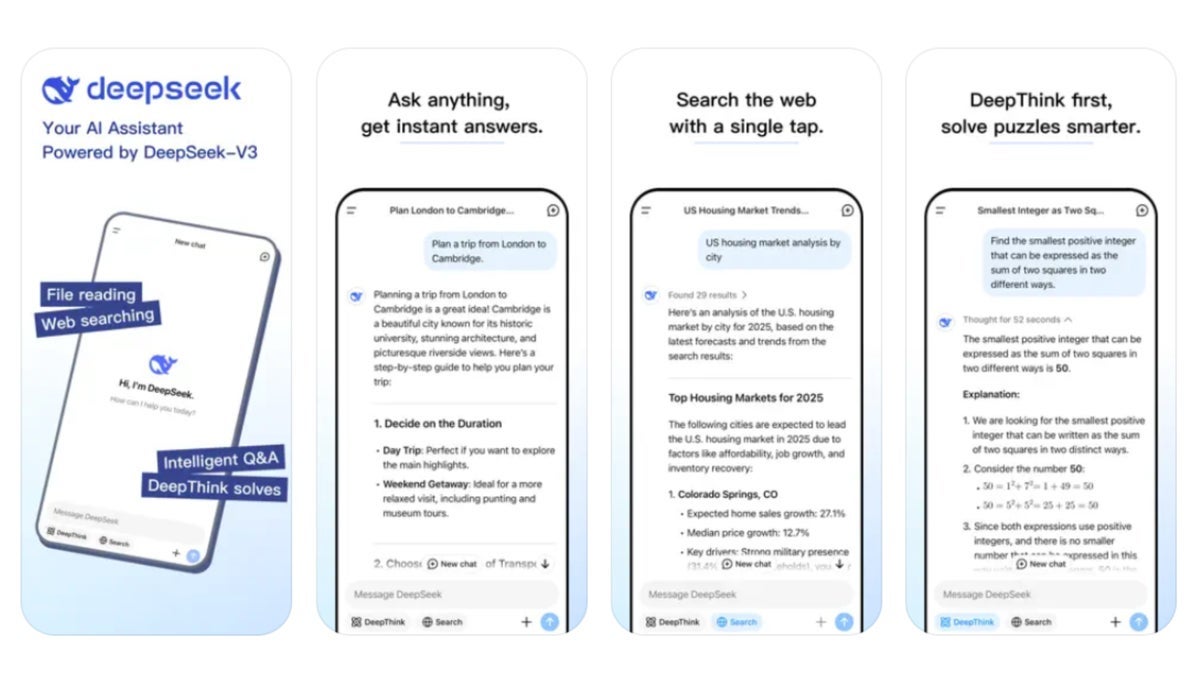

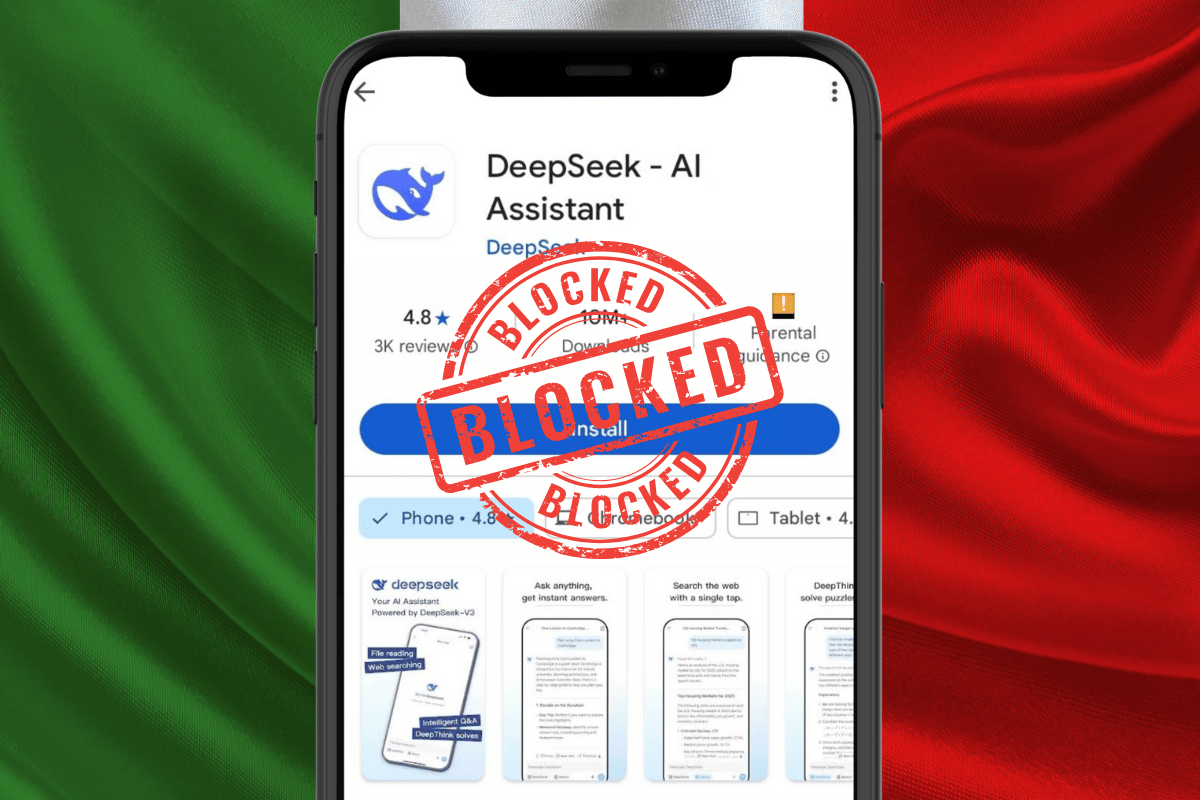

Italy's data protection authority, the Garante, has demanded that Chinese AI company DeepSeek clarify its data collection practices and storage locations, citing privacy risks to millions of Italians. DeepSeek's app has been temporarily removed from Italian app stores. Australia and the US have also expressed concerns over potential privacy and security issues. [AI generated]
