The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

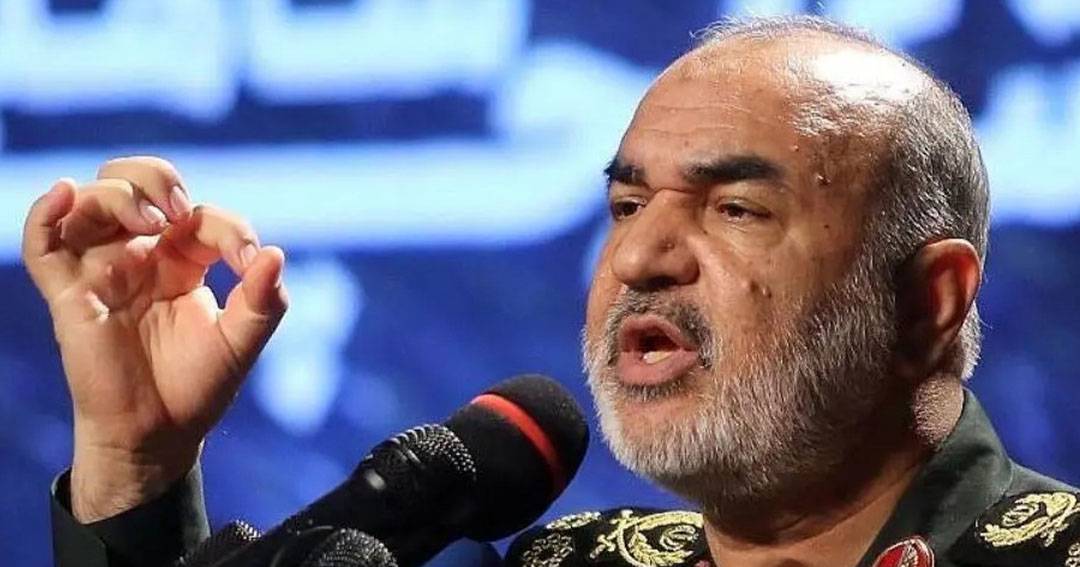

Iran's Revolutionary Guard leader, Major General Hossein Salami, announced the use of AI in military operations to target ships and aircraft accurately and swiftly. While emphasizing ethical considerations, Salami highlighted AI's role in identifying targets without harming innocent crew members. The announcement reflects potential future military applications of AI technology. [AI generated]