The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

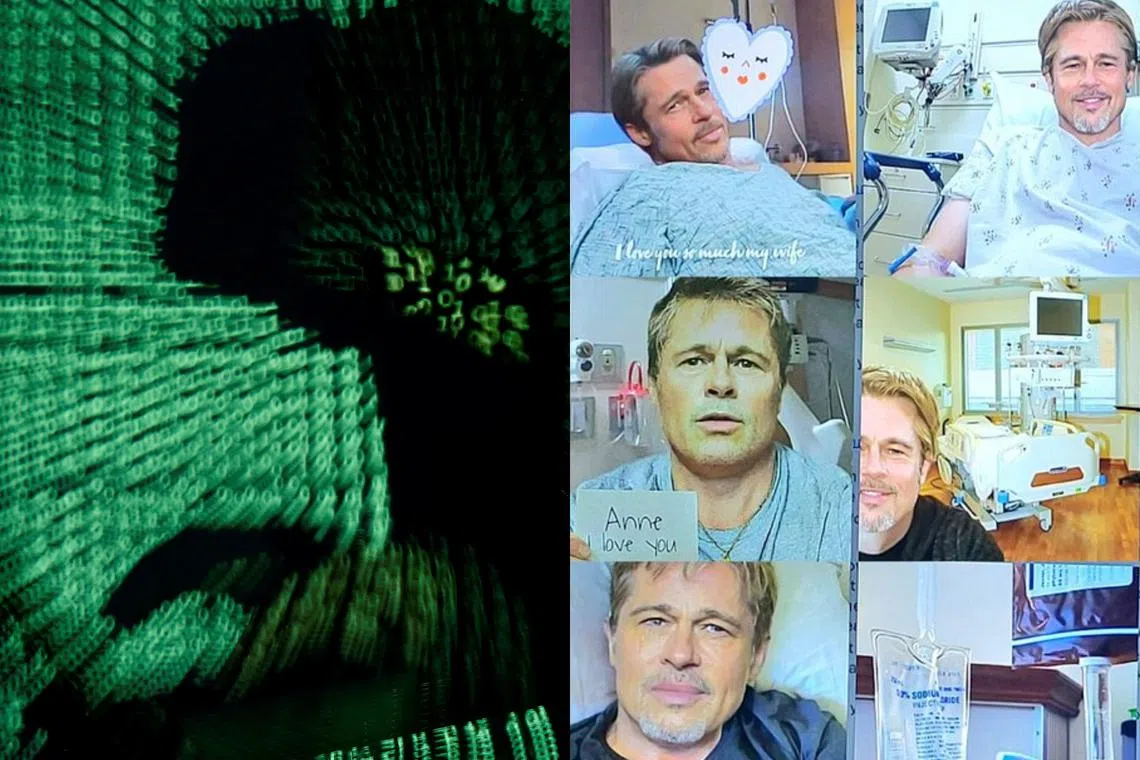

AI-generated deepfakes and fake emails are increasingly used in sophisticated online scams that cause financial harm. In France, over 130,000 online scams were recorded in 2023, an 8% increase over the previous year. Notable cases include a woman who lost €830,000 to a scammer posing as Brad Pitt, and fraudulent donation appeals for Los Angeles fire victims. [AI generated]