The information displayed in the AI Incidents Monitor (AIM) should not be reported as representing the official views of the OECD or of its member countries.

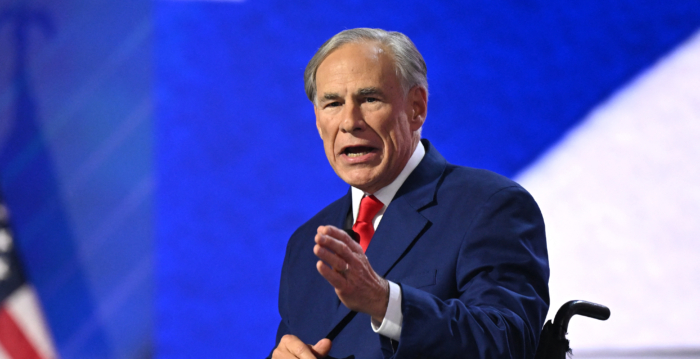

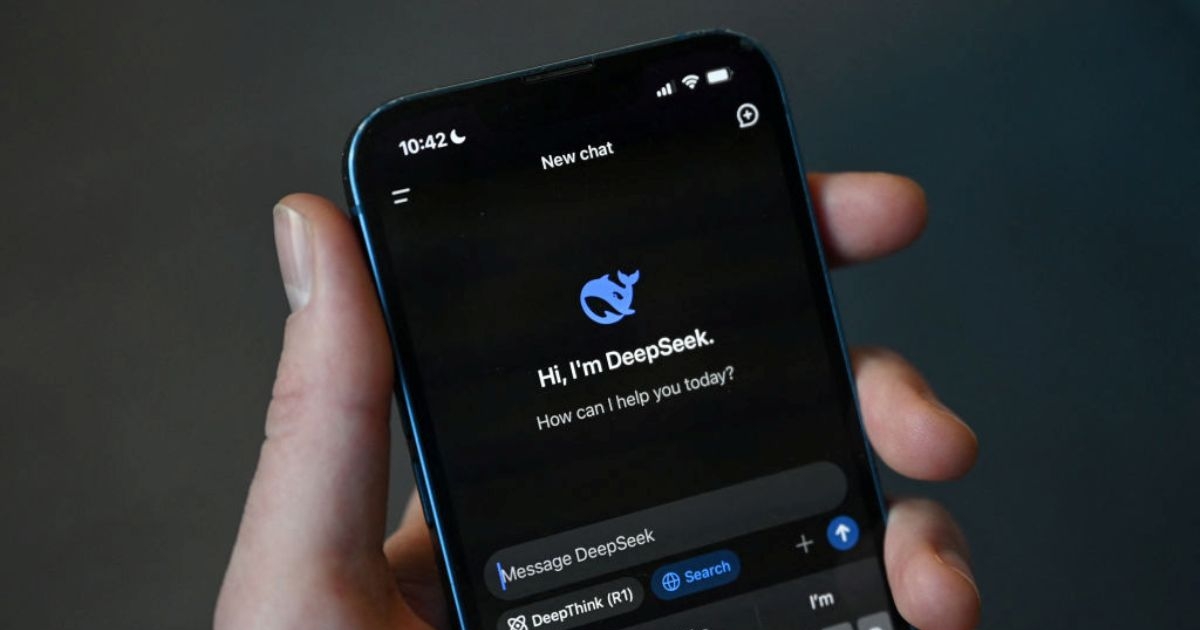

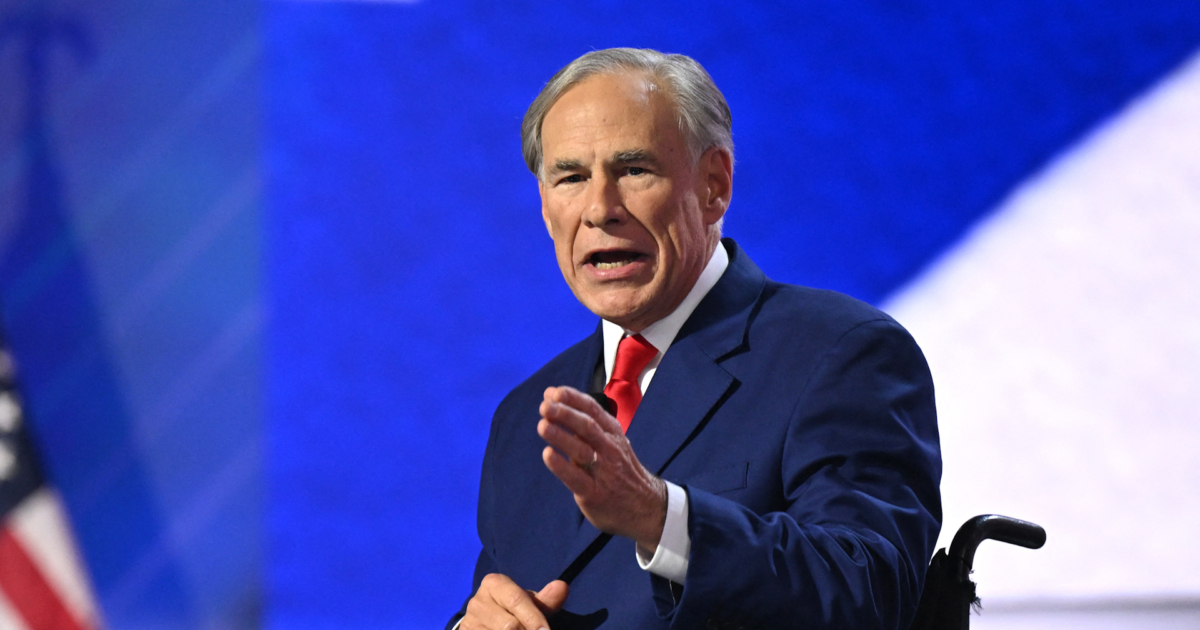

Texas Gov. Greg Abbott banned the Chinese AI chatbot DeepSeek and related apps on state government devices, citing the risks of data collection and of Chinese Communist Party (CCP) infiltration of critical infrastructure. Taiwan’s Digital Development Department likewise barred public agencies from using DeepSeek. These preventive measures sparked domestic and industry debate over security versus openness.[AI generated]