The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

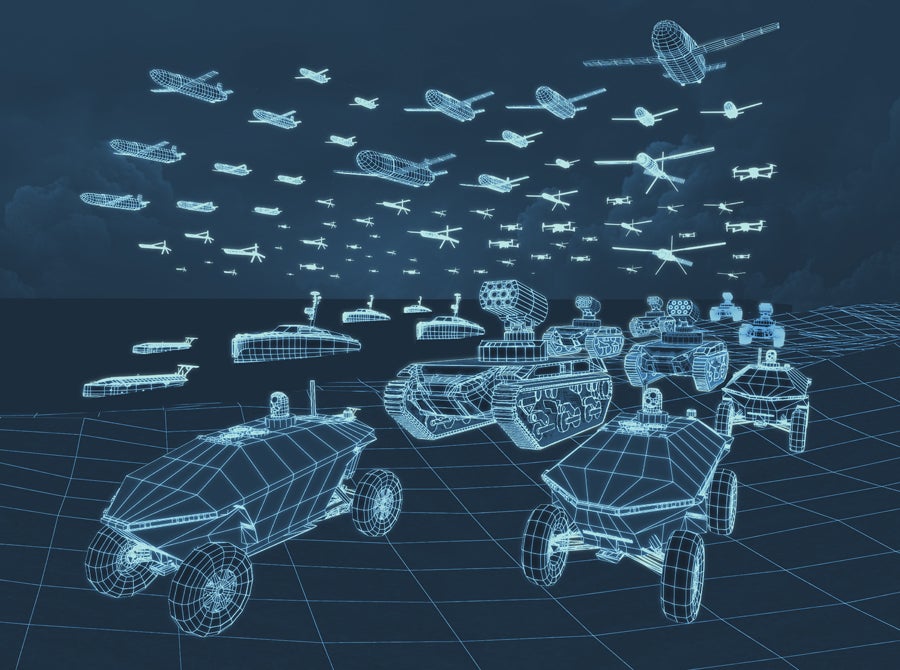

L3Harris introduced AMORPHOUS, an open-architecture AI platform that lets U.S. and allied forces command thousands of heterogeneous unmanned assets across multiple domains through decentralized decision-making. Designed for complex military missions, the swarm-control software has undergone prototype testing with the U.S. Army and the Defense Innovation Unit. No incidents have been reported, but the system raises potential hazard concerns. [AI generated]