The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

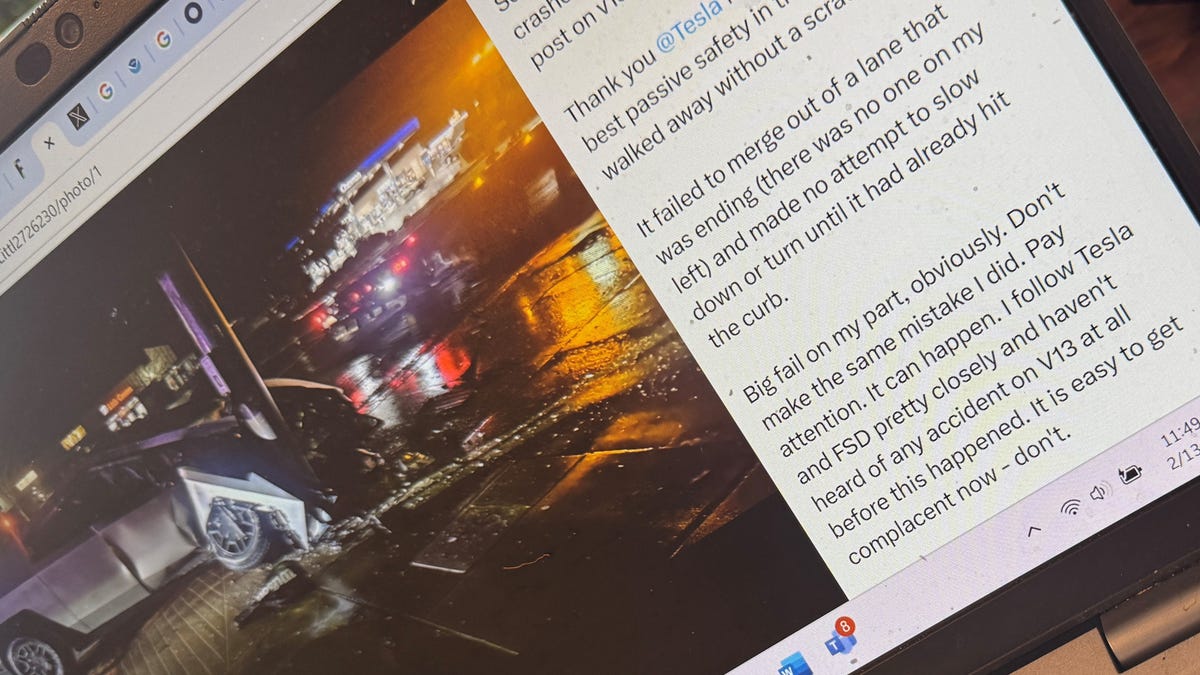

Florida-based software developer Jonathan Challinger crashed his Tesla Cybertruck when its Full Self-Driving system (v13, specifically v13.2.4) failed to merge or turn, causing the vehicle to hit a curb and then a pole. He shared the incident on social media and urged other drivers to remain vigilant when using advanced driving features.[AI generated]