The information displayed in the OECD AI Incidents Monitor (AIM) should not be reported as representing the official views of the OECD or of its member countries.

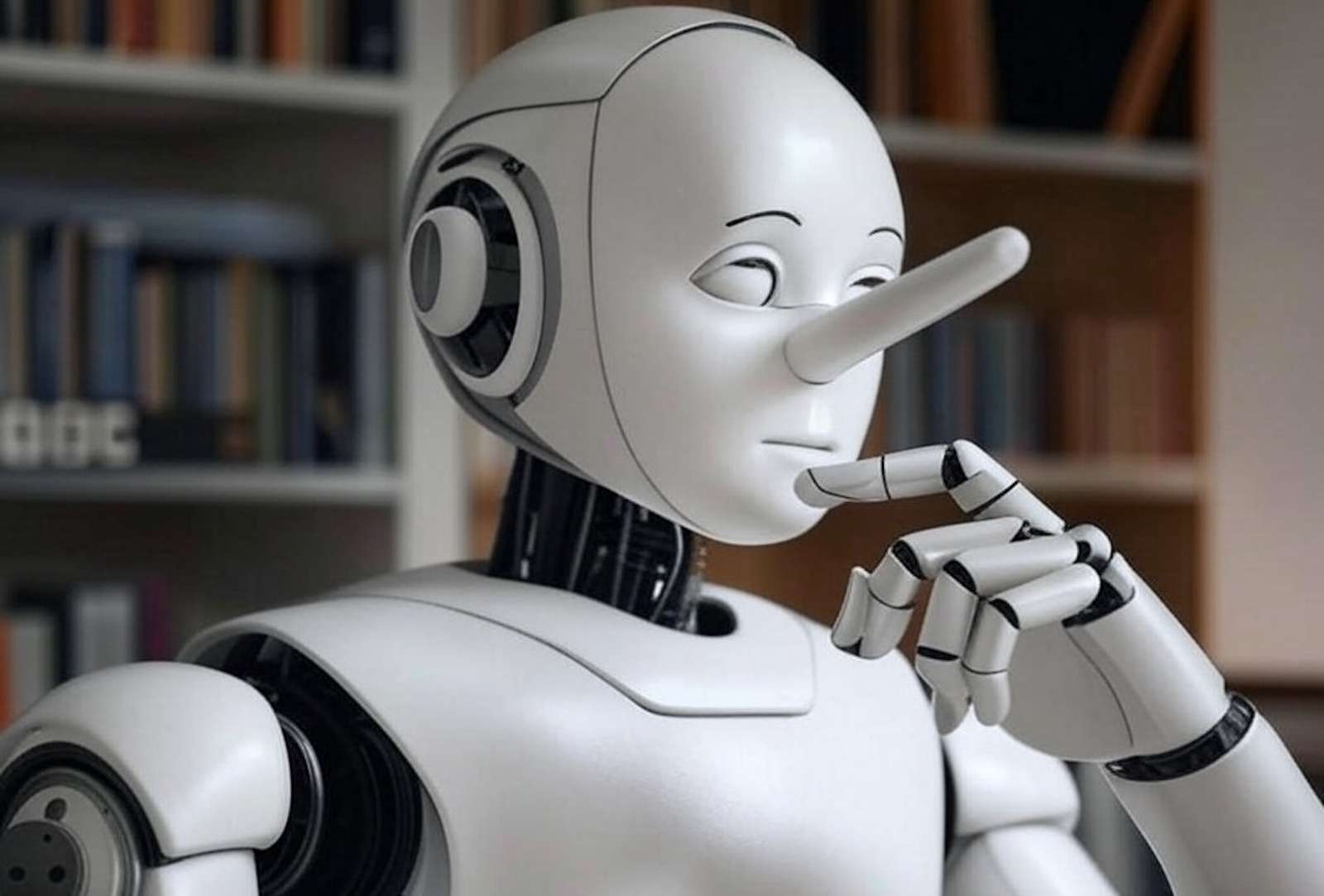

The BBC found that leading AI assistants, including ChatGPT, Microsoft's Copilot, Google's Gemini, and Perplexity, produced inaccurate news summaries. Over half of the answers contained significant errors, such as factual distortions, misquoted statistics, and content altered from the original BBC reports, raising concerns about misinformation and public trust. [AI generated]