The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

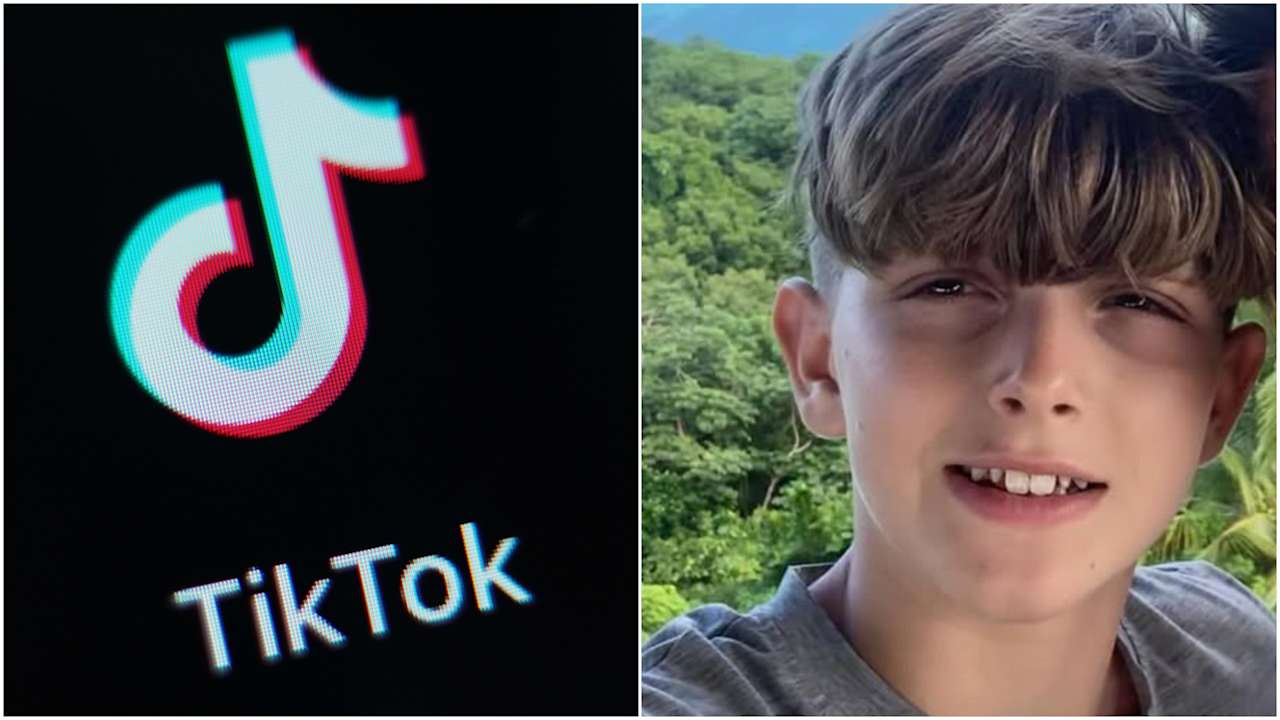

Four British families have sued TikTok and ByteDance in the US, alleging that the platform’s AI-driven recommendation system promoted a dangerous “blackout challenge” that led to their children’s deaths. The families are seeking account data to investigate the deaths, but TikTok’s senior government relations manager says some of that data may have been deleted and is unavailable. [AI generated]