The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

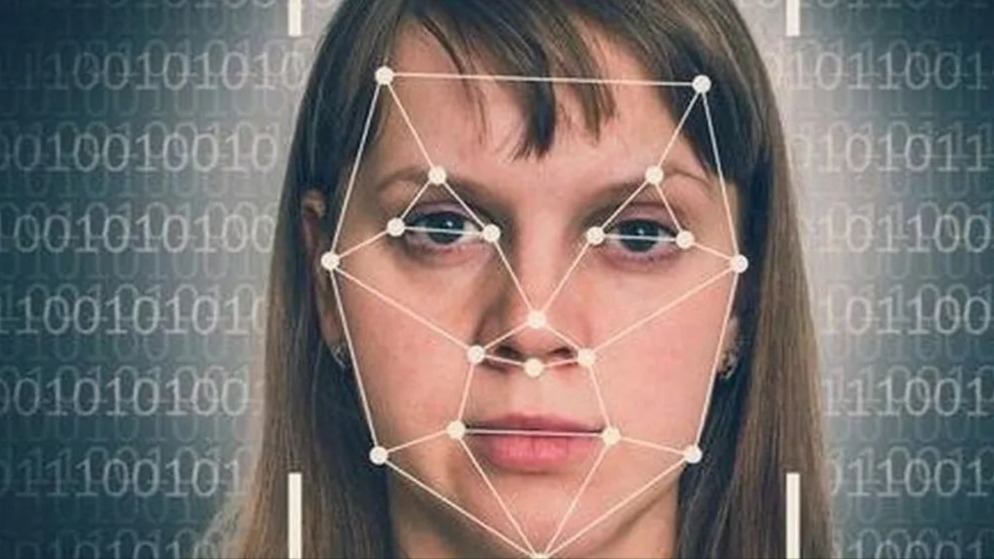

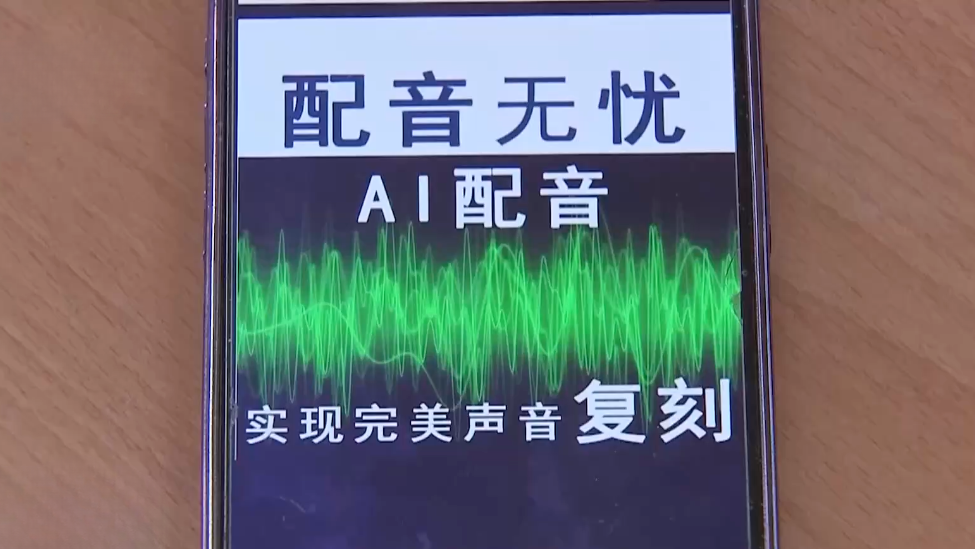

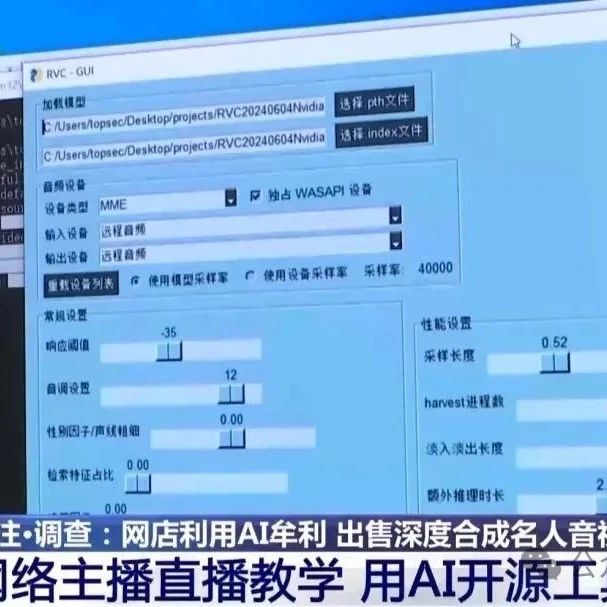

Multiple investigations have found AI deep synthesis technology being misused to fabricate celebrity audio and video content, impersonating figures such as Lei Jun, Liu Dehua, and Dr. Zhang Wenhong for fraudulent marketing and profit. Legal experts stress that unauthorized deepfakes violate intellectual property and personal rights, and they call for stricter enforcement. [AI generated]