The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

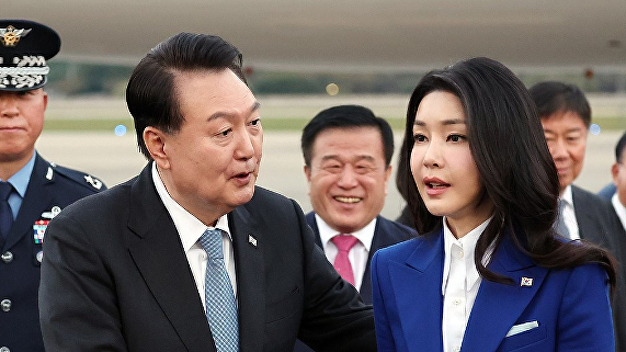

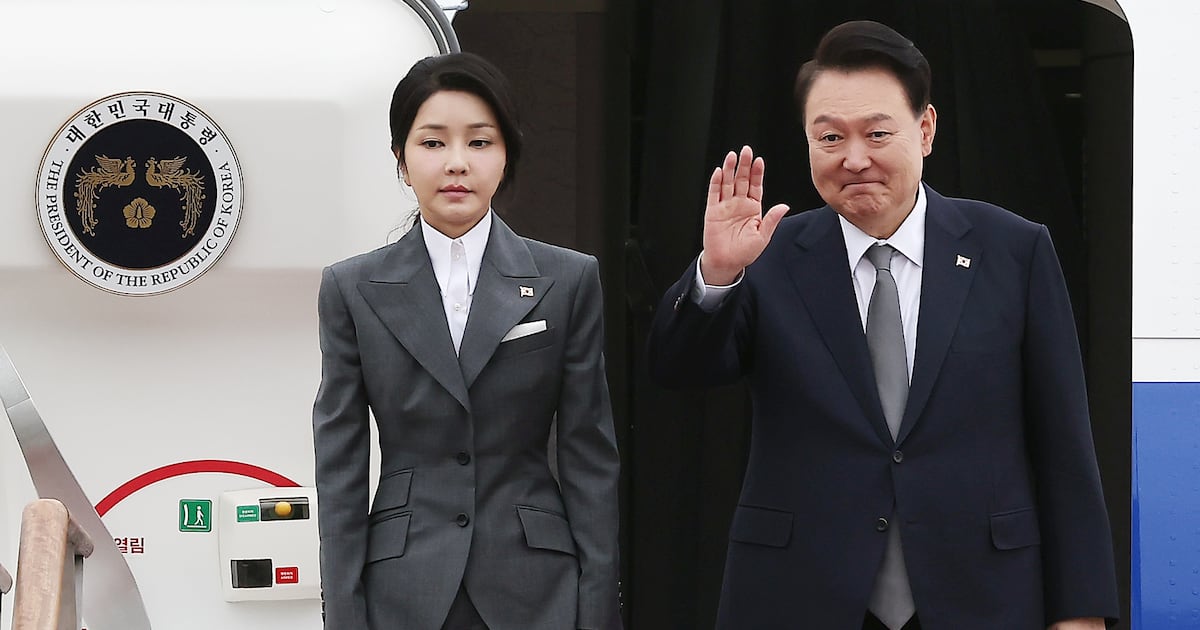

South Korea's regulatory agencies are acting swiftly against deepfake videos defaming President Yoon Suk-yeol and First Lady Kim Gun-hee. The manipulated videos, shown at pro-impeachment protests, have sparked legal investigations and led YouTube to remove related content on grounds of serious defamation and human rights violations. [AI generated]