The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

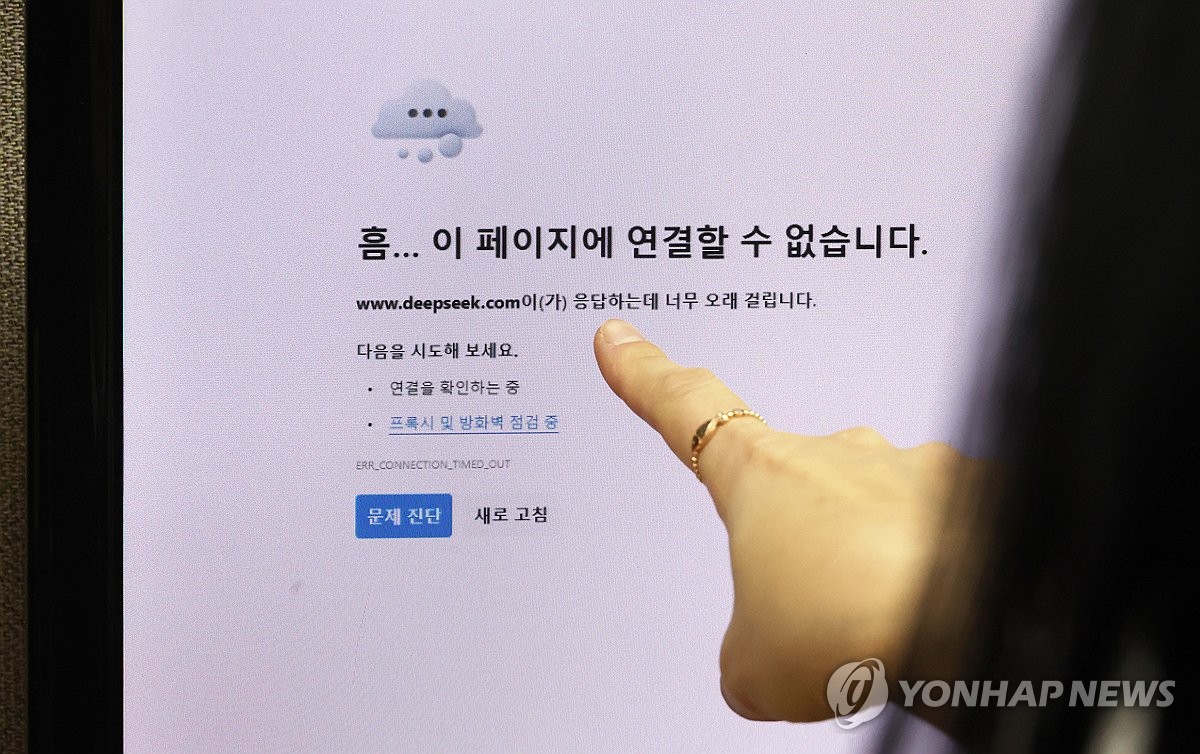

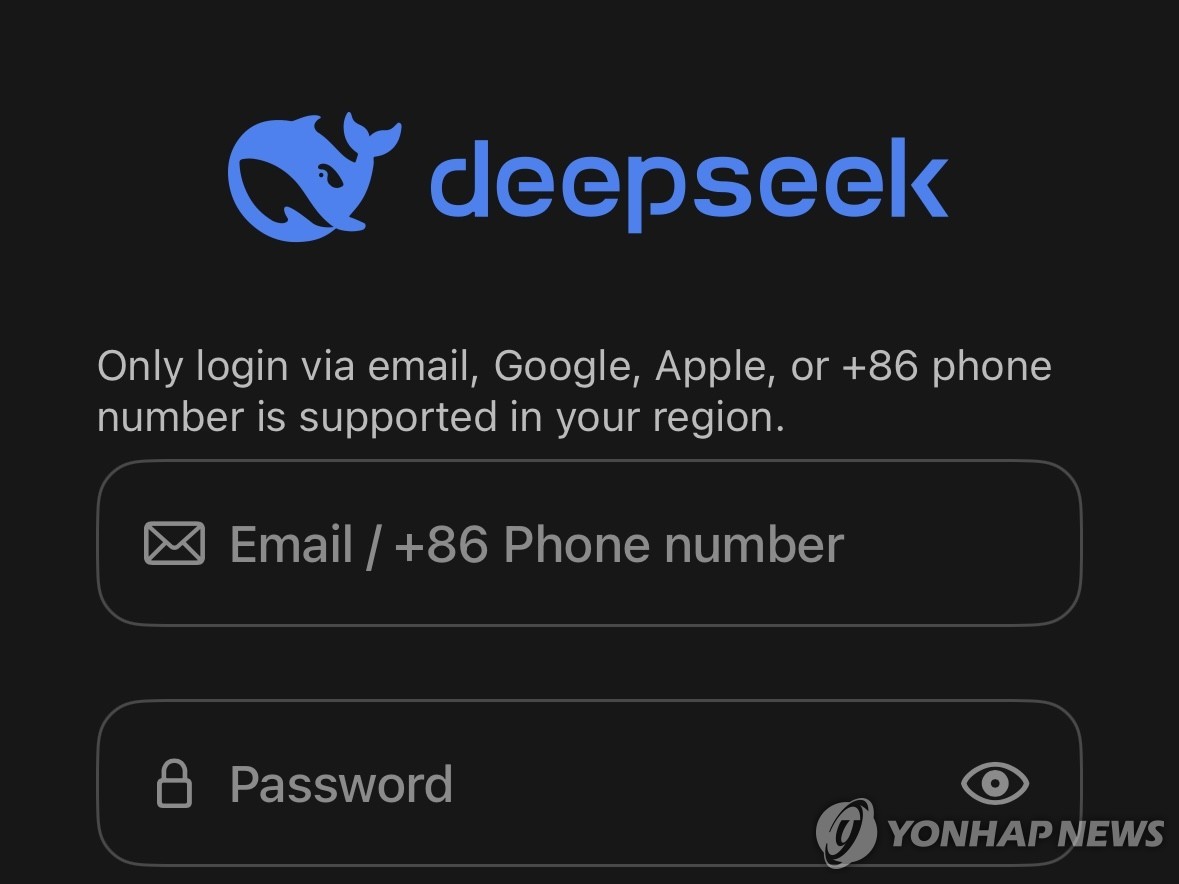

South Korea’s Personal Information Protection Commission has halted new downloads of the Chinese AI app DeepSeek, following bans on internal use by several ministries due to risky data-collection practices. The government requires the app to comply with local privacy laws and make improvements before downloads resume. Existing users retain access while DeepSeek appoints a local representative to remedy the shortcomings. [AI generated]
