The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

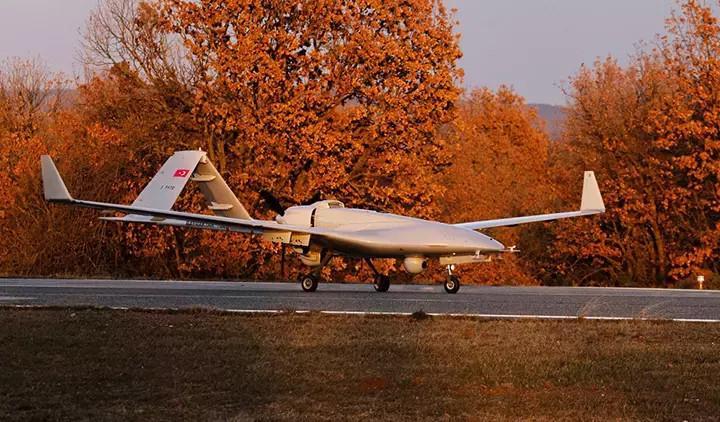

Baykar has begun test flights of its new Bayraktar TB2T-AI armed drone, which integrates a turbo engine and advanced AI for autonomous navigation and target engagement. During testing, the UAV climbed to a record altitude of 30,318 feet in under 30 minutes and reached a speed of 160 knots (about 300 km/h), offering enhanced high-altitude endurance and combat potential. [AI generated]