The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

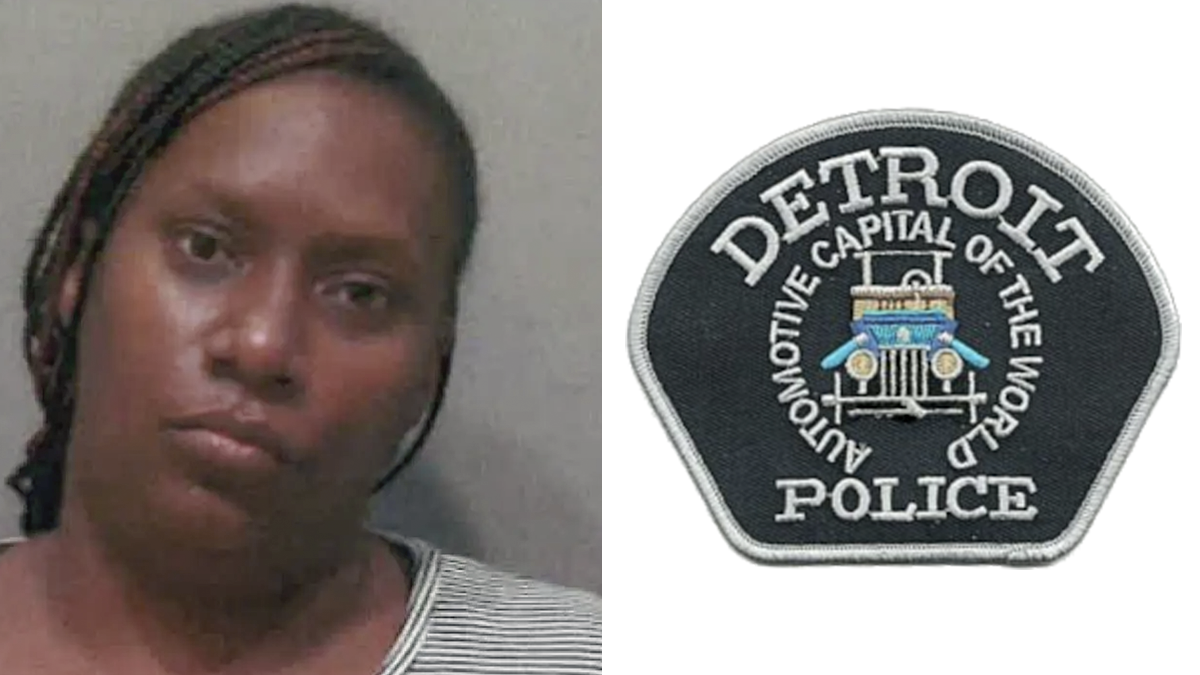

Detroit police wrongly arrested LaDonna Crutchfield after a facial recognition error misidentified her as a shooting suspect. Although police dispute that AI was involved in one instance, a lawsuit argues that reliance on the technology violated her rights and caused her significant emotional distress. [AI generated]