The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

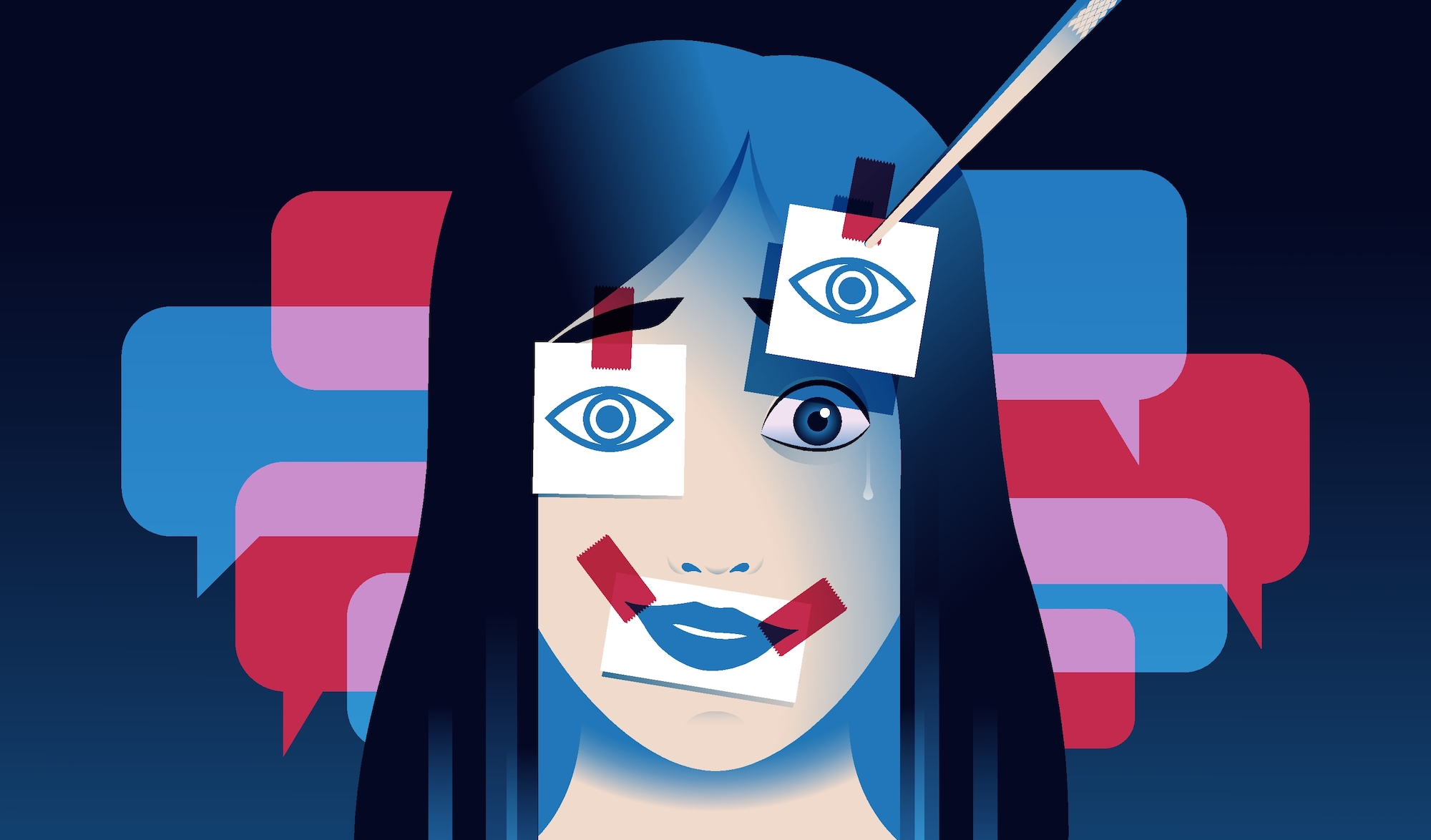

Malaysian cybersecurity expert Prof Dr Selvakumar Manickam warns that deepfake AI videos featuring political figures and sensitive issues pose significant national security risks because they can simulate attacks or false-flag operations. The rise in deepfake manipulations, which also target celebrities, threatens to destabilize nations and damage individual reputations across social media. [AI generated]