The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

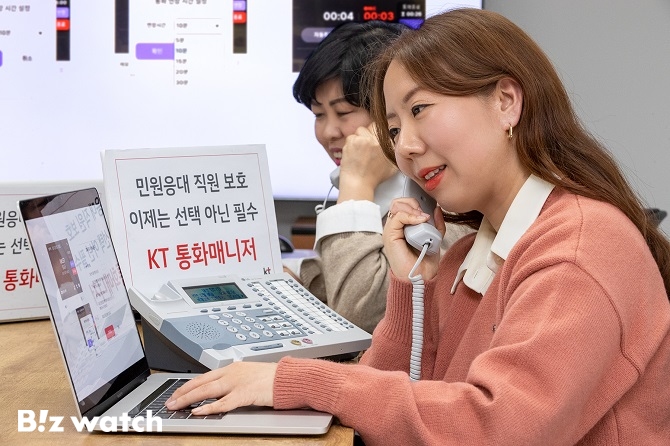

KT has enhanced its AI-powered call manager service to protect public officials and customer service staff. The service automatically issues warnings and then terminates calls when it detects abusive language or an excessively prolonged conversation. It also records calls and converts them to text, improving safety for public institutions and businesses.[AI generated]