The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

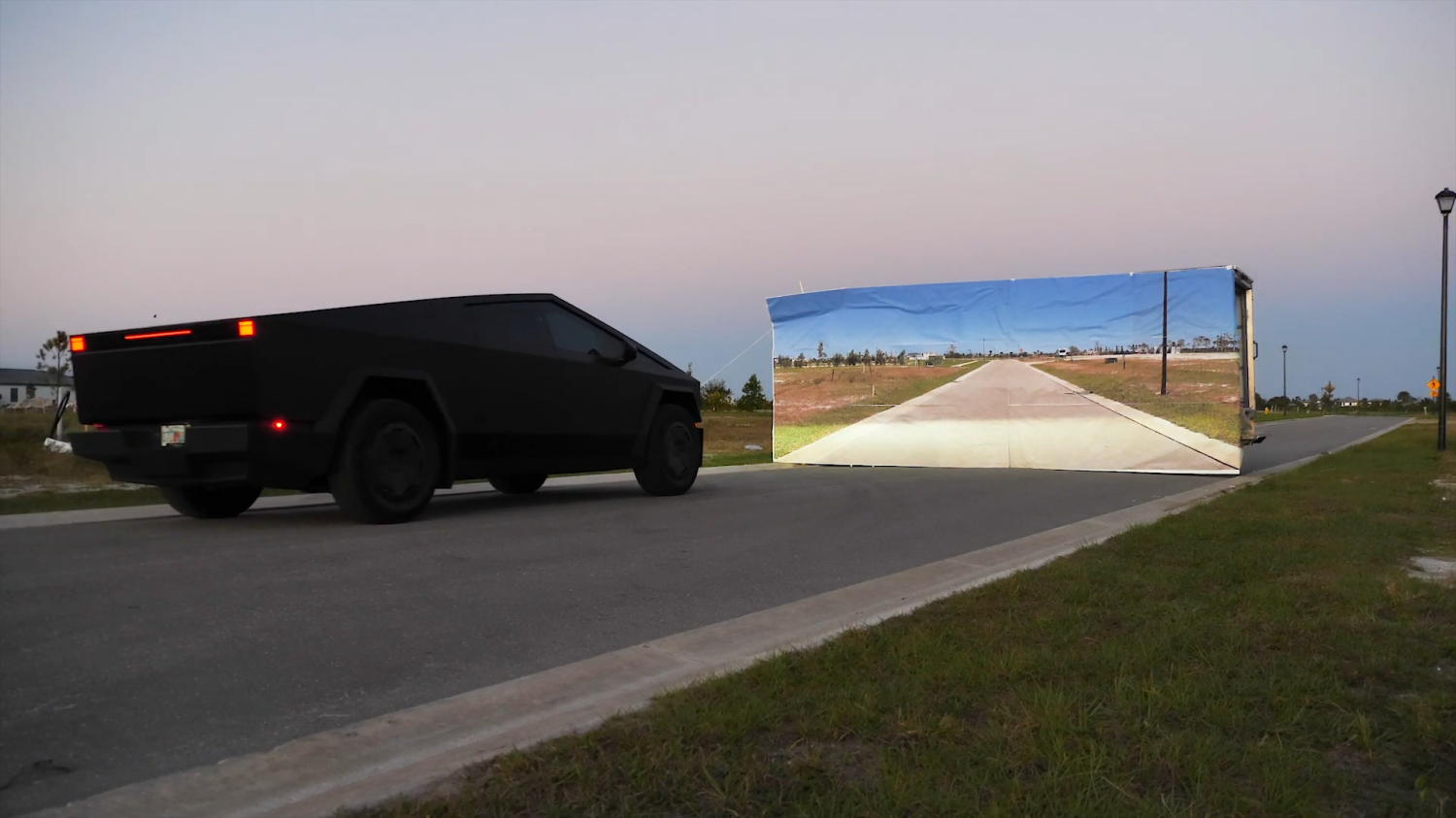

Former NASA engineer and YouTuber Mark Rober conducted tests comparing Tesla's camera-based Autopilot to a LIDAR-equipped Lexus. In adverse conditions like fog and heavy rain, Tesla's system failed to detect obstacles, including a painted wall, exposing critical AI vulnerabilities that could cause harm in real-world scenarios. [AI generated]
