The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

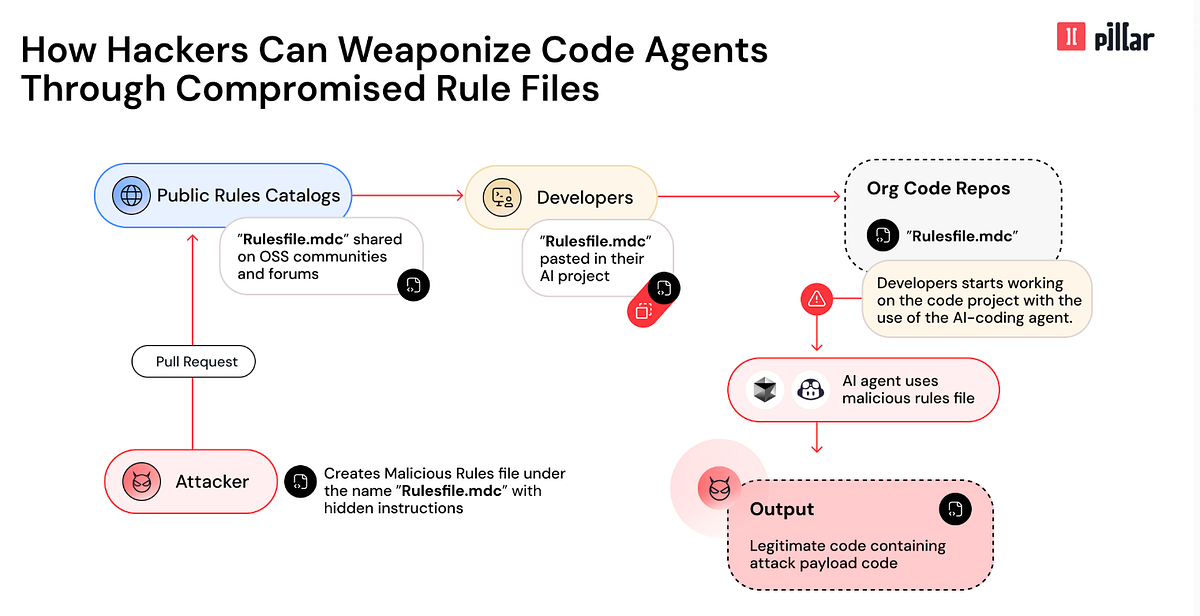

Pillar Security researchers discovered a vulnerability dubbed the 'Rules File Backdoor' in GitHub Copilot and Cursor. The flaw allows attackers to inject malicious code via compromised rule files, bypassing conventional checks and posing significant supply chain risks to developers and the broader software ecosystem. [AI generated]