The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

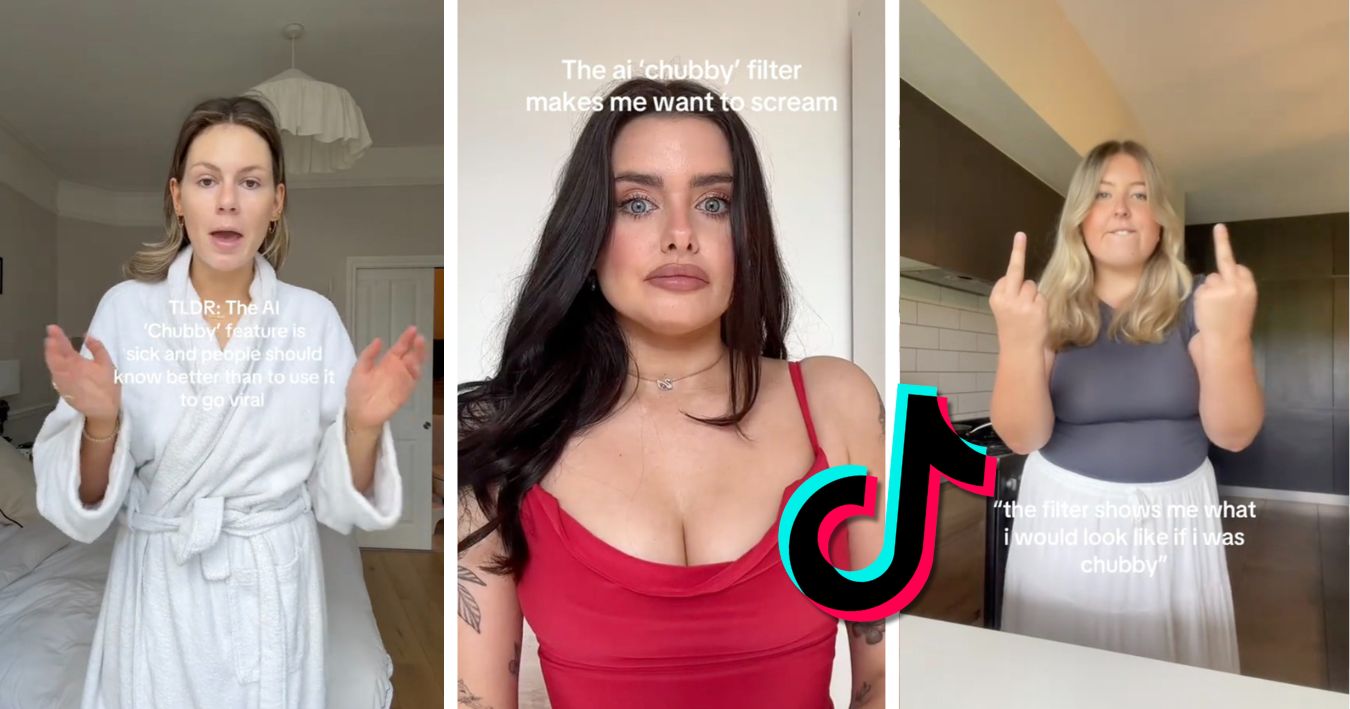

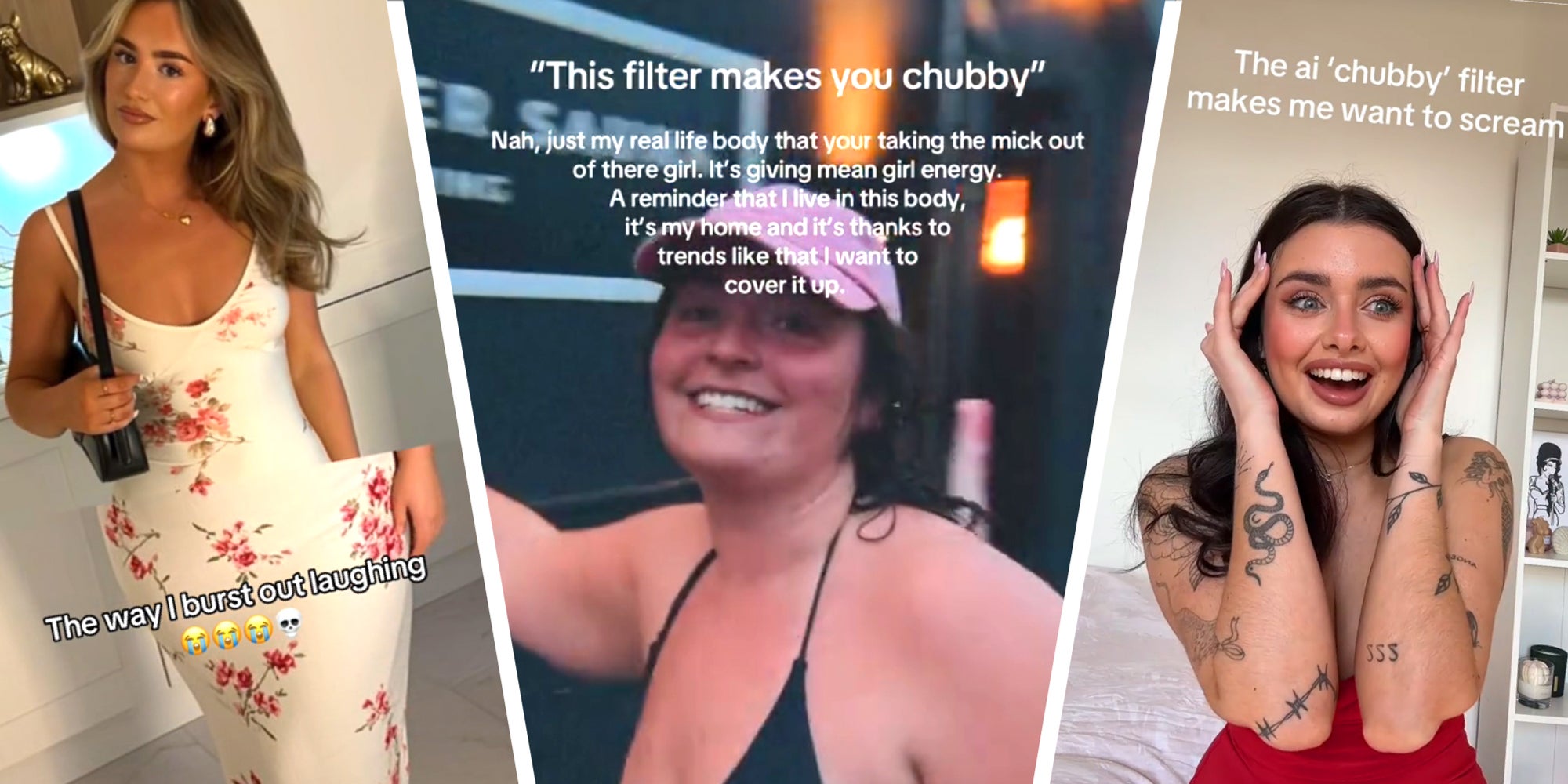

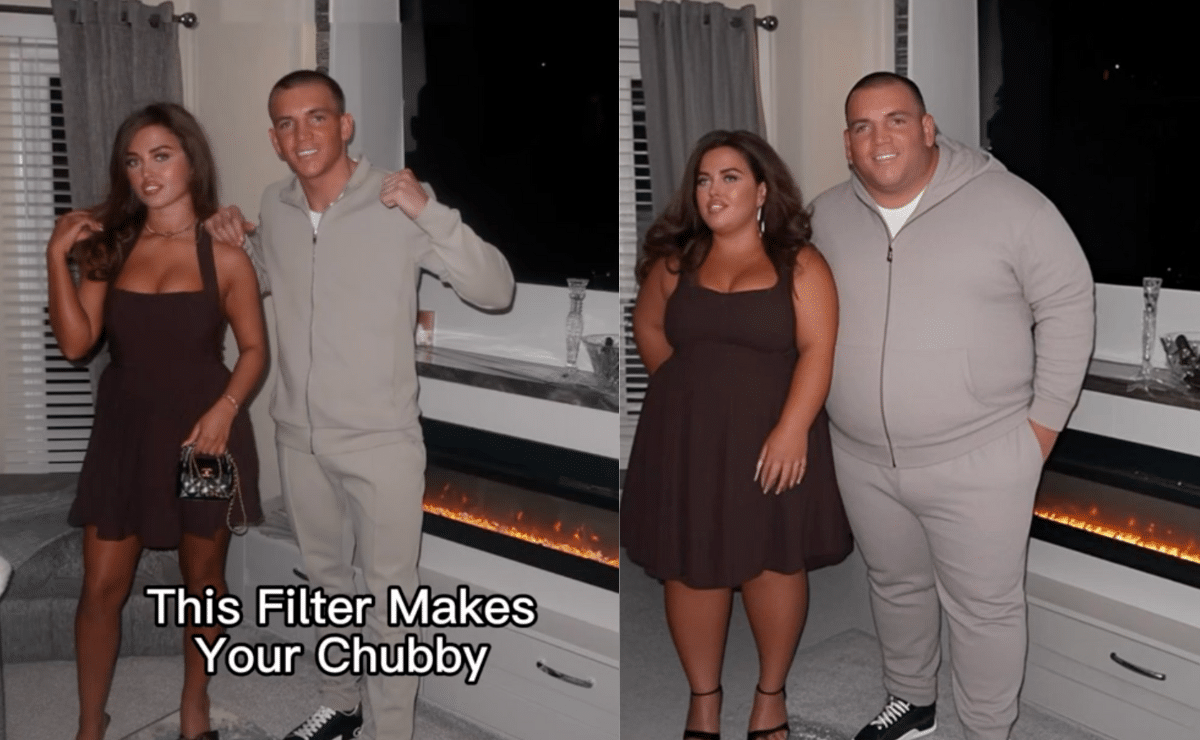

TikTok's AI-driven 'chubby filter', which makes users appear heavier, has sparked controversy. While some users share the altered images for fun, critics, including influencer Sadie, condemn the filter as perpetuating body shaming and toxic diet culture, and as potentially contributing to eating disorders. TikTok has yet to comment on the issue. [AI generated]
