The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

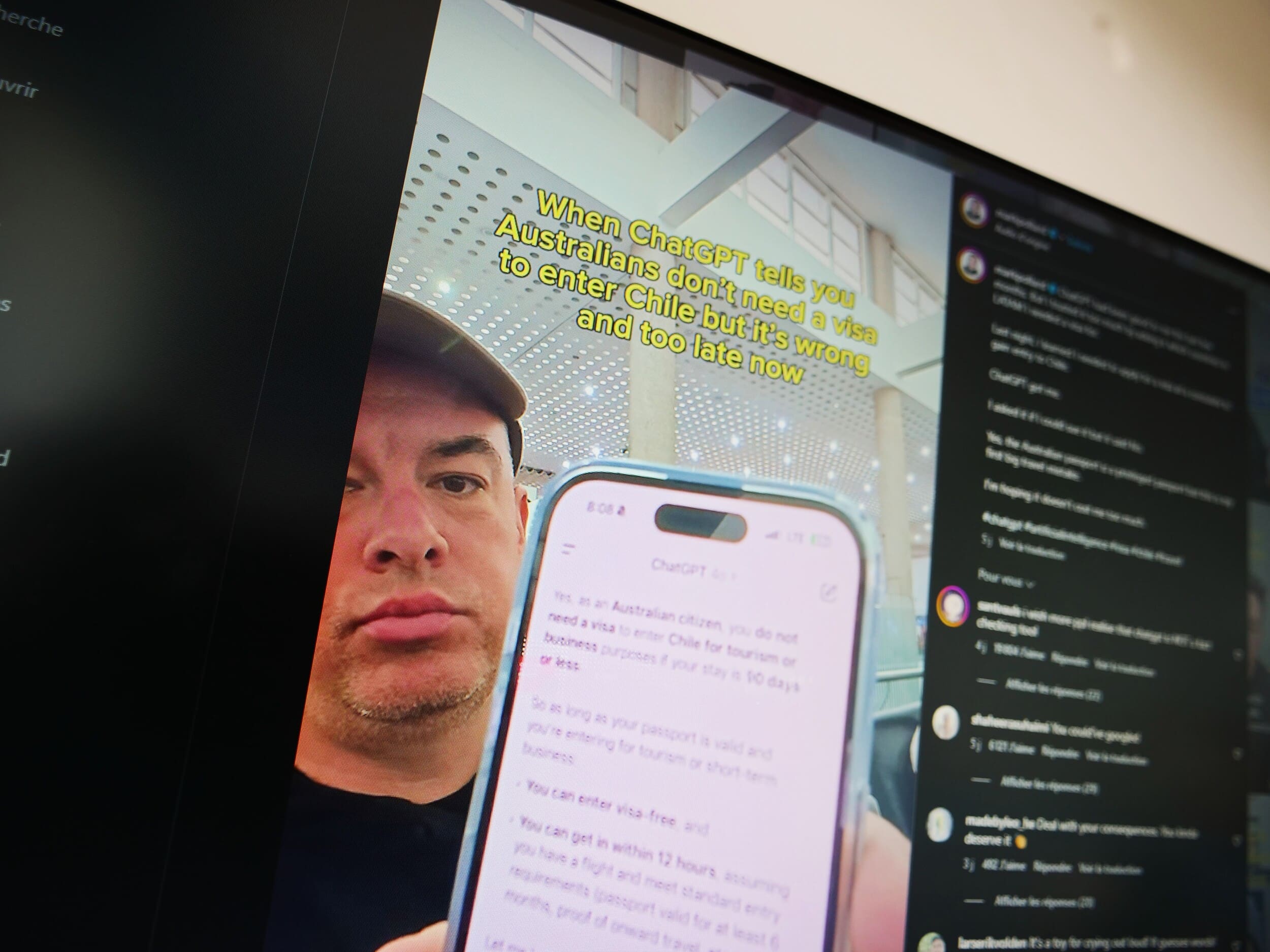

Mark Pollard, an Australian marketing strategist, was stranded at a Chilean airport and missed his conference after ChatGPT wrongly informed him he didn’t need a visa. This mishap highlights generative AI’s fallibility when used for critical travel advice, showing how inaccurate AI outputs can directly harm users’ plans. [AI generated]