The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

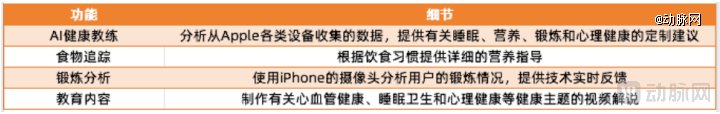

Apple is developing an AI health coach, code-named Project Mulberry, for the Health app in iOS 19.4. Drawing on Apple Watch and iPhone data, the AI ‘doctor’ will offer personalized medical advice and is being trained with input from internal and external experts. The rollout, planned for next spring, will include expert-led educational videos to help guide users’ health decisions. [AI generated]