The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

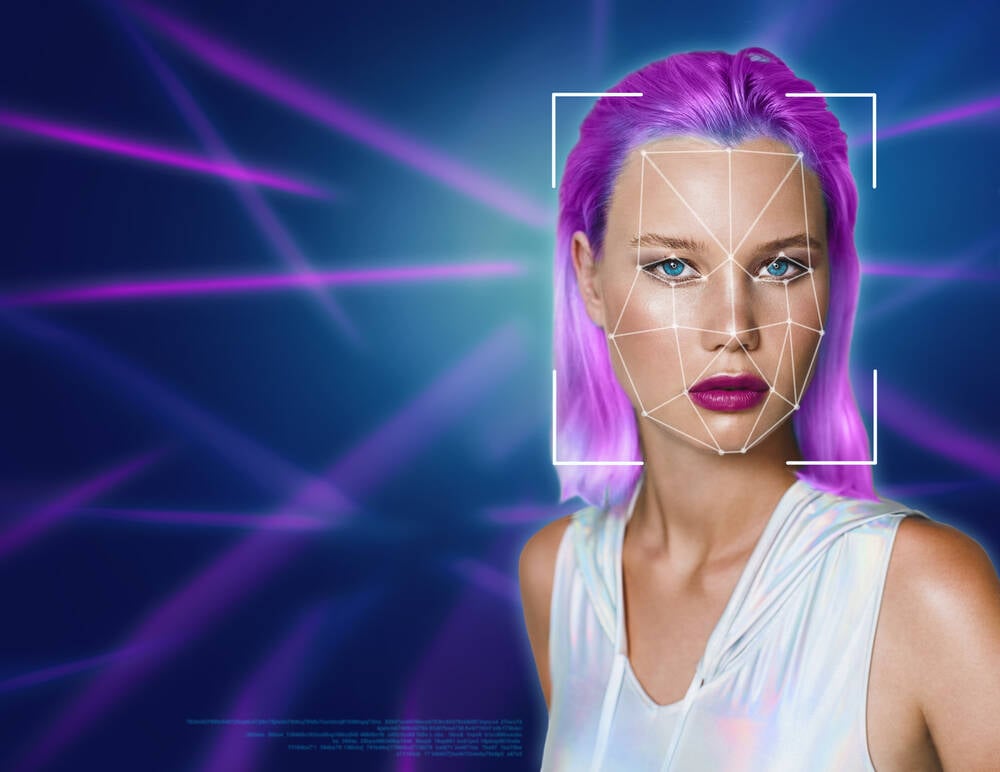

Security researcher Jeremiah Fowler discovered an unsecured database belonging to South Korea’s GenNomis that contained over 90,000 AI-generated explicit images, including deepfakes of celebrities and depictions of minors. The incident highlights severe privacy breaches and the potential weaponization of AI to generate nonconsensual, harmful content. [AI generated]