The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

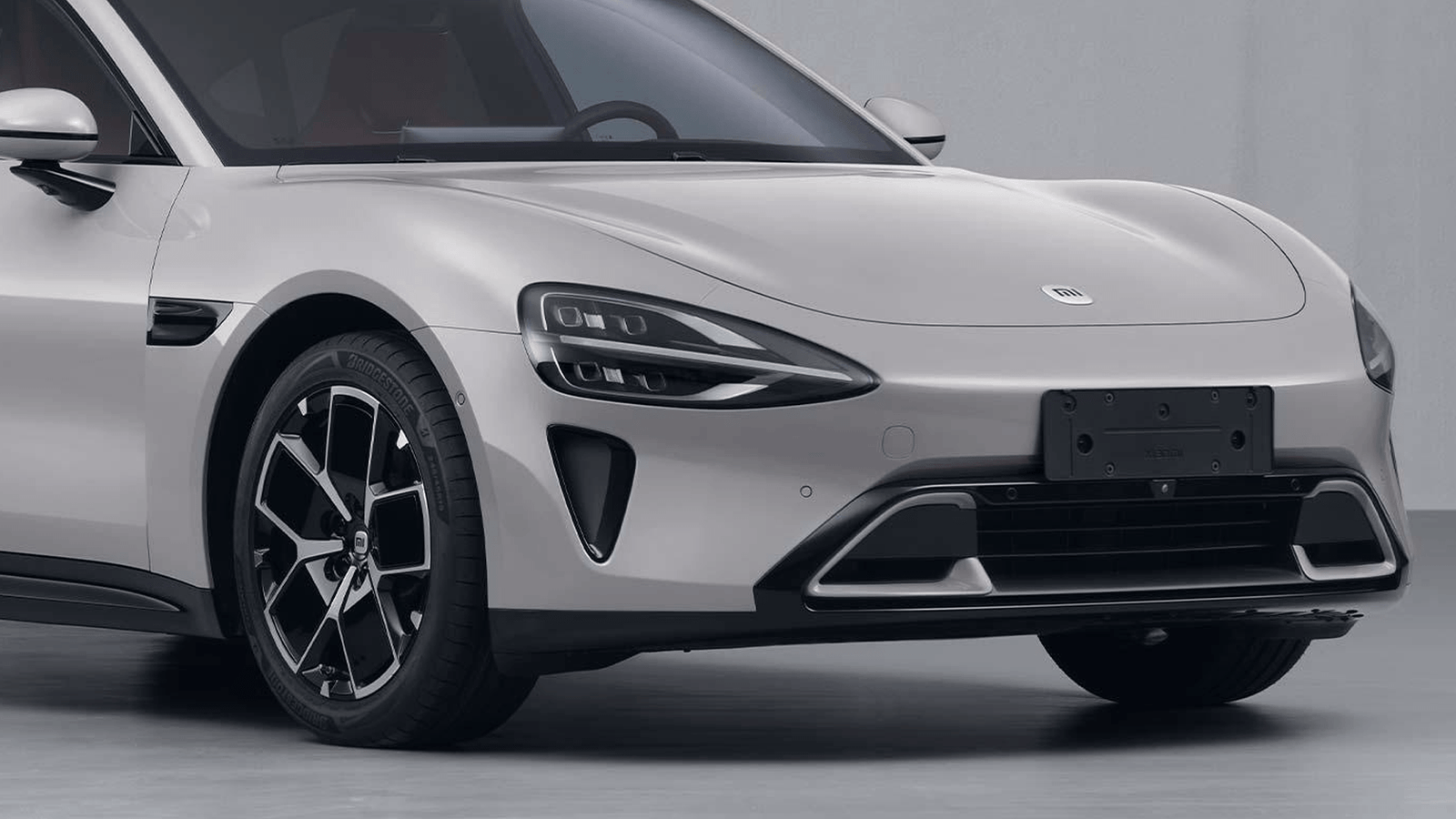

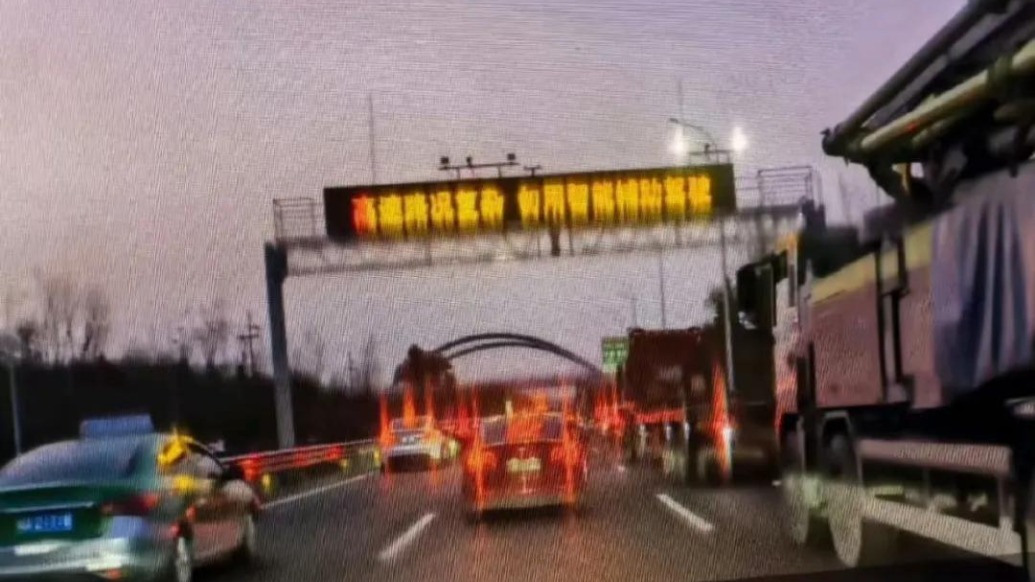

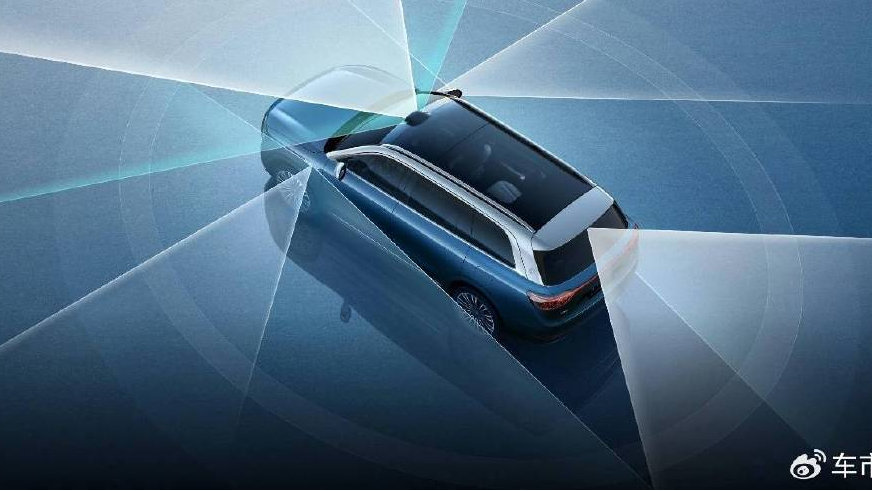

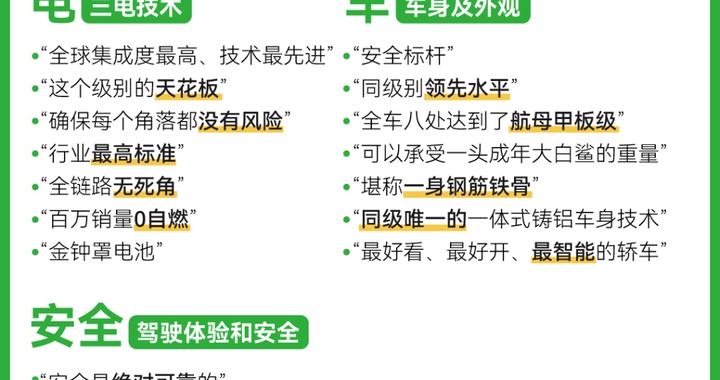

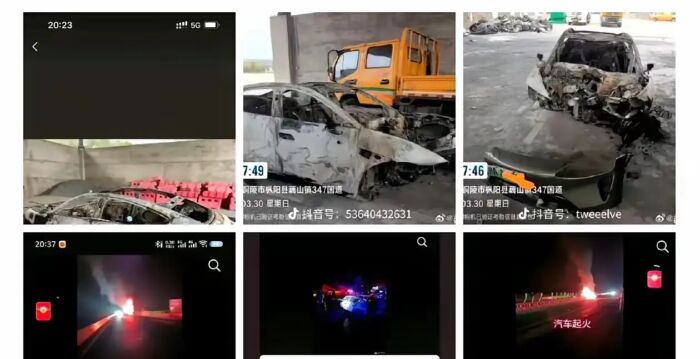

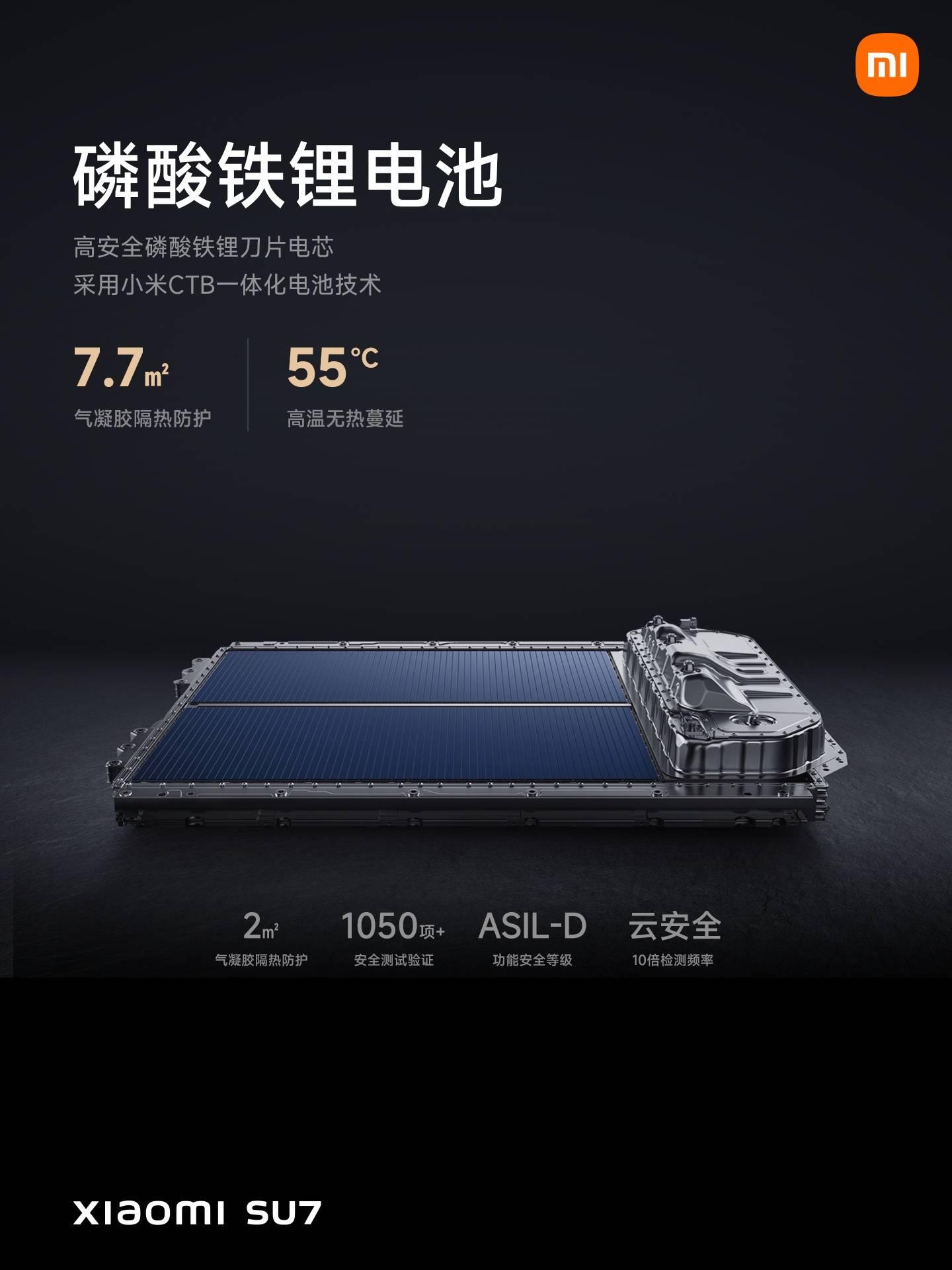

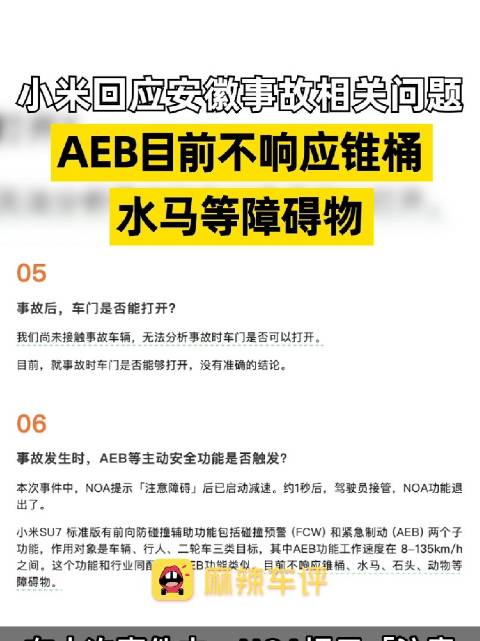

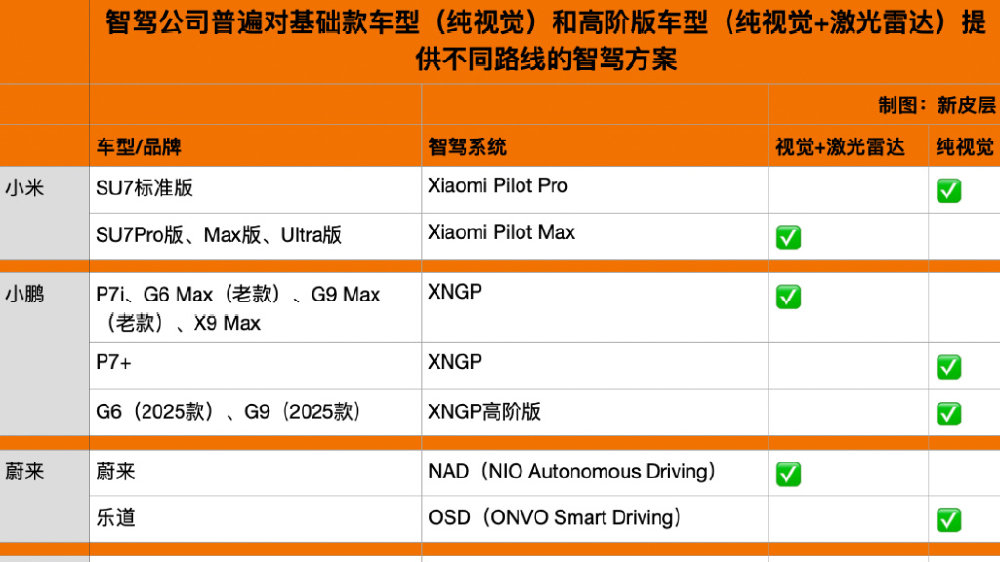

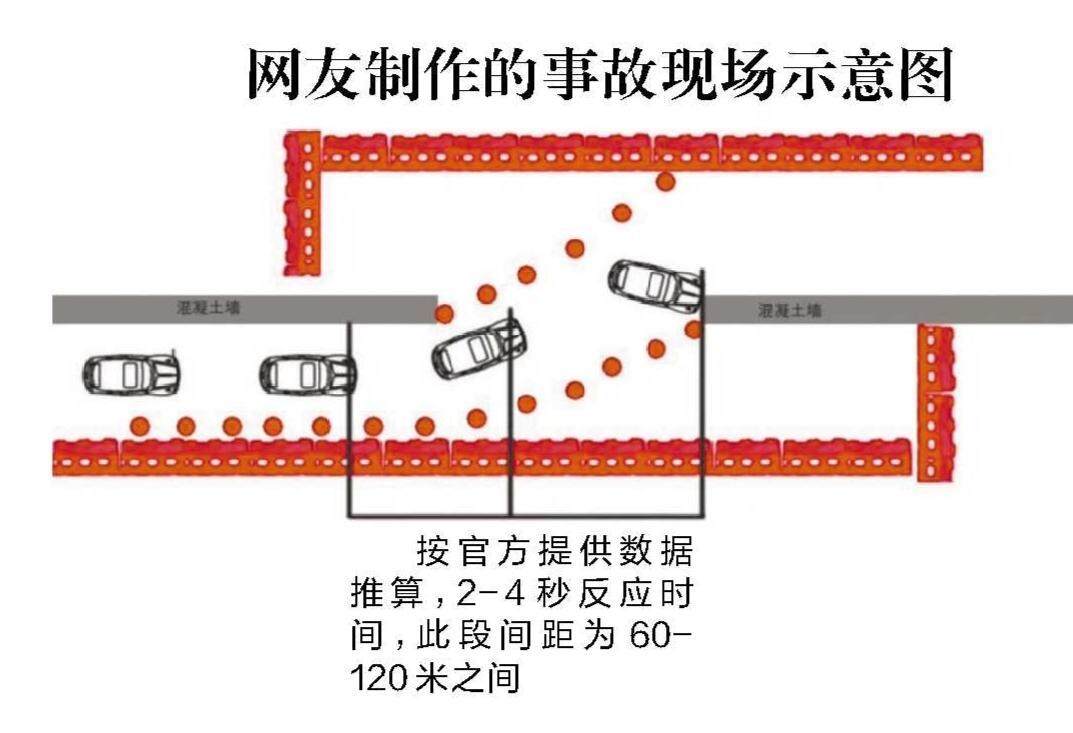

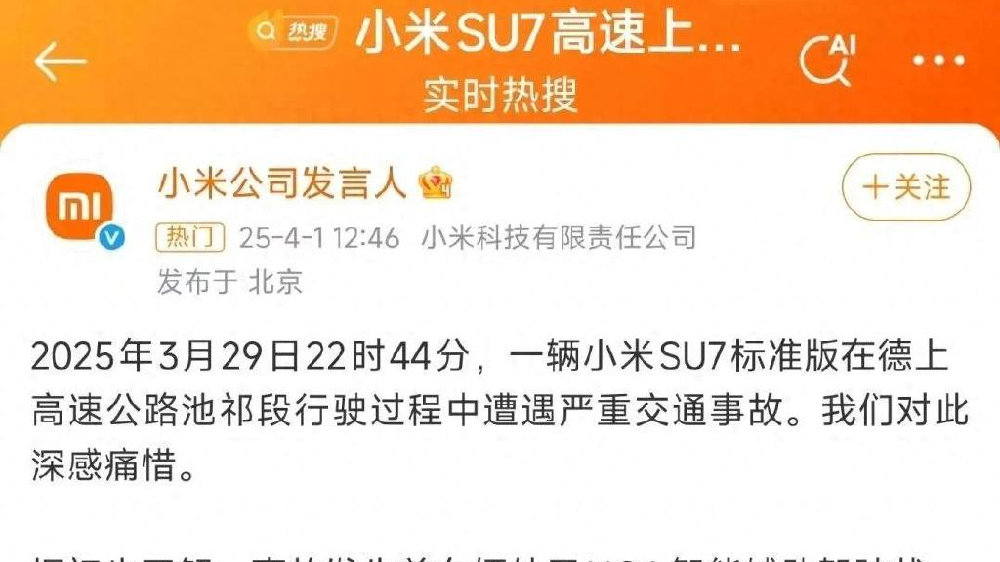

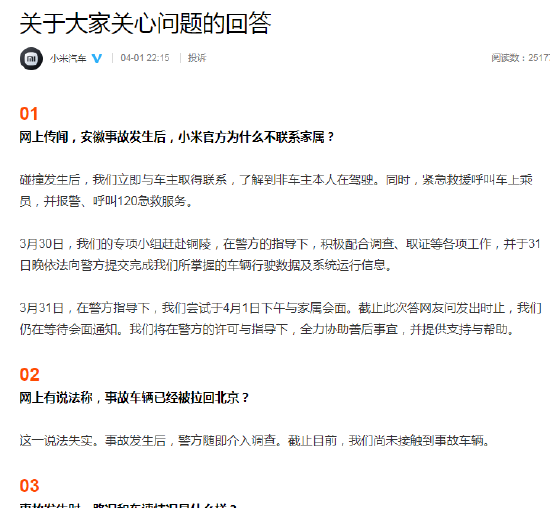

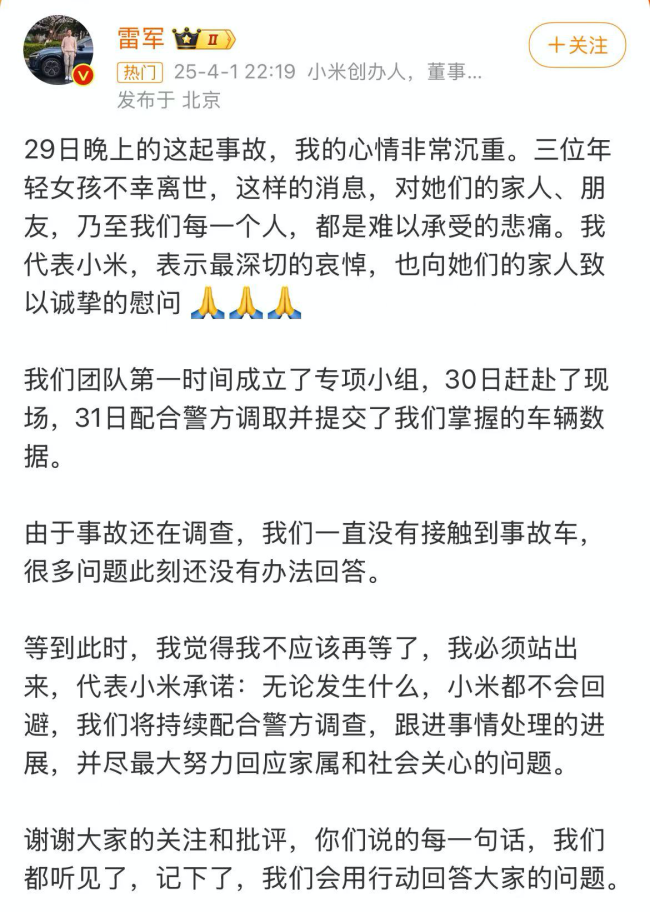

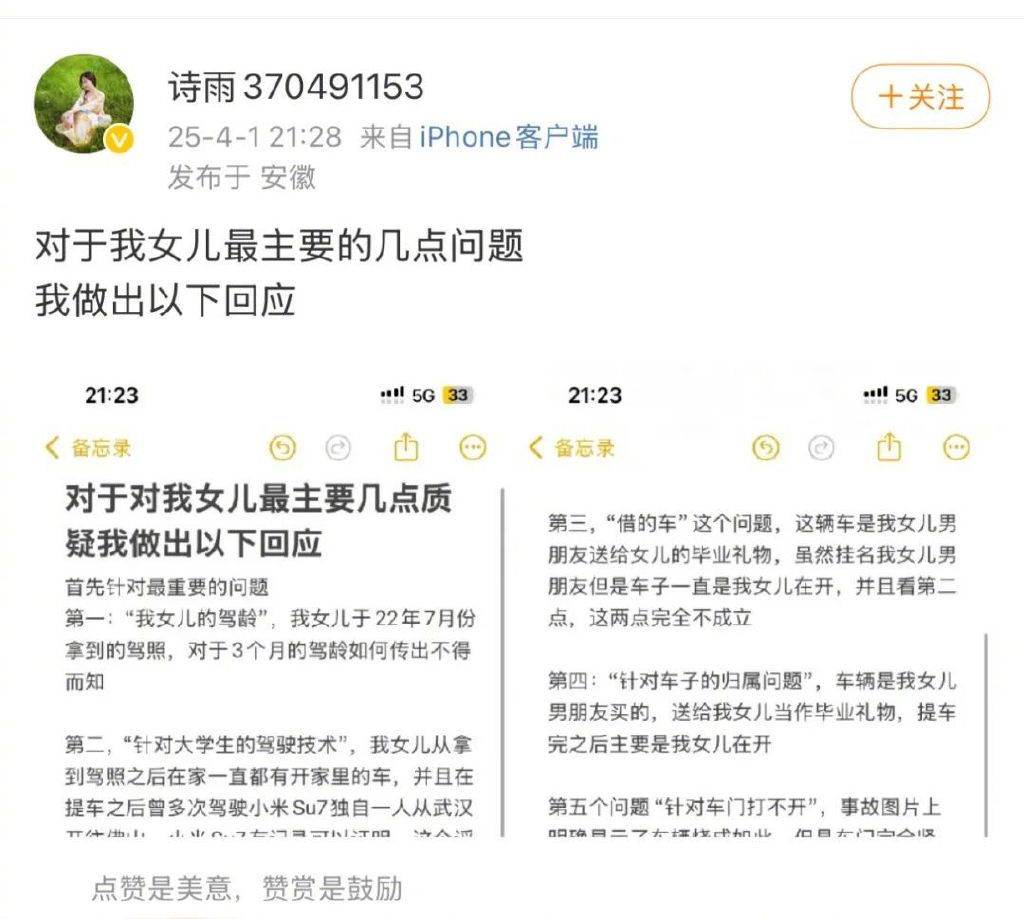

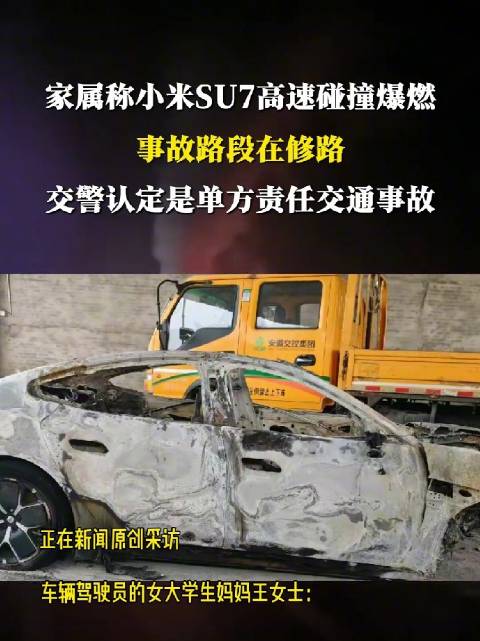

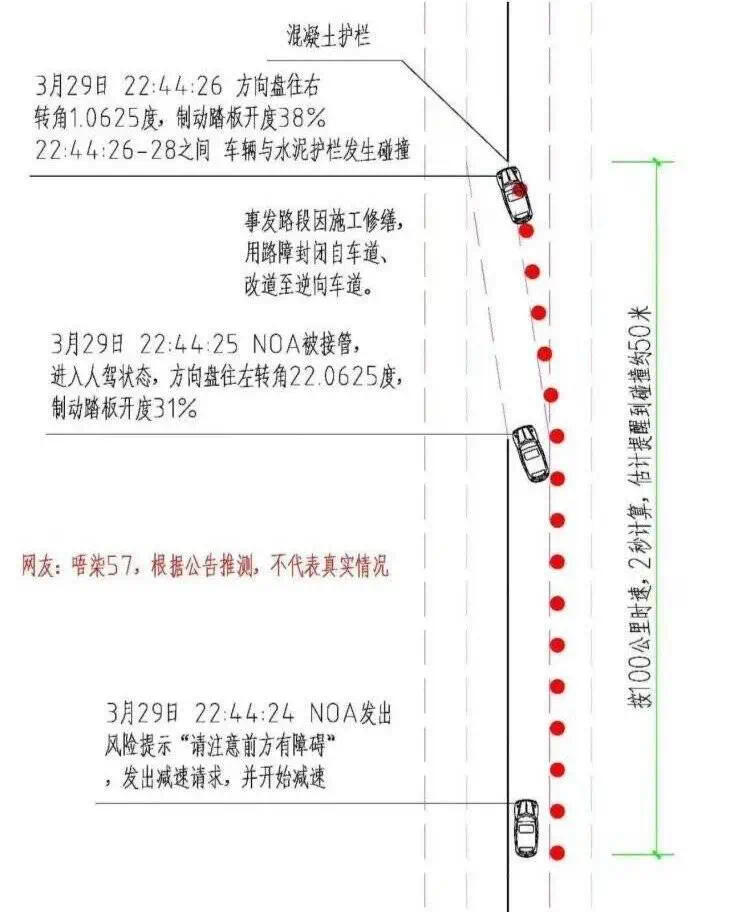

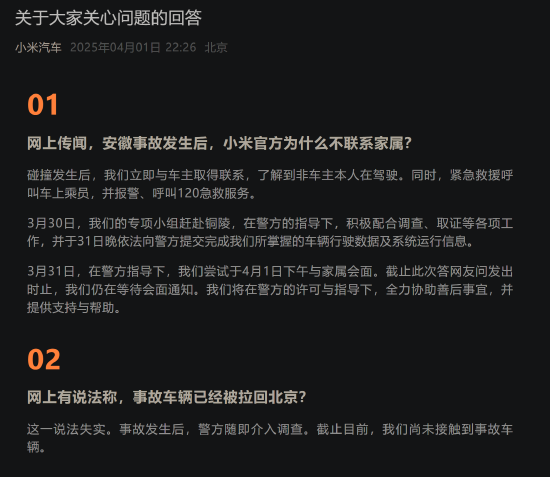

A Xiaomi SU7 electric vehicle crashed on an expressway in Anhui province on March 29 while its AI-powered driving-assistance (autopilot) system was active. A malfunction led to a collision that killed three people. Xiaomi is cooperating with local authorities to investigate the incident.[AI generated]