The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

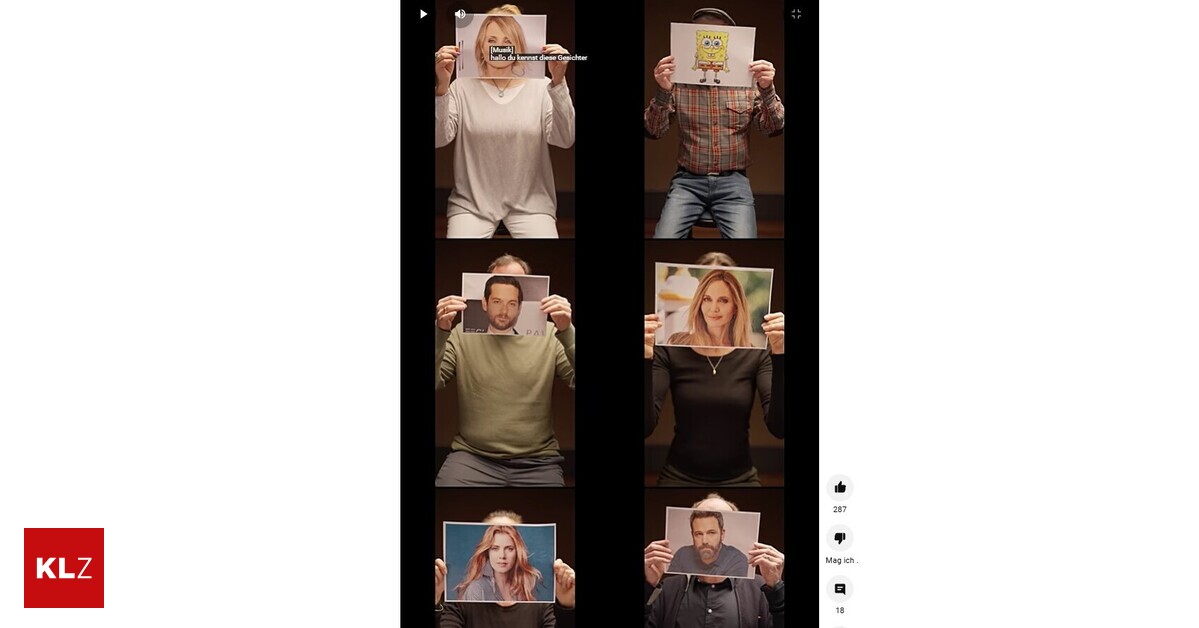

Prominent German voice actors who dub US stars issued a public warning in a video about the potential replacement of human dubbing by AI-generated voices. They expressed deep concern that advancing AI could eliminate authentic voice work, jeopardizing their industry and their future job security. [AI generated]