The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

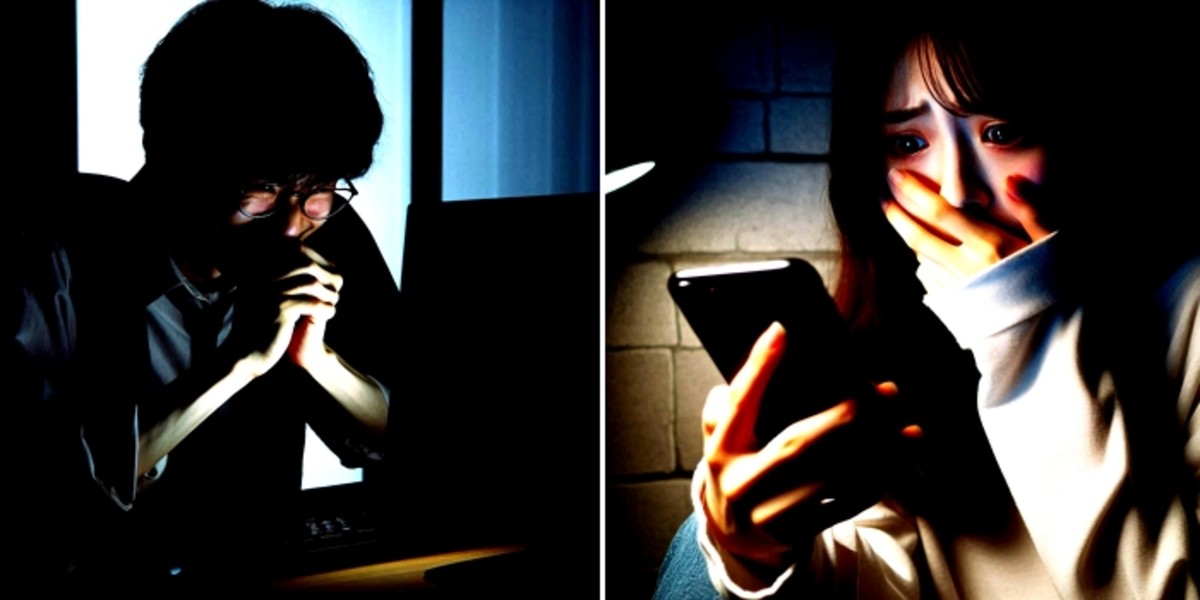

In Incheon, police arrested 15 suspects, including a 24-year-old graduate student, for using AI deepfake technology to superimpose the faces of female university alumni onto explicit nude images. The manipulated images were distributed via Telegram groups as non-consensual sexual content, grossly violating the victims' privacy and human rights. [AI generated]