The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

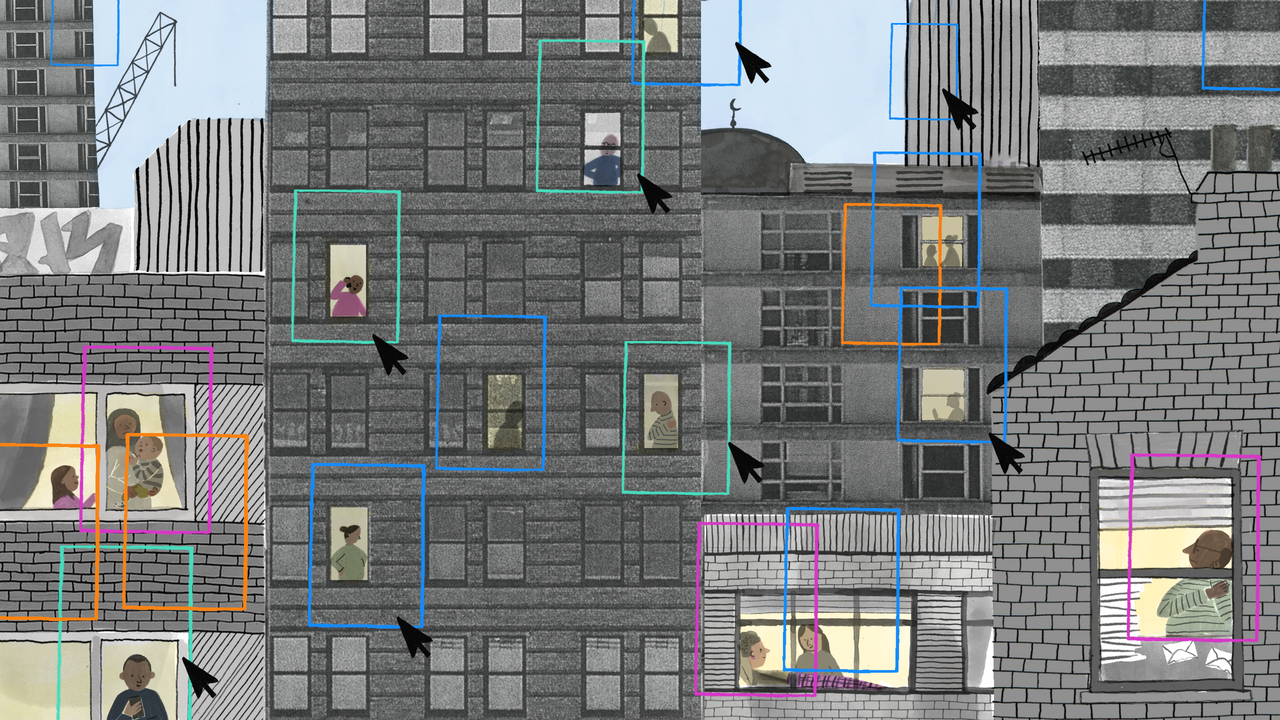

The UK Ministry of Justice, alongside police and government agencies, is developing an AI system to predict potential murderers by analyzing sensitive personal data. Criticized as 'chilling and dystopian' by civil rights groups such as Statewatch, the project raises significant ethical, privacy, and human rights concerns. [AI generated]
