The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

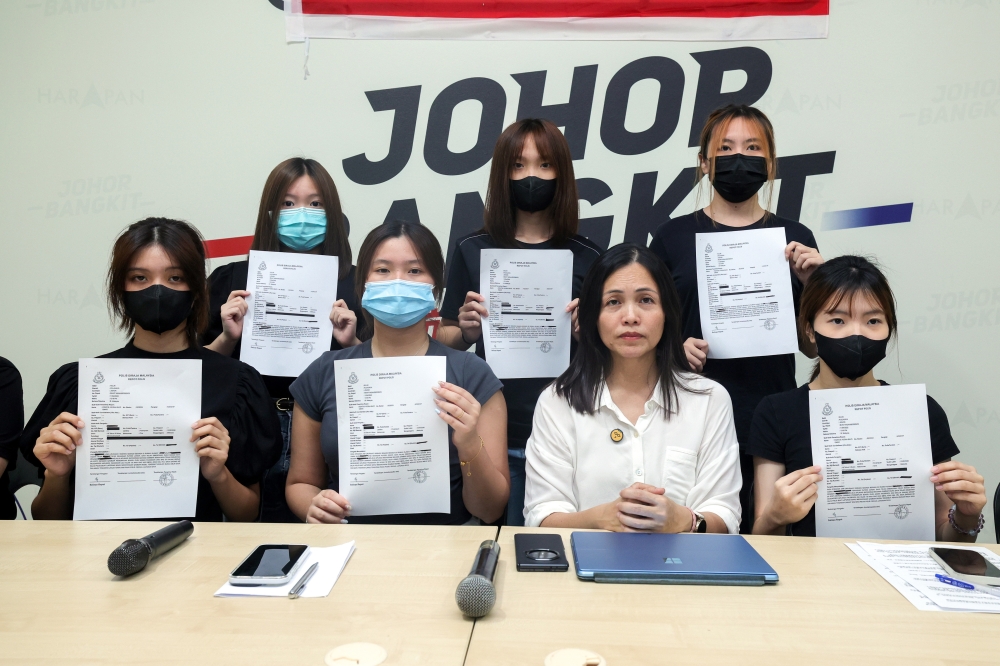

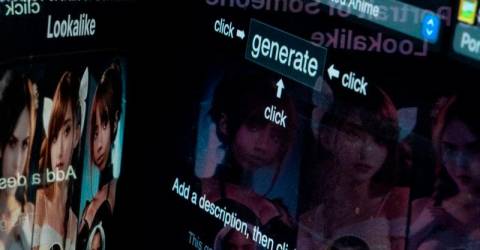

A 16-year-old boy in Johor was arrested on April 8 for allegedly using AI technology to edit photos of schoolmates, obtained from social media, into lewd images and then distributing and selling them. Authorities seized his mobile phone and are urging other victims to come forward as investigations continue. [AI generated]
