The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

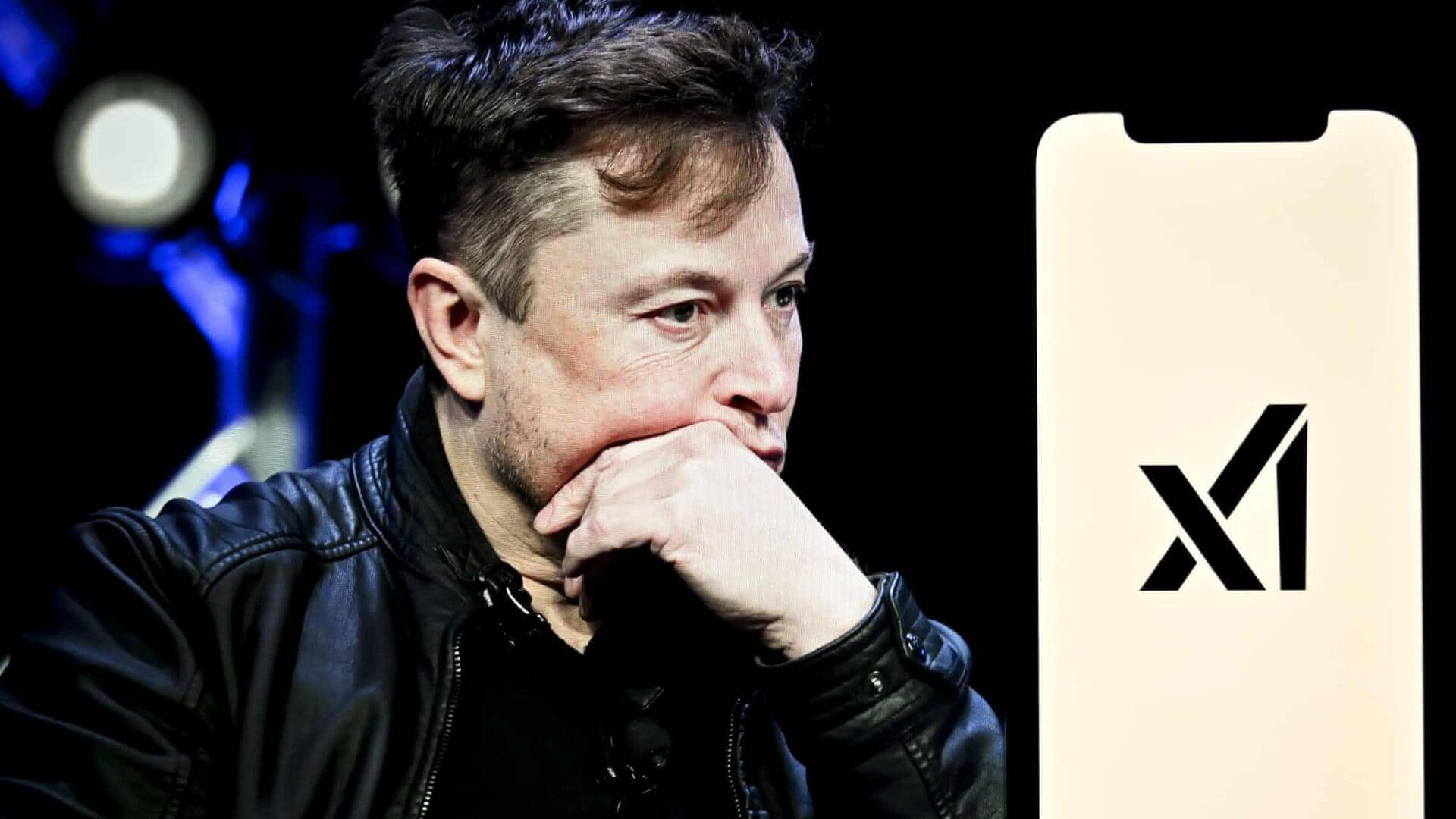

Environmental groups, including the Southern Environmental Law Center, have accused Elon Musk’s xAI of expanding its supercomputing facility in Memphis without proper permits. They allege that the facility, which now uses nearly 35 methane gas turbines, violates the Clean Air Act and poses environmental and public health risks. [AI generated]