The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

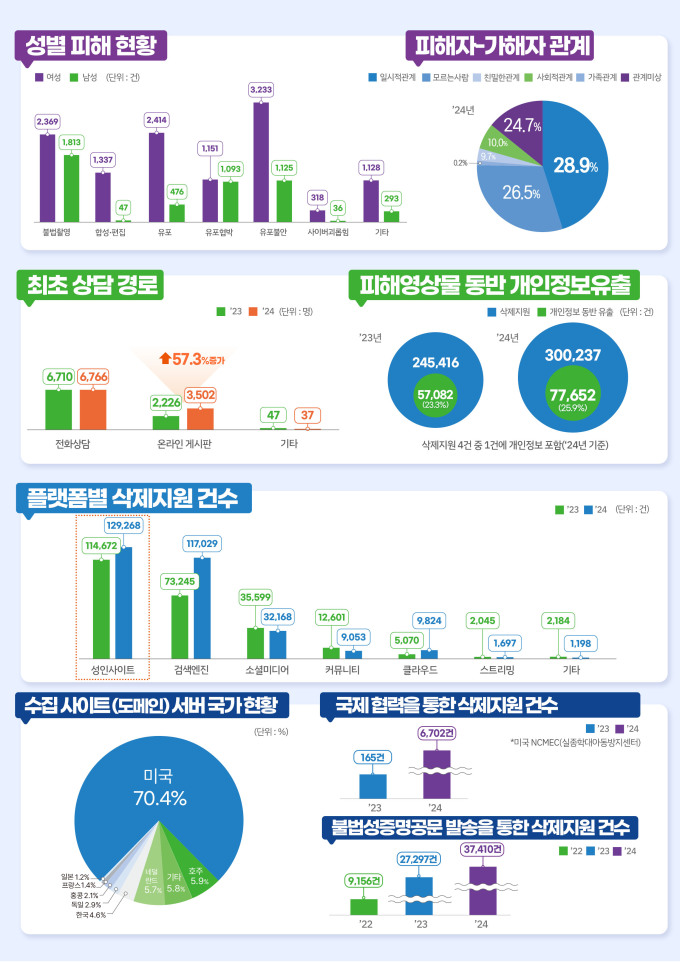

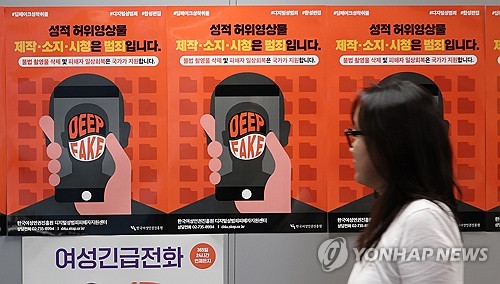

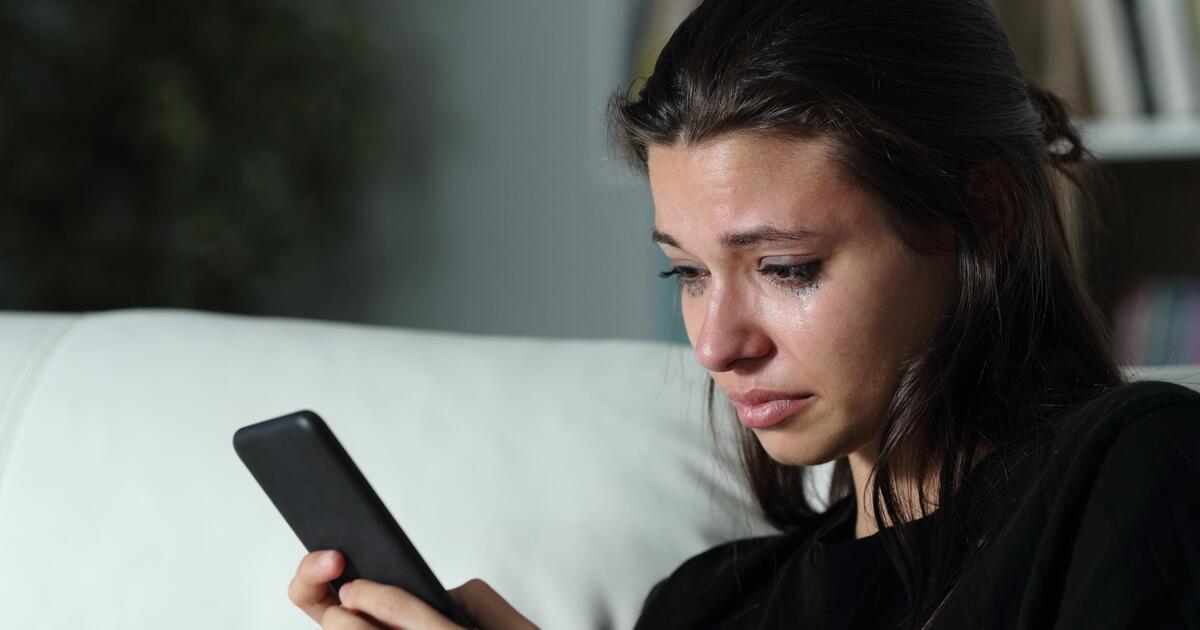

South Korea faces a rising wave of digital sex crimes involving AI-generated deepfakes, which disproportionately target women and young people. As cases increase sharply, more than 10,000 victims have received support in the form of counseling, content deletion, and legal aid. The trend highlights serious human rights violations linked to the misuse of AI technology.[AI generated]