The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

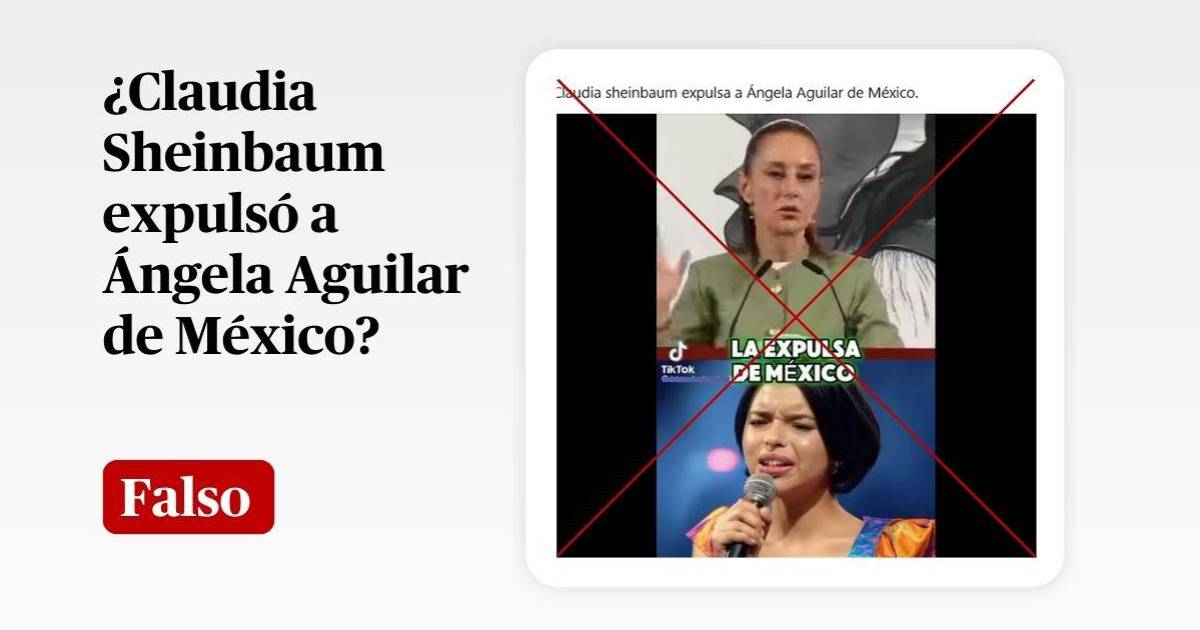

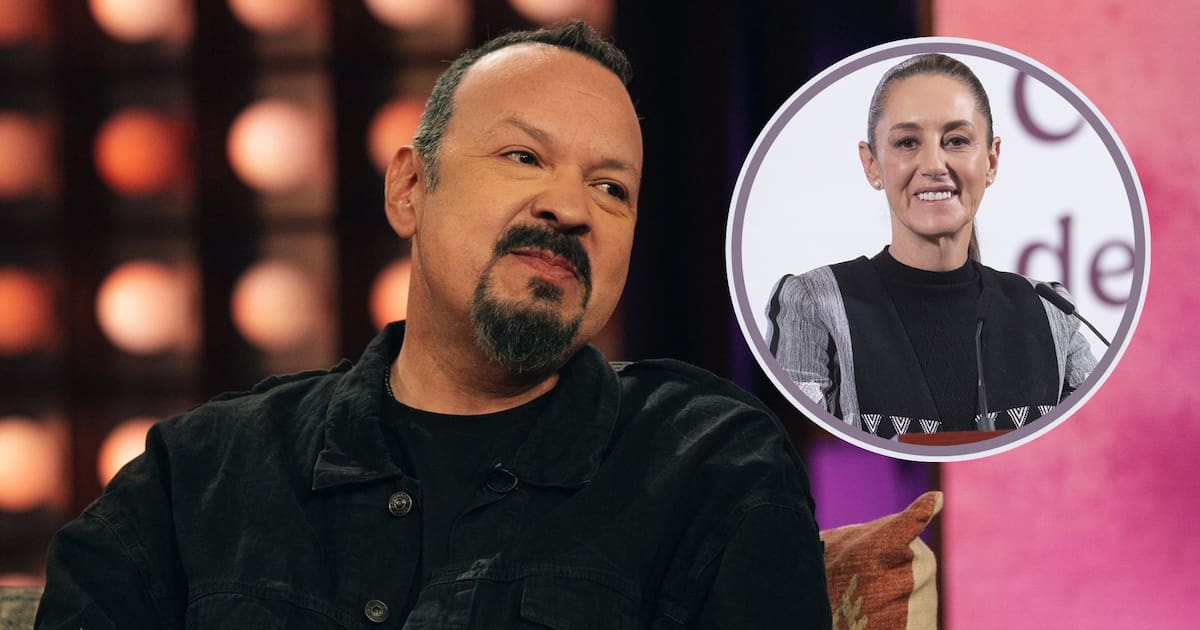

Singer Pepe Aguilar debunked deepfake videos and audio recordings that falsely portrayed him criticizing Mexican President Claudia Sheinbaum and her daughter. He warned about the risks of AI-generated misinformation and urged caution, noting that manipulated media can cause reputational damage and undermine public trust. [AI generated]