The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

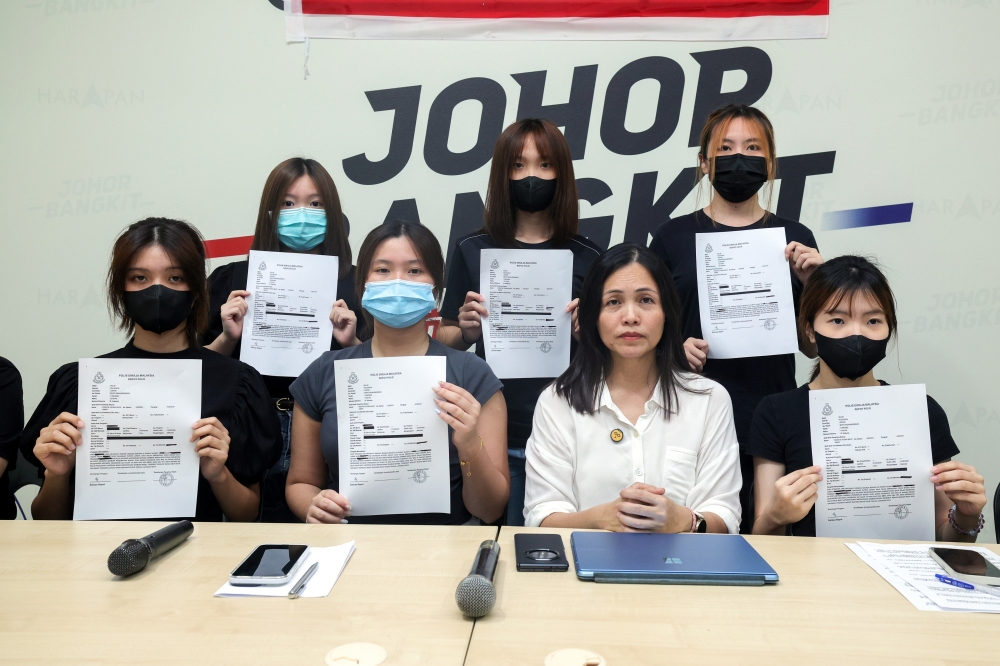

Deputy Communications Minister Teo Nie Ching has called for urgent digital safety reforms in Malaysian schools after AI-generated explicit images of 38 students, some as young as 12, were circulated. The incident highlights the misuse of deepfake technology, constitutes a breach of human rights, and underscores the need for stricter protocols in educational institutions. [AI generated]