The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

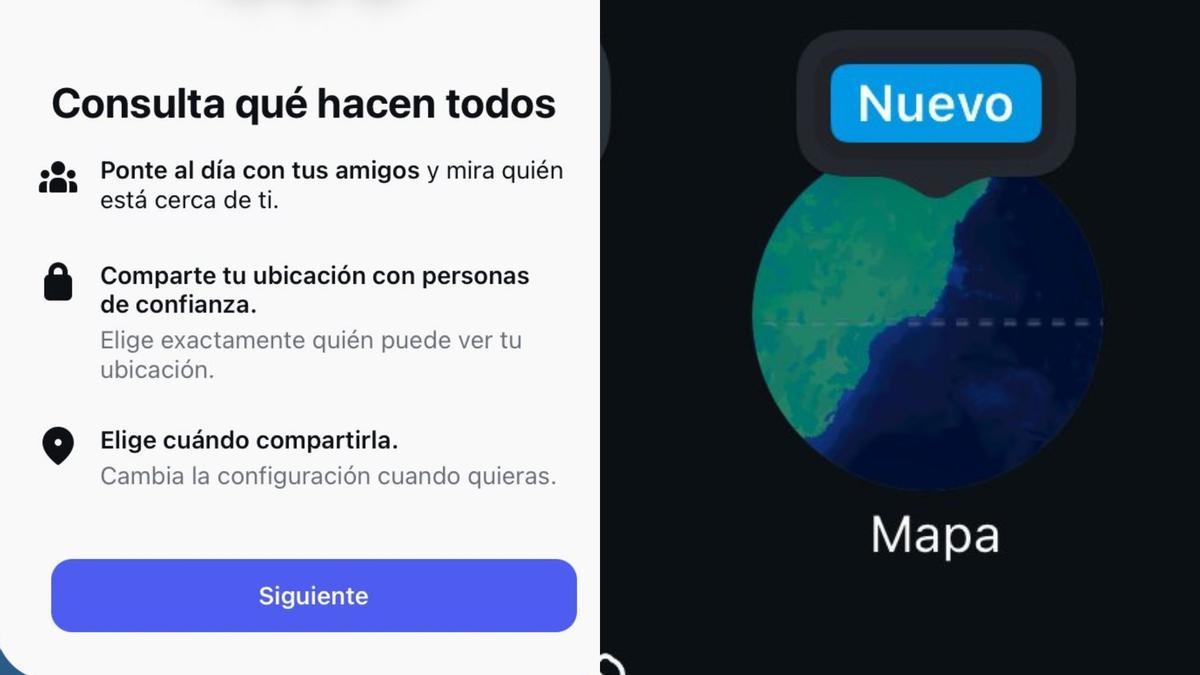

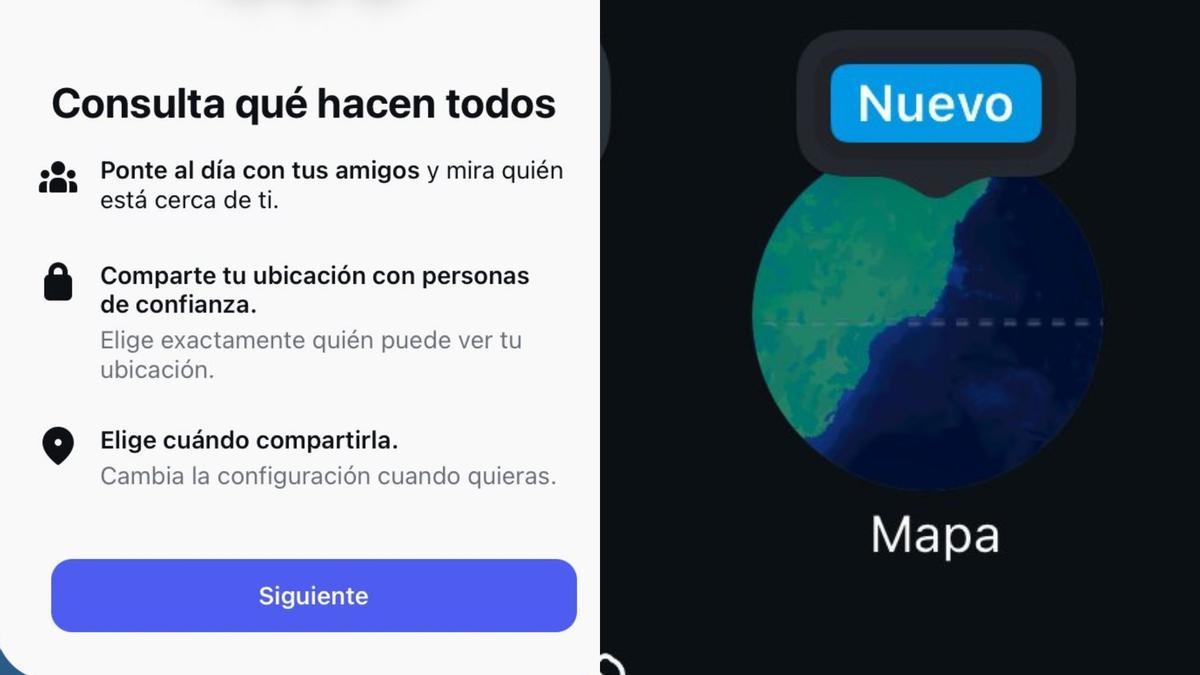

Meta’s Instagram has rolled out a default-enabled “Maps of Friends” feature that automatically shares users' real-time locations with their contacts without prior notification. Users in Latin America, and soon in Spain, have been prompted to report privacy breaches, lack of consent and potential stalking risks. The unwanted location updates can be disabled in the app settings. [AI generated]

Why's our monitor labelling this an incident or hazard?

The event involves a system that automatically updates and shares users' real-time location data with contacts, which can reasonably be inferred to rely on AI-enabled processing of location and social data. While no direct harm is reported, the article emphasizes significant privacy concerns and potential misuse that could lead to violations of privacy rights and harm to users. Since the harm is plausible but not yet realized, and the AI system's role is central to both the feature's operation and its potential risks, this event fits the definition of an AI Hazard rather than an Incident or Complementary Information. [AI generated]