The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

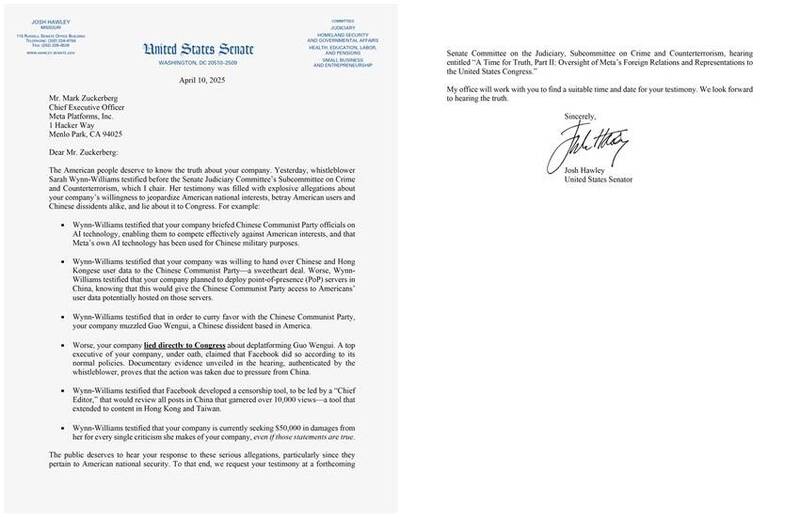

Meta is accused of collaborating with the Chinese government by developing AI-powered tools that censor popular posts from Taiwan and Hong Kong. The allegations, which claim that Mark Zuckerberg was involved, prompted U.S. Senator Josh Hawley to summon him to testify before Congress over potential free speech and human rights violations. [AI generated]