The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

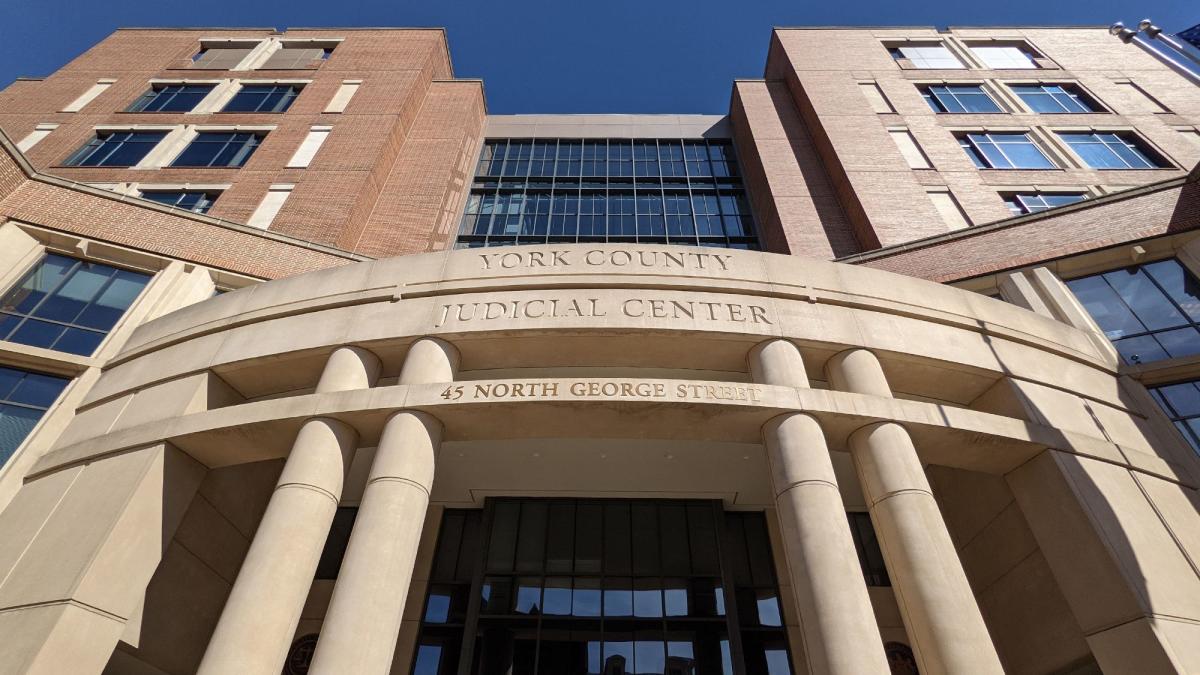

Luke A. Teipel, a 22-year-old from Dallastown, PA, faces 33 felony counts for possessing child sexual abuse material, which includes 29 AI-generated images. The case is the first brought under a new state law targeting AI-generated (synthetic) child sexual abuse material, and it highlights the harmful use of AI to create illegal content.[AI generated]

Why's our monitor labelling this an incident or hazard?

The article explicitly mentions artificially generated child sexual abuse material, indicating that AI systems were used to create illegal content. The possession and alleged distribution of this material violate laws protecting fundamental rights and harm communities and individuals. Because the AI system's role in generating the harmful content is central to the incident, and the legal charges reflect the direct harm caused, this event qualifies as an AI Incident.[AI generated]