The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

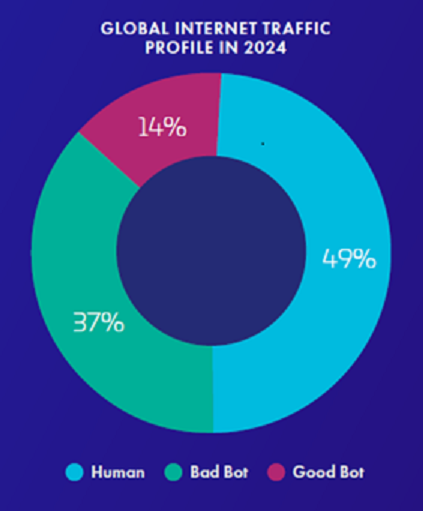

Cybersecurity reports from Imperva and Thales highlight that traffic from AI-driven bots now exceeds human traffic online, accounting for over 50% of global internet activity. The rise of AI-powered bots enables increasingly sophisticated malicious attacks, such as spamming and DDoS, that pose significant risks to online infrastructure. [AI generated]