The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

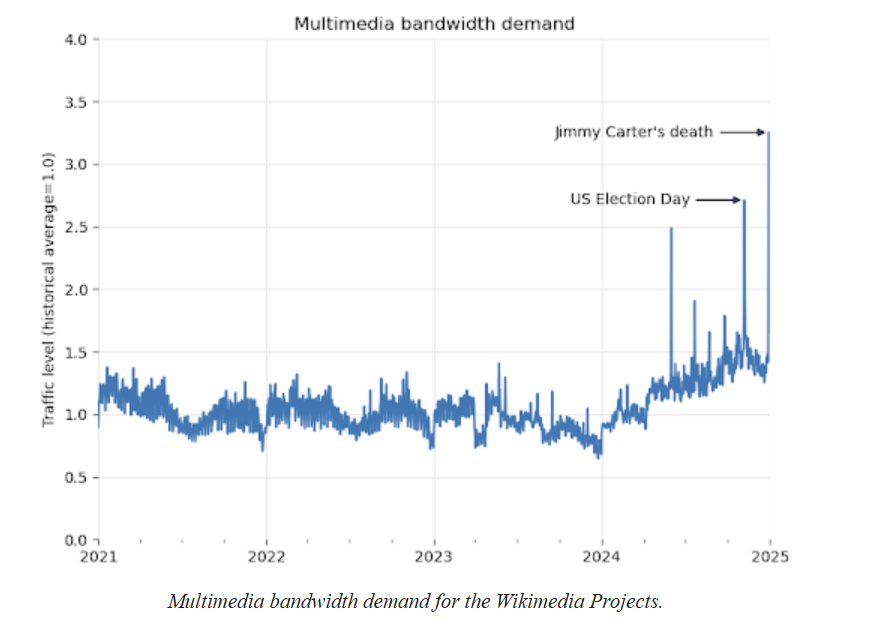

The Wikimedia Foundation has raised alarms over AI training methods that rely on automated web crawlers to extract vast amounts of data, overwhelming the servers of Wikipedia and related projects. This excessive scraping is driving up operational costs and risks disrupting service, highlighting a growing hazard to digital infrastructure.[AI generated]